MonkeyLearn integration with Scrapinghub!

Crawling the web for huge amounts of data is a hard task. You have to deal with a wide range of problems such as extracting specific content from the sites you’re crawling, retrieving new links to follow, storing the data, avoiding getting blocked, and more.

Making sense of all the retrieved data is also damn hard. Let's say that you are scraping product reviews about the Samsung Galaxy S7 and iPhone 6s from different retailers. Are these reviews positive or negative? What do they praise about these smartphones? What are they complaining about?

For the first task, there are great tools like Scrapy, the open source framework for web scraping and crawling. With a few lines of Python code you can build a complex web spider. You can also use services like Scrapinghub, which provides a scalable platform as a service to seamlessly run Scrapy spiders in production. If you are not a coder, you can use a tool like Portia, which lets you select the elements you want to scrape directly in its UI and build a spider without a single line of code.

For the second task, you have tools like MonkeyLearn, a platform that helps you easily perform text analysis using Machine Learning.

Introducing MonkeyLearn Add-on for Scrapinghub

We are very excited to announce the MonkeyLearn integration for Scrapy Cloud. This feature brings machine learning technology to the data that you extract through Scrapy and Portia.

By combining MonkeyLearn and Scrapinghub, you’ll be able to easily build scalable applications that can retrieve and analyze data from the web.

Add-on Walkthrough

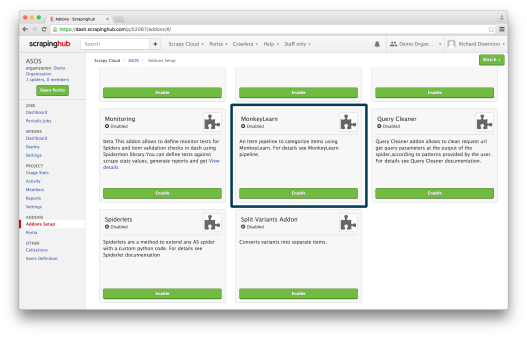

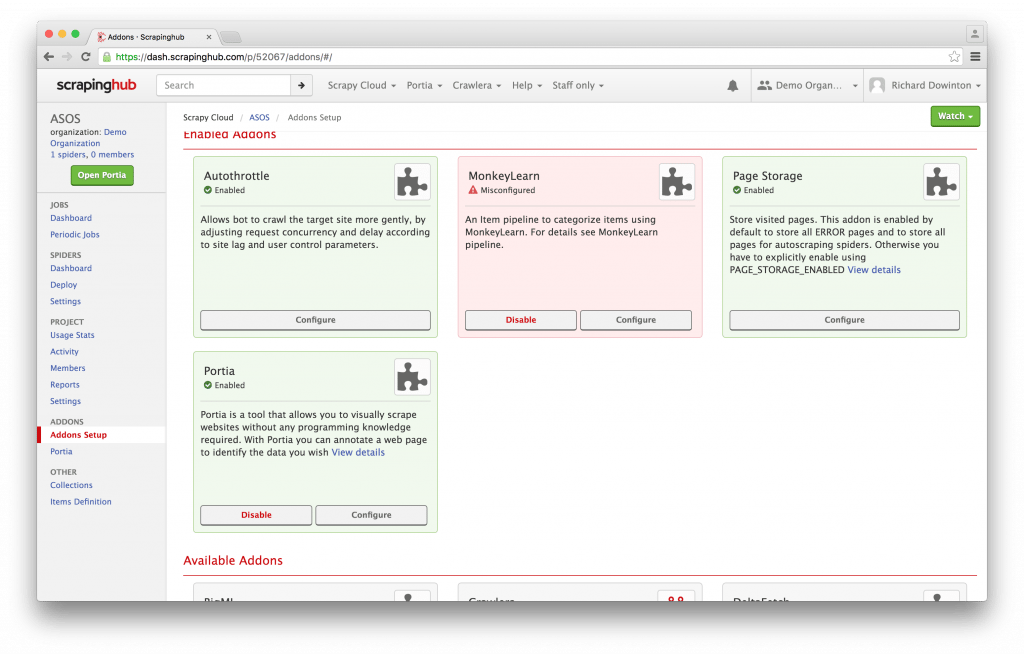

You can access the MonkeyLearn add-on through your dashboard within Scrapinghub. First, you will have to navigate to 'Addons Setup':

Then enable the add-on and click 'Configure':

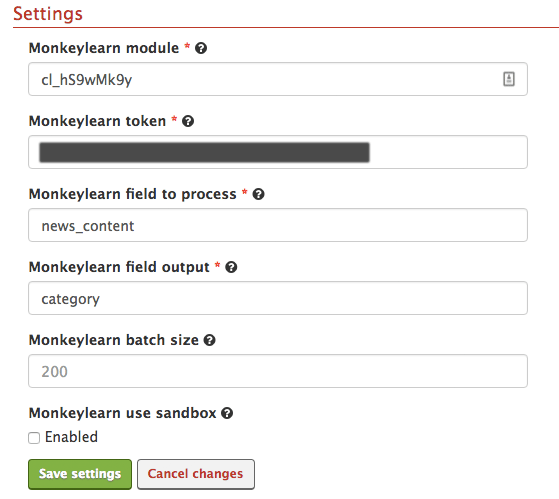

Finally, go to 'Settings' to configure the add-on:

You will need to set your MonkeyLearn API key, specify the classifier you want to use, the field you want to analyze and the field in which you want the result to be stored. MonkeyLearn reads the content from the classifier fields you’ve specified, performs the classification or extraction task on the data, and returns the result of the analysis in the field that you defined as the tag field.

For example, to detect the tag of a news article based on its content, enter the ID of the model you want to use in the first text box (you can use the News Categorizer public model or create your own classifier). Enter your authorization token in the second text box and, in the third, the item field you want to analyze (the content of the article in this case). In the fourth text box, enter the name of the field that will store the results from MonkeyLearn.
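To make the role of these settings concrete, here is a minimal sketch of the read, classify, and write step applied to each item. It is not the add-on's actual code: `annotate_item`, `fake_classifier`, and the field names `content` and `category` are hypothetical, and the classifier is a local stand-in so the example runs without an API key or network access.

```python
from typing import Callable, Dict, List


def fake_classifier(text: str) -> List[str]:
    """Hypothetical stand-in for a MonkeyLearn classifier (no network call)."""
    lowered = text.lower()
    if "election" in lowered:
        return ["Politics"]
    if "match" in lowered:
        return ["Sports"]
    return ["Other"]


def annotate_item(
    item: Dict[str, object],
    classify: Callable[[str], List[str]],
    field_to_analyze: str,
    tag_field: str,
) -> Dict[str, object]:
    """Return a copy of the item with the classifier result stored in tag_field."""
    text = item.get(field_to_analyze, "")
    result = dict(item)  # leave the scraped item untouched
    # An empty or missing field gets an empty tag list instead of a classifier call.
    result[tag_field] = classify(str(text)) if text else []
    return result


article = {
    "title": "Results are in",
    "content": "The election results were announced today.",
}
tagged = annotate_item(article, fake_classifier, "content", "category")
print(tagged["category"])  # ['Politics']
```

In the real add-on, the model ID and authorization token decide which MonkeyLearn model performs the classification; the two field names play the same role as `field_to_analyze` and `tag_field` here.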

And you’re all done! You can start scraping and analyzing web content quickly and easily.

Using MonkeyLearn with Scrapy and Portia

Since the MonkeyLearn add-on is a part of Scrapy Cloud, it works with both Scrapy (Python-based web scraping framework) and Portia (visual web scraping tool). These data extraction tools are both open source, so you can easily run both on your own system.

The add-on means you don’t need to worry about learning MonkeyLearn’s API and how to route requests manually. If you need to use MonkeyLearn outside of Scrapy Cloud, you can use the middleware for the same purpose.
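For readers curious where such a step plugs into a plain Scrapy project, the sketch below shows the general shape of an item pipeline, which Scrapy calls through `process_item(item, spider)` for every scraped item. This is an illustration only, not the MonkeyLearn middleware: the class name `ClassifyPipeline`, its constructor arguments, and the toy classifier are all assumptions.

```python
class ClassifyPipeline:
    """Hypothetical Scrapy-style item pipeline (not the MonkeyLearn middleware)."""

    def __init__(self, classify, field_to_analyze, tag_field):
        self.classify = classify              # callable: text -> list of tags
        self.field_to_analyze = field_to_analyze
        self.tag_field = tag_field

    def process_item(self, item, spider):
        # Scrapy passes each scraped item through this method.
        text = item.get(self.field_to_analyze)
        if text:
            item[self.tag_field] = self.classify(text)
        return item


def toy_classifier(text):
    """Hypothetical classifier used only for this example."""
    return ["Tech"] if "phone" in text.lower() else ["Other"]


pipeline = ClassifyPipeline(toy_classifier, "review", "topic")
processed = pipeline.process_item({"review": "Great phone, weak battery"}, spider=None)
print(processed["topic"])  # ['Tech']
```

In a real project the pipeline would be enabled through the `ITEM_PIPELINES` setting and the classifier would call MonkeyLearn instead of a local function.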

Final words

We're super excited about this integration because it opens endless possibilities for developers to build all kinds of cool apps.

We’ll be creating a series of tutorials with our partners at Scrapinghub that will help you make the most out of this integration and use this add-on for topic detection, sentiment analysis, keyword extraction or any custom classification on the data that you scrape from the web. Stay tuned!

Federico Pascual

April 14th, 2016