Guide to Data Labeling for AI

Data labeling is time-consuming and tedious, but it’s essential if you want to get the most out of your machine learning and AI tools.

You likely have huge amounts of unlabeled data, and perhaps you’re struggling with your tagging taxonomy – the labels you’ll use to tag your data – or wondering how to measure the accuracy of human data labelers.

Well, you’re not alone. Data labeling is hard. That’s why it’s a task often delegated to specialized data scientists, to ensure that labels are as precise, accurate, and consistent as possible. If they’re not, your data will be skewed and AI models won’t be able to make accurate predictions – they need to be taught properly in order to learn.

In this guide you’ll learn the definition of data labeling and discover some best practices on how to get started with data labeling for artificial intelligence.

What Is Data Labeling?

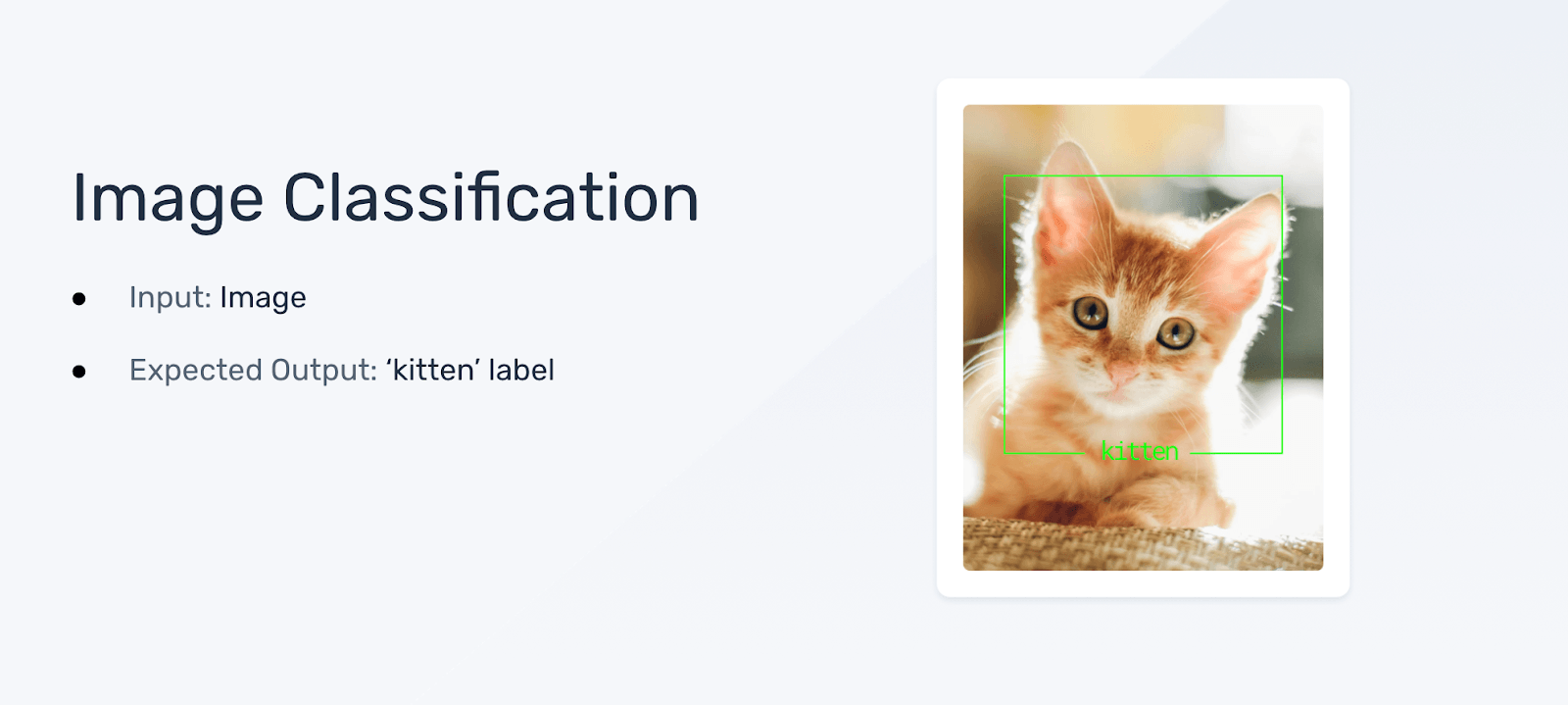

Data labeling, also known as data annotation, is the process of manually tagging data (images, text, audio, etc.) to describe what it is so that computers can process or “understand” it. Properly labeled data is needed to train AI and machine learning algorithms so that they can learn how one piece of data relates to the next.

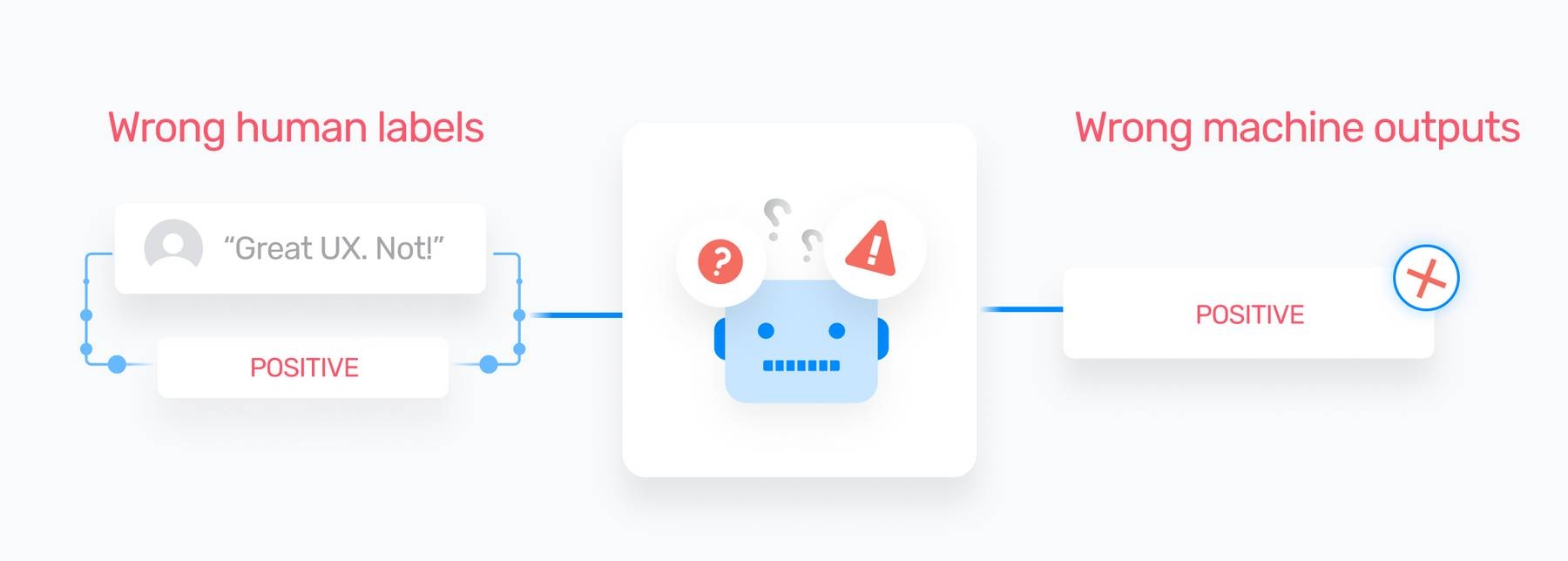

There’s a common expression in data science: “garbage in, garbage out.” It’s pretty self-explanatory: if your input data is flawed, then your output will be, well, garbage.

That’s why you need a good labeled dataset and a foolproof taxonomy. Whether you tag by category, subject, topic, or anything else, your tagging needs to be as accurate as possible, or the AI system won’t be able to learn.

Why Is Data Labeling Important?

A report by The Everest Group found that the pandemic has significantly accelerated the pace of AI adoption – by 32% – so data labeling is more important than ever. Before an AI system can identify images or analyze text on its own, it must be “trained” with hand-labeled examples. In the case of self-driving cars, that means manually labeling millions of images and videos.

Let’s imagine you want to train a sentiment analysis model. You’ll need to feed the AI model labeled examples (or “training data”) of positive, negative, and neutral sentiment. And beyond that, you’ll need to include sometimes ambiguous phrases that demonstrate human language at its most complex level, like sarcasm and irony – some of the most difficult sentiments for a machine, or even humans, to detect.

Good quality training data is key to determining the success of AI tools. It must be relevant, free from noise (like errors, duplicates, and irrelevant data) and it must be labeled correctly. Get your training data and labels in order and you’ll be able to rely on this information to improve your products, services, and everyday processes.
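As a rough illustration of removing noise before training, here is a minimal Python sketch. It assumes your training data is a list of (text, label) pairs and that you already have a set of allowed tags; the function name `clean_training_data` and the sample rows are hypothetical, not part of any particular tool.

```python
def clean_training_data(rows, allowed_labels):
    """Drop empty texts, unknown labels, and duplicate texts.

    rows: iterable of (text, label) pairs (an assumed format).
    allowed_labels: set of tags from your taxonomy.
    """
    seen = set()
    cleaned = []
    for text, label in rows:
        # Normalize whitespace and case so near-identical copies match.
        normalized = " ".join(text.split()).lower()
        if not normalized:
            continue  # empty text: nothing to learn from
        if label not in allowed_labels:
            continue  # label is outside the taxonomy: likely an error
        if normalized in seen:
            continue  # duplicate of an example we already kept
        seen.add(normalized)
        cleaned.append((text.strip(), label))
    return cleaned


# Hypothetical sample data for a sentiment model.
rows = [
    ("Great app!", "positive"),
    ("great  app!", "positive"),   # duplicate after normalization
    ("", "neutral"),               # empty text
    ("It is fine", "unknown"),     # label not in the taxonomy
]
print(clean_training_data(rows, {"positive", "negative", "neutral"}))
```

This only catches the simplest kinds of noise. Deciding whether an example is relevant or correctly labeled still needs human review.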

Data Labeling Challenges

You’ll face several challenges when labeling your data:

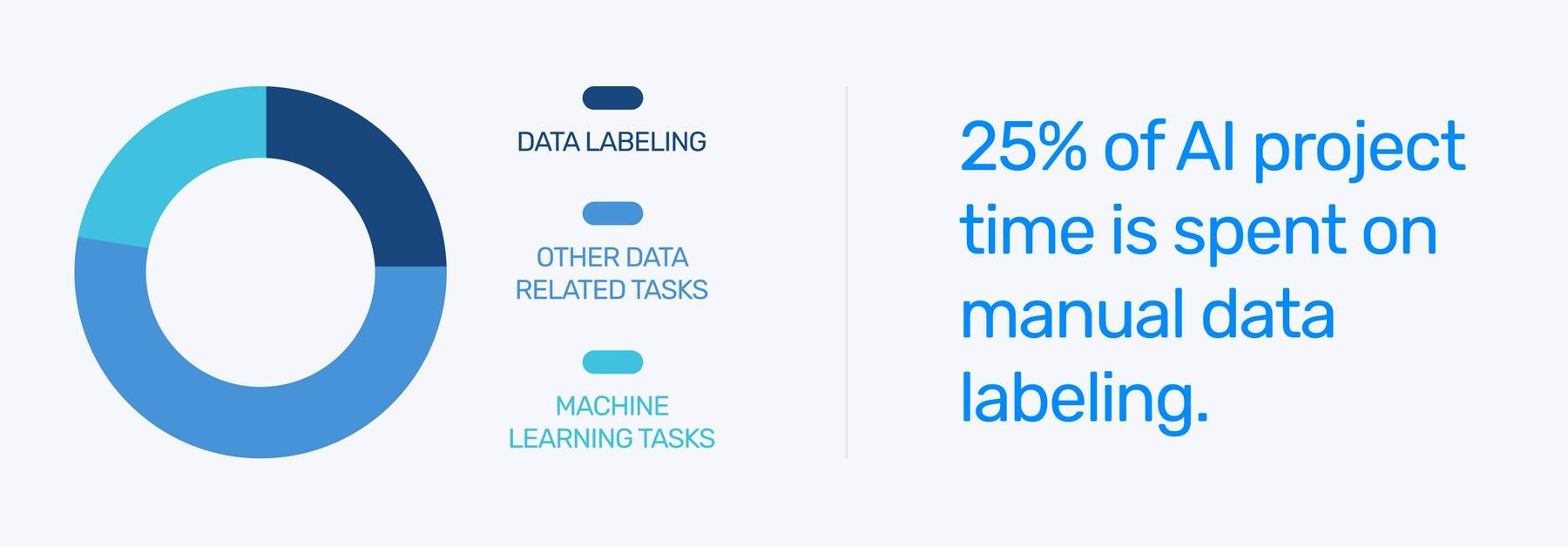

- Time-consuming: Most AI project time is spent on data-related tasks (collecting, preparing, and labeling data). You’ll need several expert human labelers on board, which can be expensive, so you’ll want to get your data labeling right the first time around.

- Inconsistency: Labelers bring different life experiences to the task, so they interpret the criteria differently and apply labels inconsistently.

- Humans are error-prone: Even the same human tagger will label data inconsistently. But there is a way to measure how consistent your labels are. Data scientists use a calculation known as Krippendorff’s Alpha for quality assurance. It estimates the level of agreement between data labelers, so you can fine-tune your criteria as you continue to train your models.
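To make the agreement measure above more concrete, here is a minimal Python sketch of Krippendorff’s Alpha for nominal (unordered) tags, such as sentiment labels. It assumes each item is represented as a list of the labels it received, with `None` where a labeler skipped the item. The function name and the sample ratings are illustrative only.

```python
from collections import Counter
from itertools import permutations


def krippendorff_alpha_nominal(units):
    """Krippendorff's Alpha for nominal labels.

    units: list of lists; each inner list holds the labels one item
    received from different labelers (None = no label given).
    """
    # Coincidence matrix: every ordered pair of labels within an item,
    # weighted by 1 / (number of labels on that item - 1).
    coincidences = Counter()
    for labels in units:
        values = [v for v in labels if v is not None]
        m = len(values)
        if m < 2:
            continue  # a single label says nothing about agreement
        for a, b in permutations(values, 2):
            coincidences[(a, b)] += 1 / (m - 1)

    # Marginal totals per label and overall number of pairable labels.
    totals = Counter()
    for (a, _b), weight in coincidences.items():
        totals[a] += weight
    n = sum(totals.values())
    if n <= 1:
        raise ValueError("need at least one item with two or more labels")

    observed = sum(w for (a, b), w in coincidences.items() if a != b)
    expected = sum(
        totals[a] * totals[b] for a in totals for b in totals if a != b
    ) / (n - 1)
    if expected == 0:
        raise ValueError("alpha is undefined when only one label is used")
    return 1 - observed / expected


# Hypothetical ratings: four items, up to three labelers each.
ratings = [
    ["positive", "positive", "positive"],
    ["negative", "negative", "neutral"],
    ["neutral", "neutral", None],
    ["positive", "negative", "positive"],
]
print(f"Krippendorff's alpha: {krippendorff_alpha_nominal(ratings):.3f}")
```

A value of 1 means perfect agreement, and a value near 0 means labelers agree no more often than chance would predict. If your score is low, that is usually a sign the tag definitions need tightening.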

How to Label Your Data: Some Best Practices

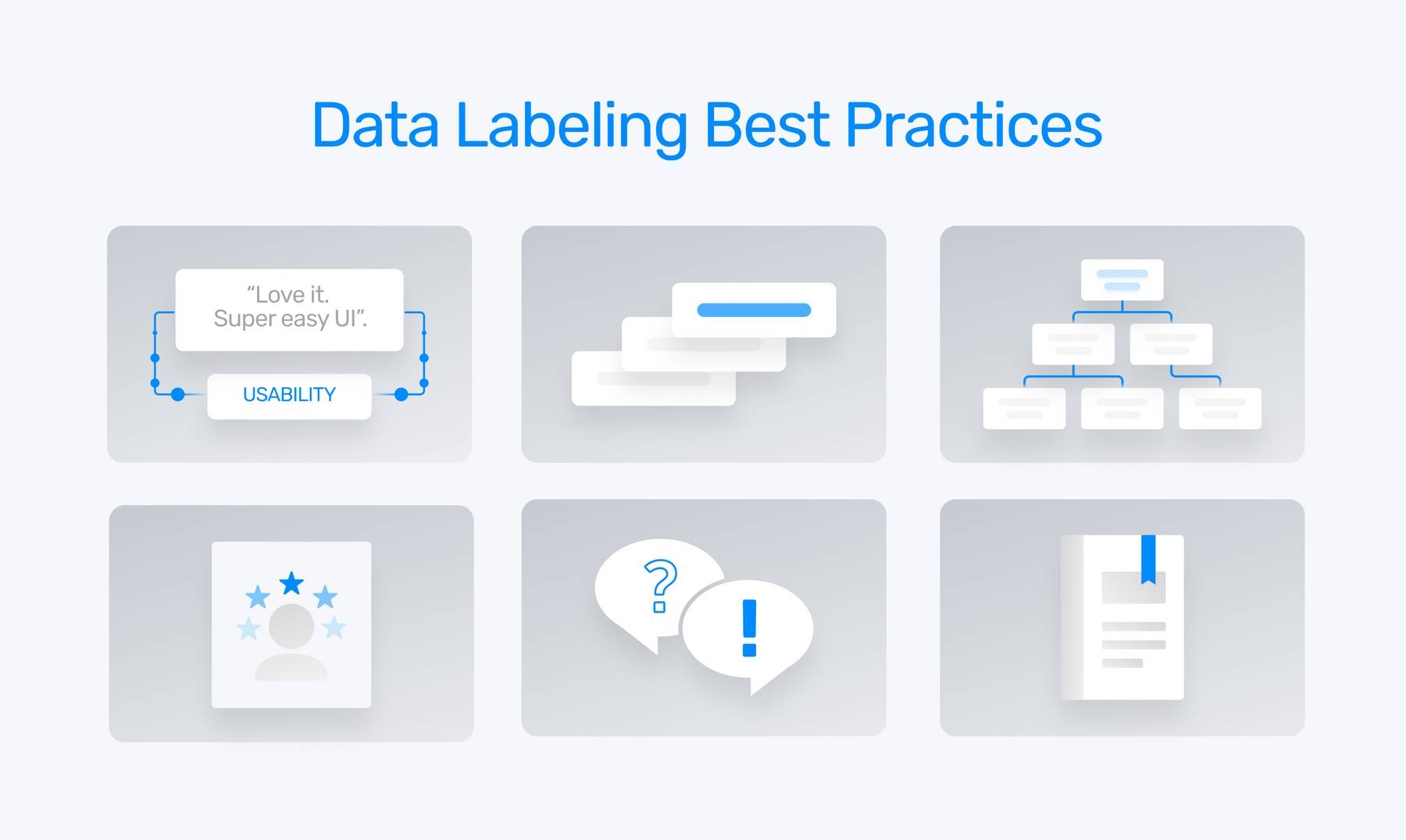

Follow these best practices to overcome some of the challenges of manually labeling your data:

1. Create a solid tagging taxonomy that’s unique to your business

There are two types of taxonomy you can create: a flat taxonomy and a hierarchical taxonomy.

A flat taxonomy is good for companies with lower-volume data, few products, or very clearly delineated departments and feedback/data classes.

A hierarchical taxonomy allows for much more specificity with the ability to add new tags. This taxonomy structure is good for companies with large amounts of data, working with multiple products or in more than one industry.

2. Keep tags to a maximum of 10

Start with no more than 10 tags and evolve your tagging structure over time. This helps your data labelers learn the tags and their definitions, and avoids confusion or overlap between tags.

3. Determine the granularity of data

Identify the granularity of the data you’re analyzing: whole documents or websites, paragraphs, or sentences. This will help determine the complexity of your taxonomy.

4. Choose representatives who are experts in the field

Data labelers will find it easier to tag data if they understand industry-specific or complex language.

5. QA test your taxonomy

Include a round of QA to ensure your taxonomy is appropriate and human labelers are consistently working to your criteria.

6. Create an annotation handbook

Clearly define your tagging criteria in an in-depth annotation handbook. Include detailed examples of correct labels, incorrect labels, and edge cases.
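The flat and hierarchical taxonomies from step 1, and the tag cap from step 2, could be sketched in Python like this. Apart from “Ease of Use,” the tag names are made up for illustration. Applying the cap of 10 to the lowest-level tags of a hierarchy is an assumption, and `validate_taxonomy` is a hypothetical helper rather than part of any tool.

```python
MAX_TAGS = 10  # starting cap from step 2; adjust as your taxonomy evolves

# Flat taxonomy: a single list of tags.
flat_taxonomy = ["Ease of Use", "Pricing", "Customer Support"]

# Hierarchical taxonomy: parent categories mapped to more specific tags.
hierarchical_taxonomy = {
    "Product": ["Ease of Use", "Features"],
    "Billing": ["Pricing", "Refunds"],
}


def leaf_tags(taxonomy):
    """Return the tags labelers actually apply (the lowest level)."""
    if isinstance(taxonomy, dict):
        return [tag for children in taxonomy.values() for tag in children]
    return list(taxonomy)


def validate_taxonomy(taxonomy, max_tags=MAX_TAGS):
    """Return a list of problems; an empty list means the checks passed."""
    tags = leaf_tags(taxonomy)
    problems = []
    if len(tags) > max_tags:
        problems.append(f"{len(tags)} tags exceeds the cap of {max_tags}")
    duplicates = sorted({tag for tag in tags if tags.count(tag) > 1})
    if duplicates:
        problems.append(f"duplicate tags: {', '.join(duplicates)}")
    return problems


print(validate_taxonomy(flat_taxonomy))
print(validate_taxonomy(hierarchical_taxonomy))
```

A check like this can run as part of the QA round in step 5, so that a taxonomy change that adds too many tags or repeats a tag is caught before labelers start using it.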

Benefits of Automated Data Labeling

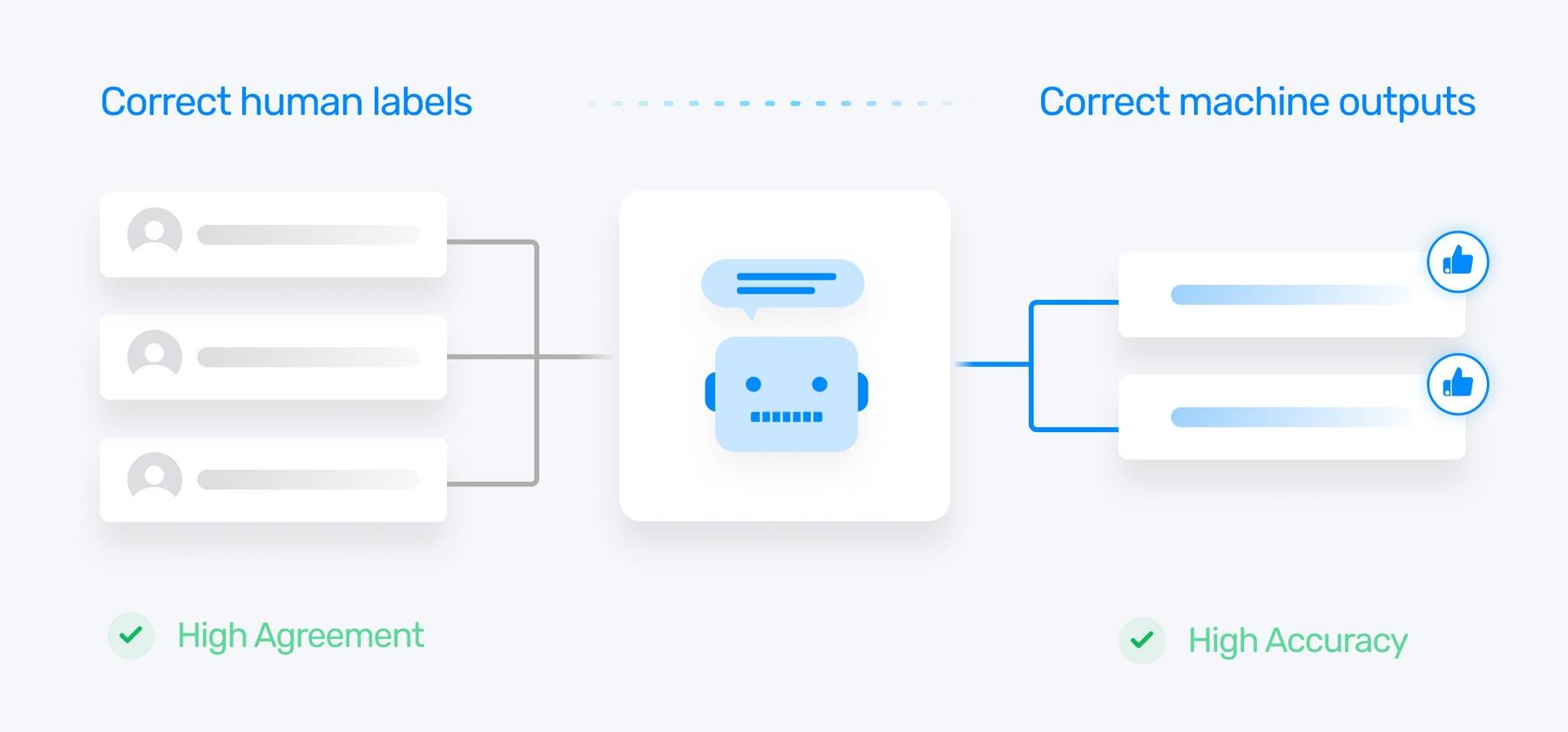

As we mentioned earlier, manually labeling data is time-consuming, but once you’ve correctly labeled your data, you can hand this task over to machines. With SaaS text analysis tools, models can begin to learn after training on only dozens or hundreds of examples.

MonkeyLearn, for example, will start automatically tagging your data with just 20 labeled samples for each tag. That’s not to say it will be entirely accurate, but you can keep manually tagging data until your supervised learning models register a high accuracy score for each tag.

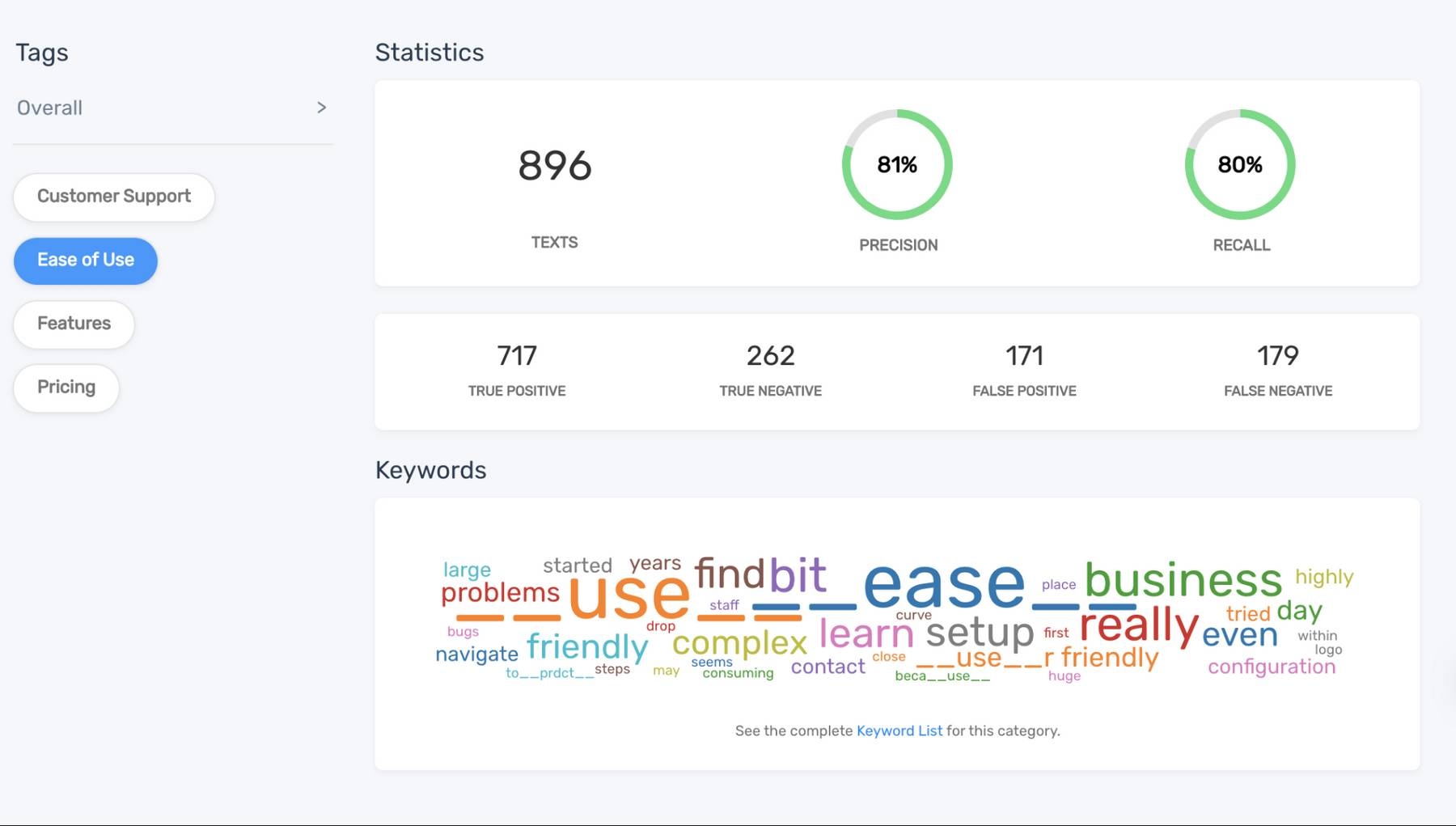

Take a look at the statistics from MonkeyLearn’s NPS survey classifier, below. The model has seen nearly 900 data samples that represent the tag Ease of Use, resulting in a high precision score of 81%.
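For reference, precision for a tag is the share of items the model assigned that tag to that really belong to it. Here is a minimal Python sketch of that calculation, assuming you have a list of human-assigned tags and a matching list of model predictions. The function name and sample data are hypothetical and do not reflect how MonkeyLearn computes its statistics internally.

```python
def precision_for_tag(true_labels, predicted_labels, tag):
    """Precision = correct predictions of `tag` / all predictions of `tag`."""
    # Human labels for every item the model tagged with `tag`.
    truths_where_predicted = [
        truth
        for truth, predicted in zip(true_labels, predicted_labels)
        if predicted == tag
    ]
    if not truths_where_predicted:
        return None  # the model never predicted this tag
    correct = sum(1 for truth in truths_where_predicted if truth == tag)
    return correct / len(truths_where_predicted)


# Hypothetical labels for four survey responses.
human = ["Ease of Use", "Pricing", "Ease of Use", "Pricing"]
model = ["Ease of Use", "Ease of Use", "Ease of Use", "Pricing"]
print(precision_for_tag(human, model, "Ease of Use"))
```

In this sample the model predicted Ease of Use three times and was right twice, so precision for that tag is two out of three.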

Interested in learning more about machine learning scores? Read our guide on understanding classifier statistics.

Now, let’s take a look at the overall benefits of automated data labeling:

- Huge cost savings: Machine learning can scale up or down immediately, so you only pay for the analysis you need.

- Reduce employee churn: Cut down on boredom and let your teams focus on more fulfilling tasks.

- Improved accuracy and consistency: Machine learning models tag data consistently using a single set of rules.

Get Started with Data Labeling with AI

Do you have a data labeling team and taxonomy in place? Sign up to MonkeyLearn for free or request a demo to put your data labeling structure to work.

Tobias Geisler Mesevage

March 4th, 2021