Mechanical Turk 101: How to use MTurk for tagging training data

Accurate human-annotated data is one of the most valuable resources for machine learning. Many machine learning algorithms rely on large corpora of tagged data (i.e., large collections of annotated texts) to perform tasks such as classification or information retrieval.

If you plan to train machine learning algorithms for personal or professional use, collecting data is usually not the problem: chances are you have already collected all of the data you will need. However, that data is very likely untagged, which makes it close to useless for supervised machine learning tasks.

Therefore, you will have to either tag the data yourself or hire someone to do it for you. If you decide on the latter, here’s a word of warning: in-house annotation of data is expensive and time-consuming.

In this guide, we will go over how to use Mechanical Turk to tag data for machine learning models. We’ll help you get started with the platform and provide you with some tips and suggestions on best practices.

We are going to talk about all of the following:

- How hard and expensive tagging data might be;

- How to get started with MTurk;

- Some best practices for the design of MTurk projects;

- How to publish projects and evaluate their results;

- Some considerations on the interaction with Turkers.

Let’s get started!

Tagging data is hard

Here is an example of a multi-label annotation task. Take a look at the text below, a random answer from an NPS survey:

When first using the Discovery product in the Adaptive Suite, I found it to be a little difficult to maneuver, both due to slow load speeds as well as a non-intuitive interface. I found that after working in it a while, taking a couple of tutorials and reading through the user guide, I was able to maneuver through it better. The connection speed issues also seem to have been resolved since it's introduction. Despite those initial cons, the product provides immense opportunity for managers to view important operational metrics in a useful and eye-pleasing manner. It can bring metrics to your users in a way that works for them, as it is flexible to adapt to what is needed for multiple types of operations. The customer service from Adaptive is also very receptive and helpful. They are very attentive to your needs and have good follow up. Great Product overall.

Now decide if the product review mentions one or more of the following:

- Features of the product or service (e.g., integrations with other products or services, specific aspects of a problem that the product or service can solve, etc.);

- The ease/difficulty of use of the product or service (e.g., bugs, speed, etc.);

- The price of the product or service or its value for money;

- References to the customer service of the product or service.

How long did it take you to solve this annotation task? Maybe 10 seconds? 20 seconds? Maybe even more than that.

How expensive is it to tag data?

Let’s do the maths. A worker who earns the minimum wage in San Francisco (~USD 13 per hour) and spends 20 seconds per sample will annotate 180 samples per hour. That works out to about USD 0.07 per sample, so if you hired the worker, you would have to spend roughly USD 72 per 1,000 samples.
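
The arithmetic above can be written out as a short Python sketch. The function name and its parameters are illustrative only; the wage and the time per sample are the estimates used in this post.

```python
def cost_per_thousand(hourly_wage_usd: float, seconds_per_sample: float) -> float:
    """Return the labour cost in USD of annotating 1,000 samples."""
    samples_per_hour = 3600 / seconds_per_sample
    cost_per_sample = hourly_wage_usd / samples_per_hour
    return 1000 * cost_per_sample


# Figures from the text: ~USD 13 per hour, 20 seconds per sample.
print(round(cost_per_thousand(13, 20), 2))
```

Changing the second argument shows how sensitive the cost is to the time each sample takes, which is why it is worth timing yourself on a handful of real samples first.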

Depending on the type of machine learning tasks at hand and how quickly you want to get the tasks done, you might need to annotate more data than your company can deal with in-house.

Say, for example, that you want to build a classifier with comments from an NPS survey like the one above. In order to get good results, you will need thousands of high-quality human-annotated samples.

With Amazon Mechanical Turk, you can get that many samples annotated within minutes at similar rates. You will still have to take some time to evaluate the quality of the data you receive, but the annotation process will be much quicker.

What is Mechanical Turk?

Mechanical Turk (MTurk) is a platform by Amazon where requesters (i.e., people who need to solve tasks that require human participation) pay workers (also known as Turkers) to complete human intelligence tasks (HITs) by working on micro-tasks, or assignments. That’s it.

As a requester, you will be able to do all of the following:

- Design projects for Turkers to tag the data in your HITs;

- Manage the results of your HITs;

- Grant or revoke qualifications to workers according to their performance on your HITs.

How do I get started with Mechanical Turk?

Register as a requester

The first thing you need to do in order to submit HITs is to sign up as a requester. You can do so here.

Define your task

The second thing, which is probably the most important of all, is to take some time to define the task you will have Turkers work on. Ask yourself the following questions:

What type of task is it?

- Is it a single or multi-label annotation task?

- Is it a writing task?

- Is it a translation task?

What qualifications should Turkers have in order to do the task successfully?

- Should they speak a certain language?

- Should they be accurate?

- Should they be fast?

What should the sociodemographics of Turkers be for the task at hand?

- Do Turkers need to belong to a certain age group?

- Do Turkers need to live in a certain country?

Last, but not least, how much are you willing to pay Turkers for each HIT?

This blog post will focus on the tasks MonkeyLearn users will need the most, that is, annotation tasks. Here's more information on other types of tasks Mechanical Turk can help you solve.

Create your project

The third step to submitting HITs to MTurk is creating a project. You can start a new project here. The process consists of three simple steps:

- Choose the type of project you will create from a list given by MTurk. This will create a default design for your project -which you will be able to change later, of course;

- Set the properties of your project, the properties of your HIT, and the worker requirements;

- Create an HTML design for your project -or change the one created by default.

Let’s go over each of them in turn.

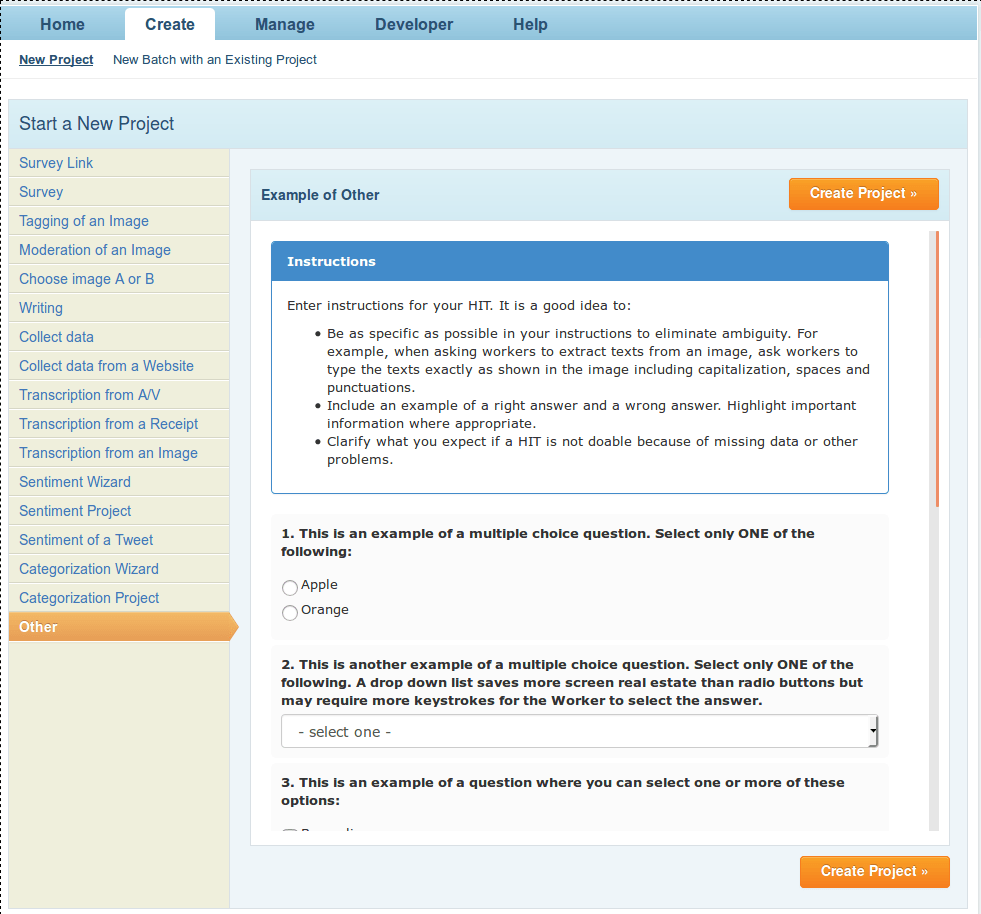

Choose the type of project

Create Project Wizard

MTurk provides a variety of project types with design templates to make it easy for requesters to get started. Each type of project comes with a default project design. Take a few minutes to go through the list and choose the one that best fits your task. You will be able to change the design later.

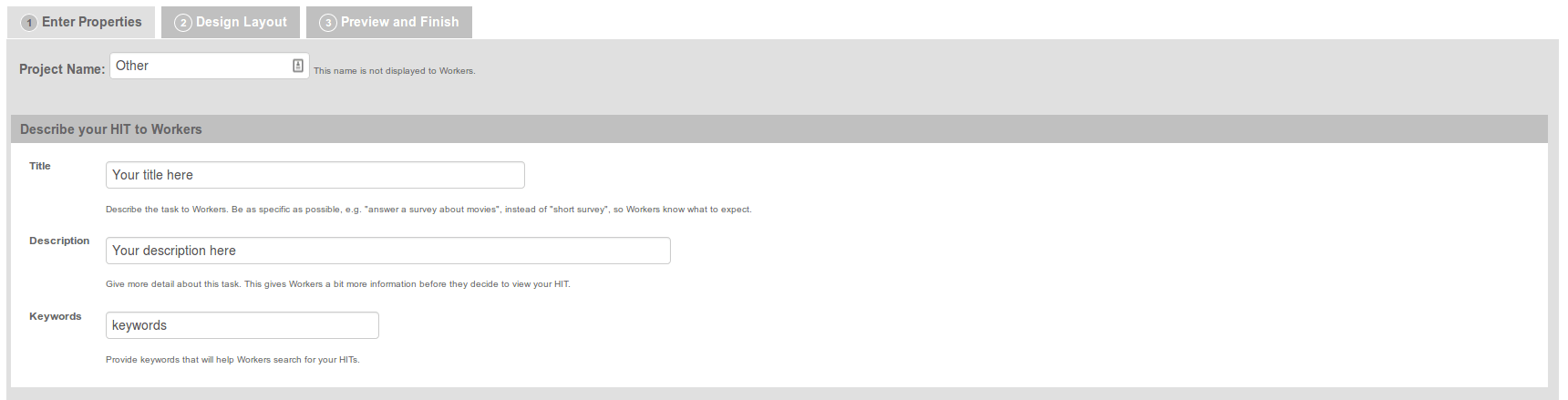

Set project properties, HIT properties, and worker requirements

As you can see in the image below, once you have chosen the type of project you will create, you will have to give it a name, a title, a description, and keywords.

Project Properties

Turkers will see the title, the description, and the keywords when they work on your HITs. They will not see the project name. It is a good idea to state in the title how long the assignments are expected to take.

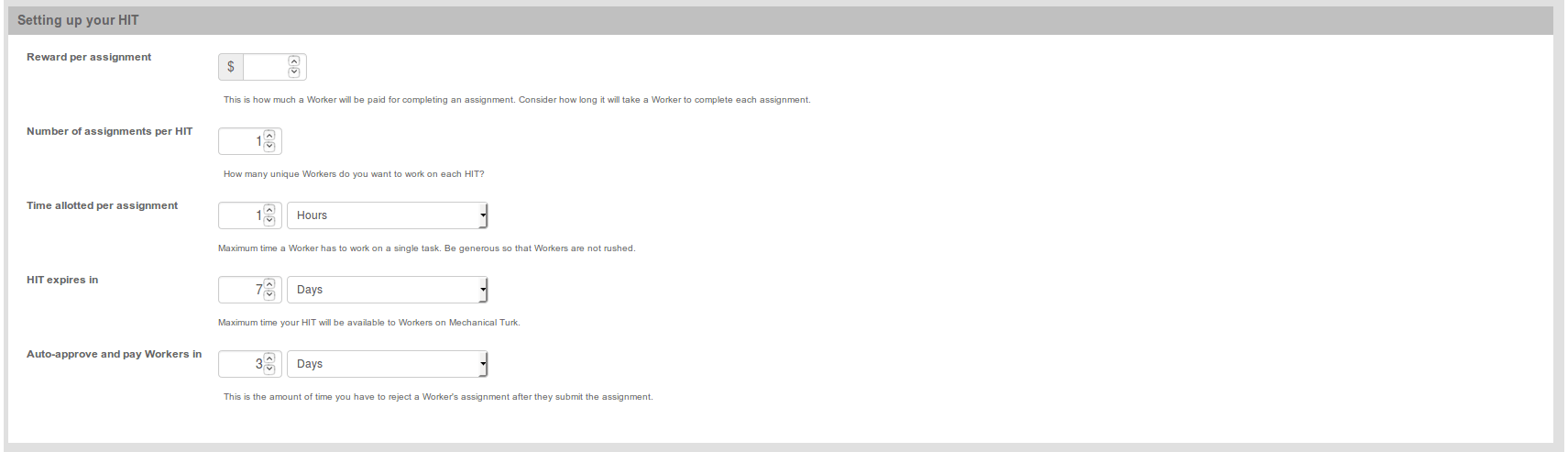

The image below shows the HIT properties you have to set.

HIT Properties

Reward per assignment

The reward per assignment is how much you will pay Turkers for each assignment they complete. If you are unsure about how much to pay, calculate the amount so that a worker who works full-time (i.e., 8 hours per day) on tasks like yours can earn at least the minimum wage in the San Francisco area. Bear in mind that you should pay more for difficult tasks, since no worker will want to work on a difficult task for very little money.
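
As a rough illustration of that rule of thumb, the sketch below derives a minimum reward from a target hourly wage and an estimate of how long one assignment takes. The function name is hypothetical, and rounding up to the next cent is an assumption made for this example.

```python
import math


def min_reward_per_assignment(target_hourly_wage_usd: float,
                              seconds_per_assignment: float) -> float:
    """Smallest reward in USD, rounded up to the cent, that reaches the target wage."""
    exact = target_hourly_wage_usd * seconds_per_assignment / 3600
    # The small epsilon keeps floating-point noise from pushing an exact
    # amount (e.g. 30 cents) up to the next cent.
    return math.ceil(exact * 100 - 1e-9) / 100


# ~USD 13 per hour and 20 seconds per assignment, as estimated earlier.
print(min_reward_per_assignment(13, 20))
```

Treat the result as a floor: the time estimate should include reading the instructions, and harder tasks deserve more.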

Number of assignments per HIT

The number of assignments per HIT (also known as plurality) indicates how many different Turkers will work on each HIT. So, if plurality is greater than 1, you will get more than one response for every text you submit. Plural batches of data will cost you more money, but they make it easier to evaluate the performance of workers, particularly when you want to measure Interannotator Agreement (IAA) for a certain task or when you have doubts about the quality of the responses you will receive (for example, if the task is difficult). More on that below.

If you can afford to have more than one Turker work on each HIT, you might want to do so. That way, you will be able to evaluate Turker performance more easily and you will also have a second (or an n-th) opinion on each response. If n is odd and the task has two possible labels, a majority vote will always break the tie when Turkers disagree. So, when you upload your data, bear in mind that you will have to pay the assignment reward n times for every text in your file (where n is the plurality of the HIT).
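
To see how plurality multiplies the bill, here is a minimal sketch that works in whole cents to avoid floating-point surprises. The function name is made up for this example, and platform fees are deliberately left out, so the real total will be higher.

```python
def batch_reward_cents(num_texts: int, reward_cents: int, plurality: int) -> int:
    """Total rewards for a batch: every text is paid for `plurality` times."""
    if min(num_texts, reward_cents, plurality) < 0:
        raise ValueError("all arguments must be non-negative")
    return num_texts * reward_cents * plurality


# 1,000 texts at 8 cents each, annotated by one Turker versus three.
single = batch_reward_cents(1000, 8, 1)
plural = batch_reward_cents(1000, 8, 3)
print(single / 100, plural / 100)
```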

Pay-or-pray dilemma

This leads to what we have come to call the “pay-or-pray” dilemma. For a given budget, you can either pay several Turkers to work on each of fewer HITs or pay a single Turker to work on each of more HITs.

In the first case, you know there will be some consensus on the results and you are more likely to get accurate data. In the second case, you will get a lot of data which might not be accurate. If you are on a limited budget, you will have to strike the right balance between quality and cost.

Time allotted per assignment

The time allotted per assignment property sets the maximum time Turkers have to finish an assignment once they have accepted it. Make sure you give workers plenty of time to do the assignments, well above your own estimate of how long they should take.

HIT expiration

HIT expiration indicates how long your HIT will be visible to Turkers. Make sure you leave yourself plenty of time to review the results. TIP: If you have a deadline for the completion of the task, set the expiration date a few days before the deadline.

Autoapprove and pay workers

The “Autoapprove and pay workers in” property indicates how much time you will have to review the results and decide whether to approve or reject the assignments. Once that time has gone by, the assignments will be approved automatically and your payments will be processed.

This parameter might affect your reputation among Turkers (also known as your TurkOpticon or TO). More on that below.

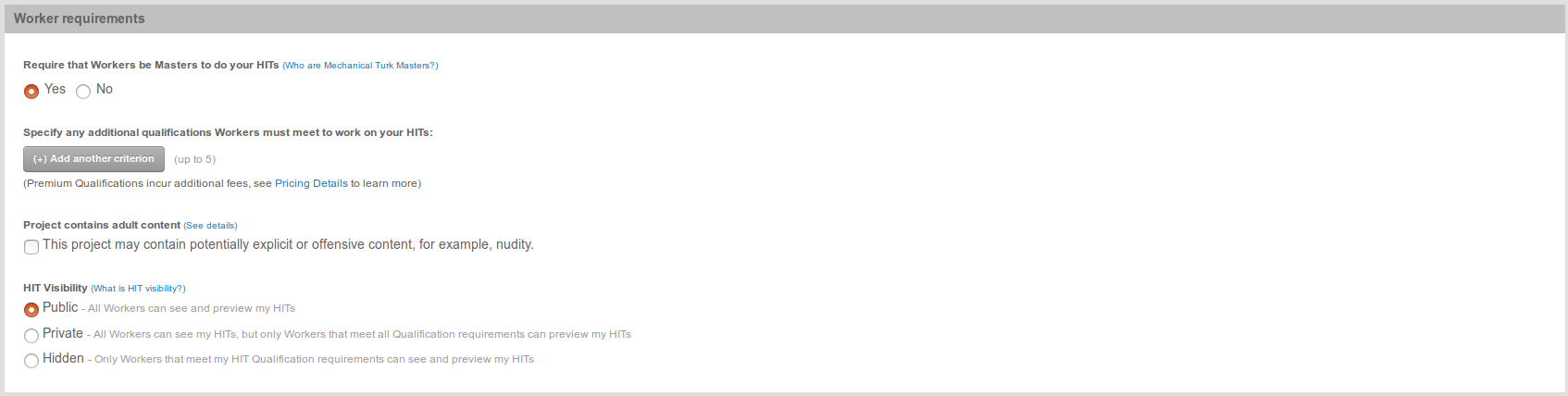

Worker requirements

In the worker requirements section you can choose the qualifications Turkers have to meet in order to work on your HITs. Depending on the task at hand, you might want Turkers to meet one or more of the following qualifications:

- Being MTurk Masters (i.e.: workers that, according to MTurk, have proved excellent across a large percentage of the HITs they have worked on);

- Having a certain HIT approval rate;

- Having a certain number of HITs approved;

- Living in a given location;

- Speaking a given language;

- and more...

These qualifications are divided into system qualifications and premium qualifications. The asterisks mark system qualifications (i.e., qualifications you can require for free). Premium qualifications are not free of charge. You can get more information about their pricing here.

Adult content and HIT Visibility

You will also have to specify if your project contains adult content and set your HIT visibility. If HIT visibility is set to public, all Turkers will be able to see and preview your HITs. You can also set HIT visibility to private so that all Turkers will be able to see your HITs, but only those who meet the requirements you have set will be able to preview them. Alternatively, you can set HIT visibility to hidden so that your HITs will only be visible to Turkers who meet the requirements you have set.

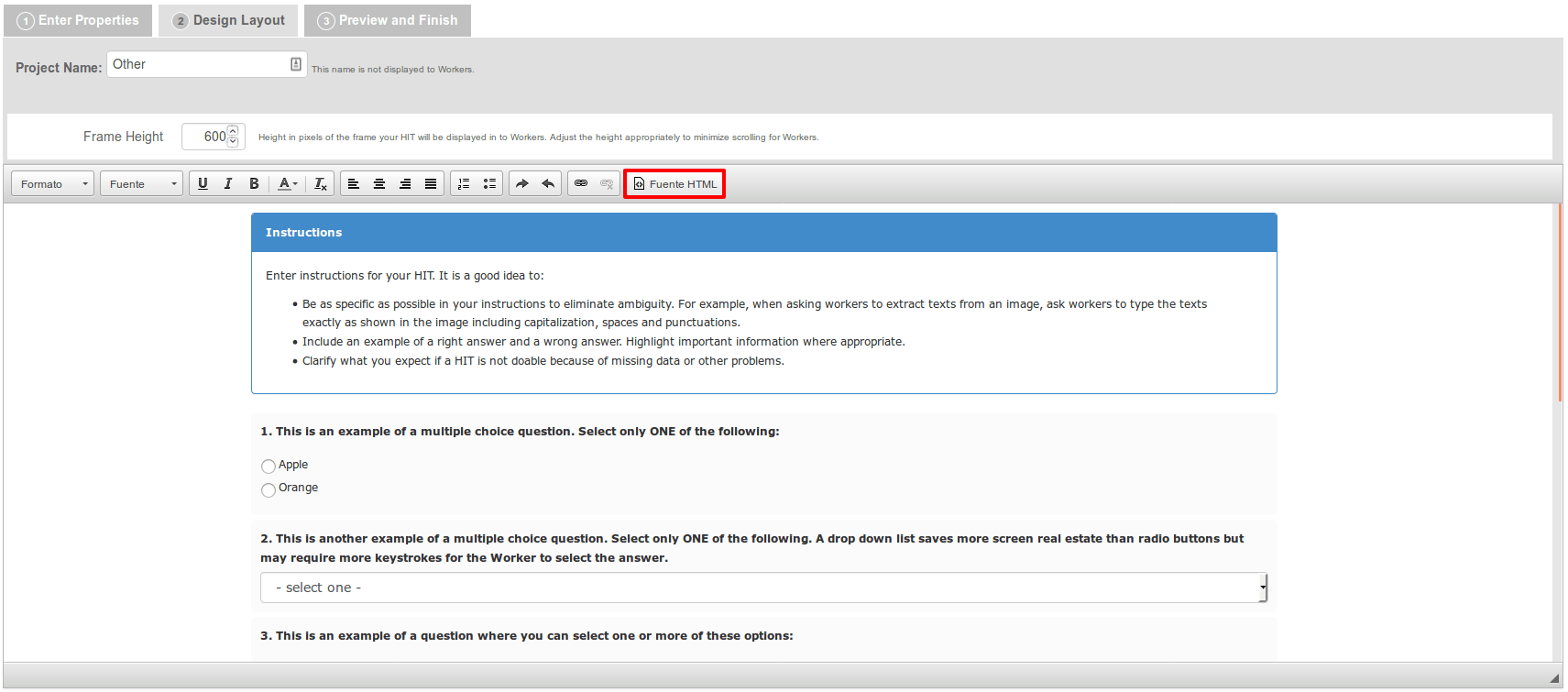

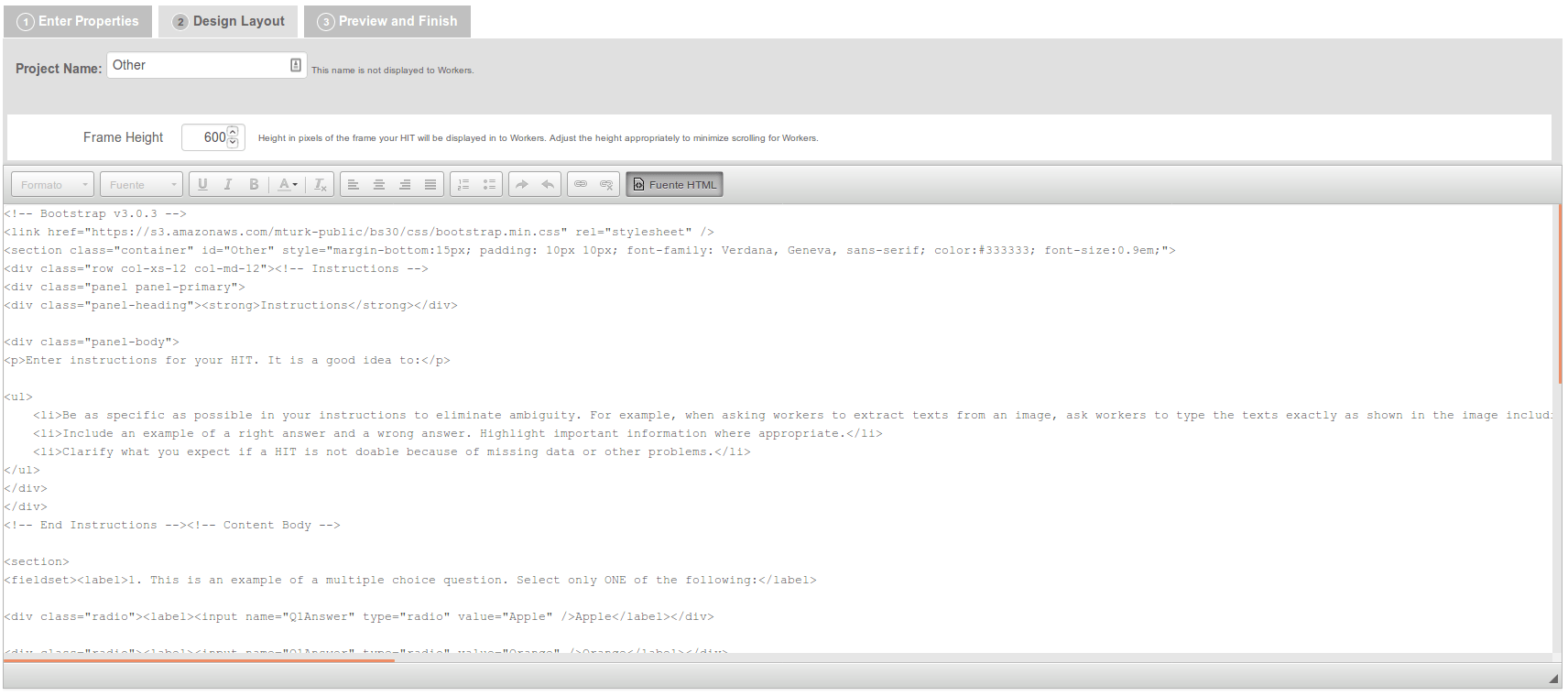

Create or change the HTML design of your project

Once you have set all of the properties of your project, you can start working on its design.

Mechanical Turk provides you with a user-friendly HTML editor to help you create a layout for your projects. However, if you want to manually change the HTML of your design, you can click the HTML source button and edit the layout yourself. See the images below:

Design Layout Tab

If you choose to modify the HTML source yourself, this information might come in handy. Pay particular attention to variable names (which will be replaced with data from your uploads) since you will have to use them to create your input data CSV file.

HTML Source

Now that you know the basics, let’s delve deeper into best practices for the design of MTurk projects.

Best practices for the design of Mechanical Turk projects

This section focuses on the basic DOs and DON’Ts of the design of MTurk projects. After you have read this section of the post, you can read the Amazon Mechanical Turk Requester’s best practices guide to learn even more tips on design.

Create Initial Qualifications

Before you start submitting batches, it is a good idea to create an initial set of qualifications. This can be done under Manage → Qualification Types. Qualifications are “tags” you grant workers based on their performance on the HITs you publish.

Qualifications can be assigned to Turkers under Manage → Workers by clicking on the Worker’s ID and then clicking the Assign Qualification button. They can also be granted by uploading a worker CSV file after you have reviewed the results of a task.

At first, the qualifications list will be empty, but once you have evaluated the results for some HITs and assigned qualifications to workers, you will be able to use that list to choose who will be allowed to work on your HITs.

This could prove useful once you have detected high achievers for your tasks since you will get the chance to submit work to only those Turkers who are most likely to return accurate data.

Create Simple Tasks

Tasks should be kept as simple as possible so that every worker understands what the task is about.

According to the Requester Best Practices Guide by Amazon Mechanical Turk, instructions should be:

- simple;

- specific; and

- easy to read.

Also, redundant questions should be avoided and plenty of examples should be included.

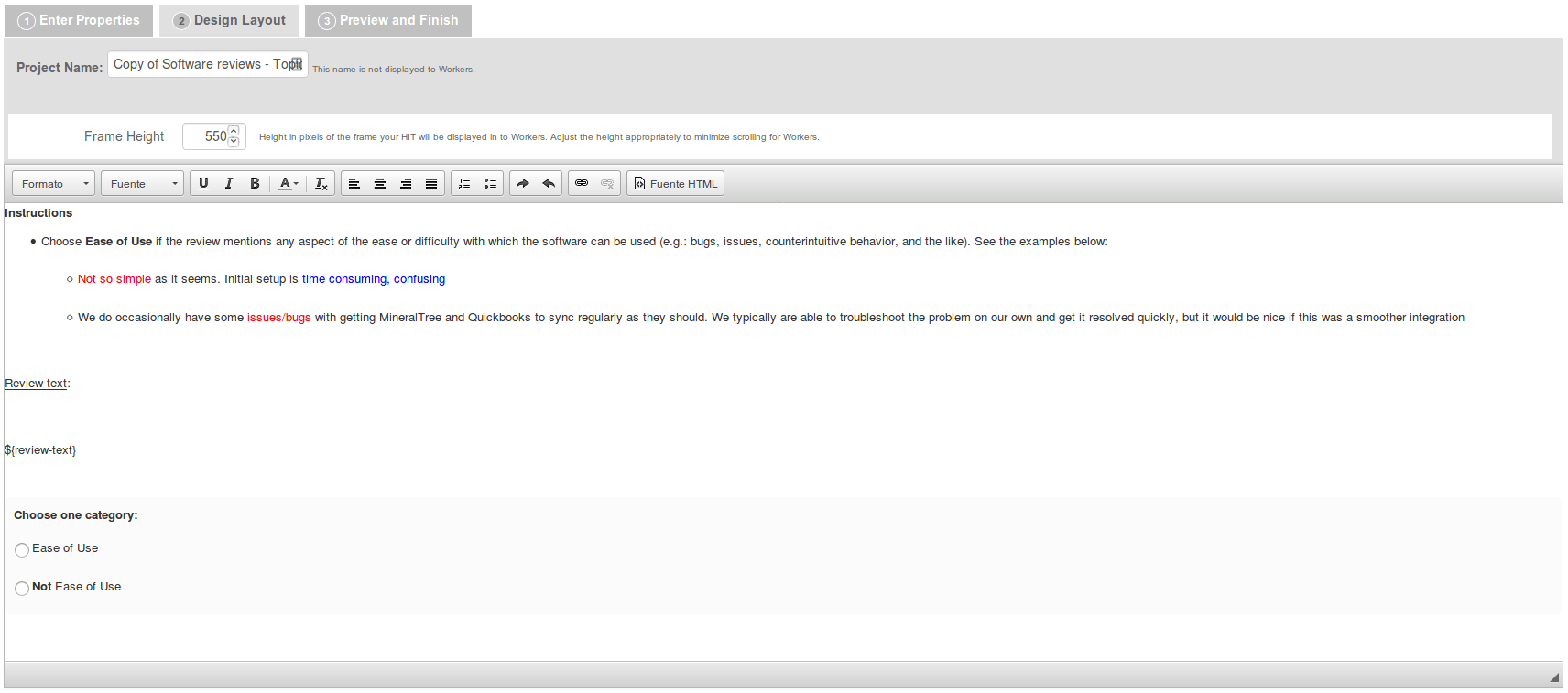

You might want to include at least three examples of HITs that have already been classified. You might also find it useful to highlight relevant information in the examples so that workers can complete the task more easily.

Here’s an example of a set of simple instructions for a categorization task:

Instructions Kept Simple

If needed, requesters should also explain the format responses have to comply with as well as the tools and methods workers are supposed to use in order to complete the task. All of that information should be included in the instructions.

Take Time to Create the Instructions for the Task

The most important component of every HIT is its instructions. The more straightforward the instructions are, the better the data you get is likely to be. Here are a couple of tips to help you create good instructions for your tasks.

Collapsed vs. Visible Instructions

Instructions can be either collapsed or visible at all times. When you submit multiple batches of data with different instructions simultaneously, instructions should be visible at all times.

This is particularly useful given that workers might work on multiple assignments at a time and could be misled into thinking that the instructions for all the assignments they are working on are the same. If that happens, the quality of the work is likely to be poor and you will end up paying for low-quality work.

We have learned this the hard way. Not too long ago, we submitted a couple of HITs with data from an NPS survey and collapsed the instructions so that Turkers would not have to read the instructions for the task every time. Bad idea. When we evaluated the quality of the responses, it was pretty clear we were not going to be able to use that data for our project.

We had no idea whatsoever where the problem was. Time and money seemed to have been wasted. After some rejections, blocks, unblocks, and a great deal of interaction with both friendly and upset Turkers who helped us figure out what the problem was, it became clear that collapsing different instructions had caused the problem.

However, if you submit multiple batches with the same instructions, you can choose to collapse them so that workers do not have to spend their time reading the instructions for every assignment.

Inform Turkers about Evaluation Policies

If you are planning on evaluating the results of your HITs manually (which we believe might be a good practice), you should make it clear to Turkers so that they know what to expect.

This way, if Turkers do not like the evaluation method you will use, they will be able to decide not to work on your tasks.

Besides, if you happen to reject results later (see below), Turkers will know the criteria you have used for the evaluation of the HITs and will be less likely to get upset.

Publishing Batches of Data

Once you have finished the design of your project, it’s time to upload data to Mechanical Turk.

Batches of data should be uploaded in a comma-separated values (CSV) file. Depending on the task at hand, CSV files will have to comply with a format given by MTurk. Here’s more information on format restrictions for the CSV file.

For annotation tasks, the only format restriction is that the file you upload must have a column with the header “review-text” (which is the default variable name for MTurk text review projects), under which you place each of the texts you are going to submit in a separate row.
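
A minimal sketch of building such a file with Python's standard csv module follows. The helper name is hypothetical; the “review-text” header is the one mentioned above and should be changed if your design uses a different variable name.

```python
import csv
import io


def build_input_csv(texts, column="review-text"):
    """Return CSV content with one header row and one text per row."""
    buffer = io.StringIO(newline="")
    writer = csv.writer(buffer)
    writer.writerow([column])
    for text in texts:
        # csv.writer quotes commas, quotes, and line breaks inside a text.
        writer.writerow([text])
    return buffer.getvalue()


sample = build_input_csv(["Great product, but pricey.", 'They said "soon".'])
print(sample)
```

To write the result to disk, open the target file with `newline=""` and UTF-8 encoding so the line endings and any non-ASCII characters survive intact.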

Splitting and cleaning your dataset

Unless you have to upload all of the data for your tasks at once (maybe due to deadlines, budget issues, or the like), it is strongly recommended that you split your dataset before uploading HITs. You might want to split the texts randomly, remove very short texts from the dataset (which might be useless for topic classification purposes, for example), and filter out noisy data.
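
One possible way to do this is sketched below. The five-word minimum, the batch size, and the function name are arbitrary choices for illustration, not recommendations from MTurk.

```python
import random


def clean_and_split(texts, min_words=5, batch_size=100, seed=0):
    """Drop very short texts, shuffle the rest, and cut them into batches."""
    kept = [t.strip() for t in texts if len(t.split()) >= min_words]
    rng = random.Random(seed)  # fixed seed so the split is reproducible
    rng.shuffle(kept)
    return [kept[i:i + batch_size] for i in range(0, len(kept), batch_size)]


texts = ["Too short"] + [f"This is sample text number {i}" for i in range(250)]
batches = clean_and_split(texts)
print([len(batch) for batch in batches])
```

Starting with one small batch lets you check the instructions and the quality of the responses before paying for the rest.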

Include Gold Standard Items

In order to avoid the pay-or-pray dilemma (see above), you might want to include gold standard items. Gold Standard Items are texts you have already annotated yourself which you will upload to test Turker performance.

Once you have annotated those texts, you might want to create a batch with them and submit it for annotation. When Turkers have finished their work, you will determine which Turkers have done the task well and grant them your custom qualifications.

In the future, you might want to address new submissions to those Turkers who have been granted certain qualifications only. Also, according to the Requester Best Practices Guide, you should include gold standard items in all of your batches in order to measure the performance of workers over time.

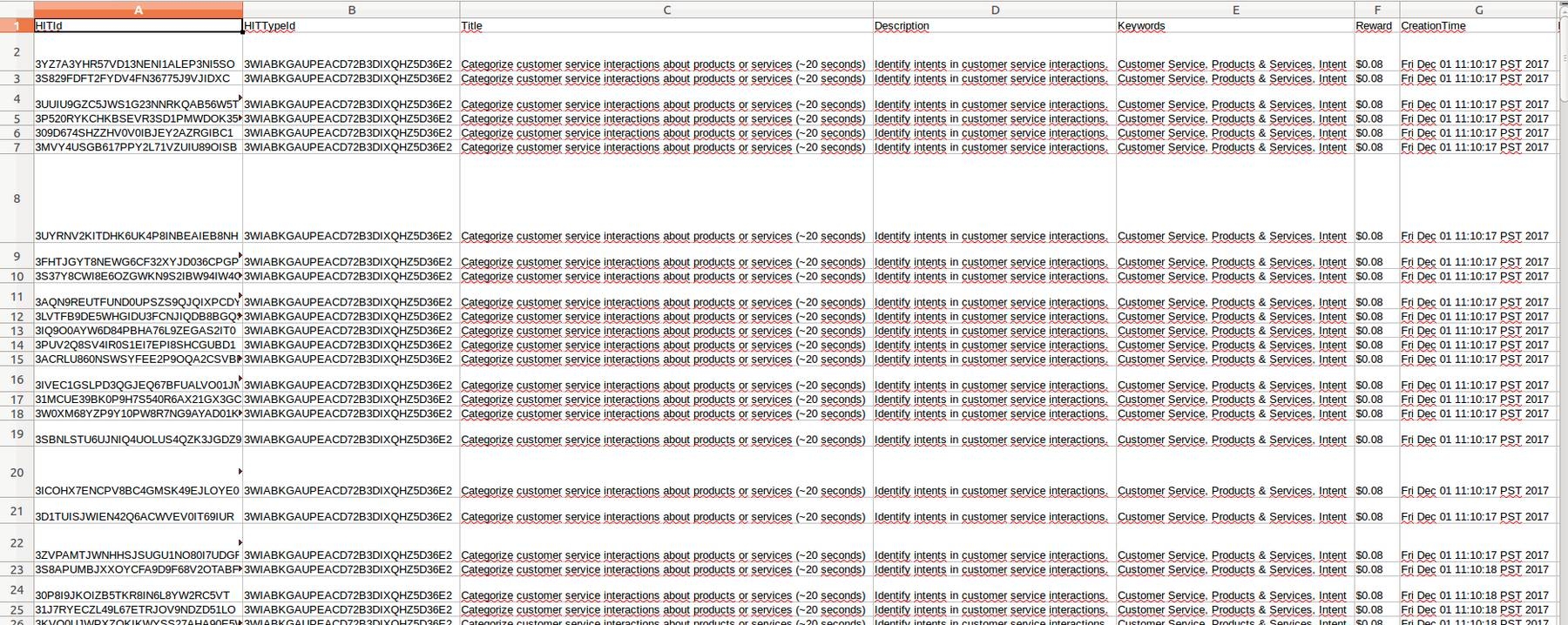

Evaluating Results

Once Turkers have finished working on your HITs, you will be able to download the results in CSV format (Manage → Results → Download CSV). A result CSV file looks like this:

Screenshot of Result CSV File

Besides the responses to all of the assignments, the result CSV file contains lots of information about the HIT you have submitted and the Turkers who have worked on it, for example, the time of completion of the HIT, the Turkers’ IDs in MTurk, the Turkers’ Approval Rates, and the Turkers’ qualifications.

You might want to use some of this information to evaluate Turker performance and the quality of the responses you have received.

Evaluating Turker Performance: HIT Approval or Rejection, Qualifications and Blocks

Gold Standard Items and MTurk’s UI

If you have created gold standard items and you have submitted them for annotation, then you will be able to compare the responses that you get with your own annotations.

You should determine which Turkers have been accurate in order to grant them the custom qualifications you created before submitting batches of data to MTurk. To do so, go to Manage → Workers → Download Workers Results and download your Worker CSV File.

You might want to calculate the ratio of correct responses for each Turker and grant them a certain qualification if the ratio is greater than a threshold. However, workers will often annotate just a few texts from your data, which makes it difficult to decide whether a high ratio of correct responses is enough to grant them a qualification.
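
The sketch below shows one way to compute that ratio while also requiring a minimum number of gold standard items per worker. It assumes you have already extracted (worker ID, item ID, label) triples from your results; the function names and both thresholds are illustrative.

```python
from collections import defaultdict


def worker_accuracy(responses, gold):
    """Map each worker to (ratio of correct gold answers, gold items answered)."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for worker_id, item_id, label in responses:
        if item_id in gold:  # only gold standard items are scored
            total[worker_id] += 1
            correct[worker_id] += int(label == gold[item_id])
    return {w: (correct[w] / total[w], total[w]) for w in total}


def qualified_workers(responses, gold, min_accuracy=0.8, min_items=10):
    """Workers who are accurate enough on enough gold standard items."""
    stats = worker_accuracy(responses, gold)
    return sorted(w for w, (acc, n) in stats.items()
                  if acc >= min_accuracy and n >= min_items)


gold = {"t1": "price", "t2": "features"}
responses = [("W1", "t1", "price"), ("W1", "t2", "features"),
             ("W2", "t1", "price"), ("W2", "t2", "price"),
             ("W3", "t1", "price")]
print(qualified_workers(responses, gold, min_items=2))
```

In this toy data W3 has a perfect ratio but answered a single gold item, so the minimum-items rule keeps them out until there is more evidence.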

To grant a qualification, you can go to Manage → Workers, click a worker’s ID and click Assign Qualification Type.

Submitting a small number of gold standard items for all of the batches of data you submit to MTurk can help you track Turker performance over time in order to revoke or grant your custom qualifications. This might be a bit more expensive, but unless you want to code (see below), it might be worth doing.

Accepting and rejecting a Turker’s work

Once you have evaluated a Turker’s performance, you can choose to accept or reject their work.

In order to accept a Turker’s work, you can type an X under the Accept header of the row containing the annotation in the CSV result file and upload it to MTurk. To reject work, you can type some feedback under the Reject header of the row containing the annotation in the CSV result file.

Here’s a word on ethics: if you do reject work (which is not a good idea), do not use that data in your projects. Using data from rejected work might be really bad for both you and Turkers and it is, of course, unethical.

Blocking a Turker

You will also have the chance to block or unblock workers by filling out the last two columns of the CSV. Blocking workers means that they will not be allowed to work on your HITs in the future.

That said, we should note that MTurk might block a Turker’s account if too many blocks are detected, so try using qualifications instead of blocks. This way you won’t do any harm to Turkers and you will still be able to filter out low-achievers.

Gold Standard Items and AWS API

Another way of evaluating the performance of Turkers is to submit your texts and gold standard items in one batch and require that Turkers annotate the gold standard items and score well on them before working on other assignments.

This way, you can make sure that Turkers who work on your assignments will do well. This can be done from the API by setting assignment review policies. You can access the API reference here. Unfortunately, to the best of our understanding, requesters cannot do this from the UI, so they will have to code in order to perform this kind of assessment of Turker performance.
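
As a hedged illustration, the sketch below builds the kind of dictionary that could be passed as the AssignmentReviewPolicy argument when creating a HIT through the API (for example with boto3's `create_hit`). The policy name and parameter keys are written from our reading of the MTurk API reference and should be checked against the current documentation; the function name, question IDs, and threshold are made up for this example.

```python
def build_known_answer_policy(answer_key, approve_at_least=80):
    """Build a review policy that scores answers to gold standard questions.

    answer_key maps a question identifier to its known correct answer.
    approve_at_least is a percentage from 0 to 100.
    """
    if not 0 <= approve_at_least <= 100:
        raise ValueError("approve_at_least must be between 0 and 100")
    return {
        # Assumption: policy name as listed in the MTurk API reference.
        "PolicyName": "ScoreMyKnownAnswers/2011-09-01",
        "Parameters": [
            {
                "Key": "AnswerKey",
                "MapEntries": [
                    {"Key": question_id, "Values": [answer]}
                    for question_id, answer in sorted(answer_key.items())
                ],
            },
            {
                "Key": "ApproveIfKnownAnswerScoreIsAtLeast",
                "Values": [str(approve_at_least)],
            },
        ],
    }


policy = build_known_answer_policy({"gold-1": "price", "gold-2": "features"})
print(policy["PolicyName"], len(policy["Parameters"]))
```

The sketch only approves work automatically above a score and leaves everything else for manual review, in line with the advice in this post to keep rejections to a minimum.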

To Keep or Not to Keep: Evaluating the Quality of Responses

Measuring Interannotator Agreement (IAA) in Plural Batches

If you have submitted plural batches, i.e. batches for which two or more Turkers are allowed to work on any given sample, one of the first things you will have to do once you have received the responses to your submission is assess how good or bad Interannotator Agreement (also known as Interrater Reliability or Concordance) was.
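
This post does not prescribe a particular agreement statistic. As one common option, the sketch below computes Cohen's kappa for two annotators who labelled the same items with one label each; with more than two annotators per item, statistics such as Fleiss' kappa or Krippendorff's alpha are usually preferred.

```python
from collections import Counter


def cohen_kappa(labels_a, labels_b):
    """Cohen's kappa for two annotators who labelled the same items in order."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("both annotators must label the same non-empty item list")
    n = len(labels_a)
    # Share of items on which the two annotators gave the same label.
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    freq_a = Counter(labels_a)
    freq_b = Counter(labels_b)
    # Agreement expected by chance, given each annotator's label frequencies.
    expected = sum(freq_a[label] * freq_b[label] for label in freq_a) / (n * n)
    if expected == 1:
        return 1.0  # both annotators used one identical label throughout
    return (observed - expected) / (1 - expected)


turker_1 = ["yes", "yes", "no", "no"]
turker_2 = ["yes", "no", "no", "no"]
print(cohen_kappa(turker_1, turker_2))
```

Here the two Turkers agree on three of four items (0.75), but half of that agreement would be expected by chance, so kappa comes out at 0.5.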

Now, what if IAA between Turkers is low? This would mean that Turkers had problems doing your assignments regardless of the responses they have provided. Of course, some of them might have nailed it, but maybe you would want to check for problems in the submission (e.g.: in the design or the instructions) since design problems might lead to poor IAA. If no problems are detected in the submission, then Turkers might have found the task difficult and that would be it. However, if you detect problems in the submission, you will have to fix them and submit your batches again in order to get quality data.

You will also have to compare the responses you received against the gold standard items. And what if IAA between workers is high, but their performance on the gold standard items is low? This is likely to mean that there were problems with the submission. A high IAA between workers means that they have understood the task in the same way and have responded very similarly; however, a low performance on the gold standard items means that their responses do not match your expectations. In that case, you will probably have to redesign the task or reconsider your set of possible responses.
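
One simple way to make that comparison is to take the majority label for each gold standard item and check how often it matches your own annotation. The sketch below assumes one label per response; the names are illustrative, and ties are counted as misses.

```python
from collections import Counter


def majority_label(labels):
    """Most frequent label, or None when there is no label or a tie for first."""
    counts = Counter(labels).most_common()
    if not counts:
        return None
    if len(counts) > 1 and counts[0][1] == counts[1][1]:
        return None
    return counts[0][0]


def consensus_accuracy(responses_by_item, gold):
    """Share of gold standard items whose majority label matches the gold label."""
    scored = [item for item in gold if item in responses_by_item]
    if not scored:
        raise ValueError("no gold standard items were annotated")
    hits = sum(majority_label(responses_by_item[item]) == gold[item]
               for item in scored)
    return hits / len(scored)


responses_by_item = {"t1": ["a", "a", "b"], "t2": ["a", "b", "b"], "t3": ["a", "b"]}
gold = {"t1": "a", "t2": "a", "t3": "a"}
print(consensus_accuracy(responses_by_item, gold))
```

A high agreement score combined with a low consensus accuracy is the pattern described above: Turkers agree with each other but not with you.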

Interacting with Turkers

Turkers interact a lot among themselves and sometimes they might seek interaction with you.

Forums

There are a lot of forums where Turkers interact (e.g., MTurk Crowd, Turker Nation, or Reddit) and talk about requesters, the HITs they have worked on, and a lot more. Many forums also allow requesters to sign up so that they can interact with workers. You might also receive emails from workers whenever they have doubts or complaints about your HITs.

If workers happen to contact you via email or in forums, take some time to reply. According to the Requester Best Practices Guide, this should ideally make workers happy and might lead to better HITs, better data, and a better TurkOpticon.

TurkOpticon

A requester’s TurkOpticon (also known as TO) is a score given to you as a requester based on reviews by Turkers. You can find your TO here. A requester’s TO has 4 components:

- Fairness: how fair you are when accepting or rejecting work;

- Promptness: how fast HITs get approved and workers get paid;

- Generosity: how well you have paid workers for their work;

- Communicativity: how responsive a requester has been to workers’ complaints, concerns, and the like.

Basically, your TO reflects how good a requester you are from the workers’ stance. Knowing about a requester’s TO before working on their HITs is great for Turkers. So, making sure your TO is as good as possible might give you greater chances of success with MTurk.

For example, workers whose HITs have been rejected -and therefore don’t get paid- are likely to get upset -even if you have provided them with information about the evaluation criteria for the responses to your HITs. This will probably lead to their posting negative comments about you and your HITs on forums, which might make your TO drop.

So, although Mechanical Turk says it is OK for requesters to reject work, it might not be a good idea. Keeping a low rejection rate is likely to help you get a high TO.

If your TO gets too low, the chances are that workers will not want to work on the HITs you publish. Therefore, you might end up having to wait longer to obtain results for your tasks.

Final Words

Mechanical Turk is a great resource to obtain tagged data really quickly. You can get thousands of samples tagged within minutes. Besides, if you take time to define your task well and create simple and easy-to-read instructions, the quality of the data you get can be really good.

It’s up to you to set the standards workers and responses will have to meet for you to consider responses good enough for your project. So, you will have to spend some time evaluating both the quality of the responses you get, in order to decide whether to keep and use them, and the quality of the tasks you have submitted. Here’s a lesson we’ve learned the hard way: evaluating the quality of the training data you get from crowdsourcing services is just as important as any other step in the process of building good machine learning models. Unless you can tag your training data yourself, there is no escaping taking some time to evaluate the quality of the training data you will use.

Also, remember that interacting with Turkers -for example, by participating in Turker communities- can make the process of evaluating the quality of your tasks easier. Turkers can help you figure out problems with your instructions or submissions, which might simplify the process of evaluating the quality of your data.

There are other providers of human-annotated data you might want to submit your data to, such as ScaleAPI, but Mechanical Turk is likely to be your best bet if you need human-annotated data at affordable rates right away.

Hernán Correa

January 18th, 2018