Best APIs Online for Natural Language Processing in 2022

Natural language processing (NLP) APIs analyze and classify text far faster than people can, and often more consistently.

When you're ready to get started with NLP, APIs make it much easier to integrate natural language processing software into your existing systems and tools.

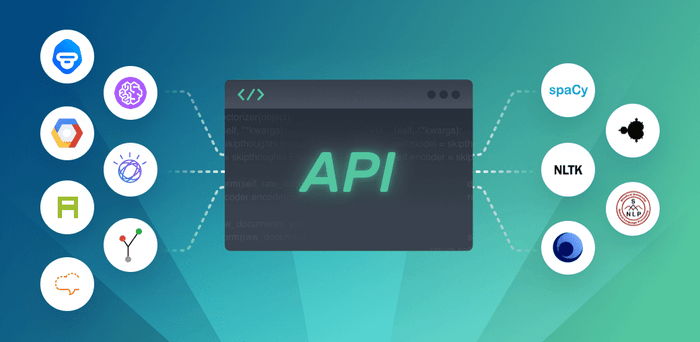

There are both open source and SaaS options depending on your needs, budget, and timeframe. Open source APIs are free and can be widely customized, but are best for companies with on-staff developers and machine learning experience.

SaaS APIs, on the other hand, can be implemented quickly, often with just a few lines of code. With tools like MonkeyLearn, you can get started right away.

The Best SaaS and Open Source APIs for NLP

Best SaaS APIs for NLP

MonkeyLearn

Best for: Companies of any size that want easy API setup to access powerful pre-made and customizable NLP tools.

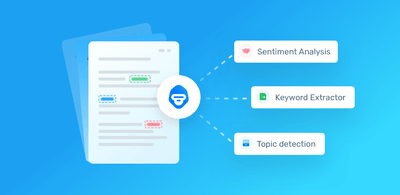

MonkeyLearn's cloud solution is intuitive and user-friendly, and it handles all manner of text analysis, from entity extraction to sentiment analysis. With the MonkeyLearn API (available in Python, PHP, Ruby, JavaScript, and Java), you can set up a number of powerful NLP tools in no time, with just a few lines of code. The APIs also make it easy to connect to apps like Google Sheets, Zapier, Zendesk, and more.

You can try out MonkeyLearn’s machine learning models for free to see how these NLP tools work.

And when you need to train your NLP models with industry-specific data and language, there are simple tutorials to set them up in just a few minutes.
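To give a sense of what "a few lines of code" can look like, here is a minimal sketch that builds a classification request using only Python's standard library. It assumes the v3 REST layout (`/v3/classifiers/<model_id>/classify/` with a `Token` authorization header); the key and the model ID below are placeholders, and `build_classify_request` is a hypothetical helper name, so check the current API documentation before relying on it.

```python
import json
import urllib.request

# Placeholders (assumptions): use your own API key and a model ID from your account.
API_KEY = "YOUR_API_KEY"
MODEL_ID = "cl_XXXXXXXX"


def build_classify_request(texts, model_id=MODEL_ID, api_key=API_KEY):
    """Build (but do not send) a POST request that classifies a batch of texts."""
    url = f"https://api.monkeylearn.com/v3/classifiers/{model_id}/classify/"
    body = json.dumps({"data": list(texts)}).encode("utf-8")
    return urllib.request.Request(
        url,
        data=body,
        headers={
            "Authorization": f"Token {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


request = build_classify_request(["The support team was fantastic!"])

# Sending it requires a valid key and network access:
# with urllib.request.urlopen(request) as response:
#     print(json.load(response))
```

The request is built separately from the network call so that the payload can be inspected or logged before anything is sent.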

Google Cloud NLP

Best for: Companies that want a managed service and easy integration with Google Cloud Storage.

Google Cloud NLP offers pre-trained Natural Language APIs that bring the following features into your existing systems: sentiment analysis, entity analysis, entity sentiment analysis, content classification, and syntax analysis.

The APIs are designed for easy integration with Google apps and Google Cloud Storage – perfect for companies that already use Google systems heavily. And their AutoML allows users with little computer science experience to customize tools.
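As a rough illustration, the five features listed above correspond to five methods in the v1 REST interface. The sketch below only builds the URL and JSON body for each one. The short feature labels (`"sentiment"`, `"entities"`, and so on) and the `build_request` helper are names invented for this example, and authentication is left out entirely.

```python
import json

BASE_URL = "https://language.googleapis.com/v1/documents"

# Feature labels are this example's own; the values are the v1 REST method names.
METHODS = {
    "sentiment": "analyzeSentiment",
    "entities": "analyzeEntities",
    "entity_sentiment": "analyzeEntitySentiment",
    "classification": "classifyText",
    "syntax": "analyzeSyntax",
}


def build_request(feature, text):
    """Return (url, json_body) for one feature; sending is left to the caller."""
    method = METHODS[feature]
    payload = {"document": {"type": "PLAIN_TEXT", "content": text}}
    if feature != "classification":
        # classifyText takes only the document; the other methods accept encodingType.
        payload["encodingType"] = "UTF8"
    return f"{BASE_URL}:{method}", json.dumps(payload)


url, body = build_request("sentiment", "I love this product.")
print(url)
print(body)
```

In practice most teams would use Google's official client library rather than raw HTTP, but seeing the payload makes it clear how little each call needs.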

AYLIEN

Best for: Companies that want to incorporate NLP into their proprietary apps and services.

AYLIEN has business solutions “built by developers, for developers,” as they say, designed for use within a company’s app or as part of their service.

Their APIs, available in seven languages, offer social media monitoring, brand analysis, document processing, and content tagging, among others, that use NLP to automate processes and save time. And their thorough documentation and online demos take the pain out of embedding their APIs.

IBM Watson

Best for: Companies that want to create custom NLP models (sometimes with small data sets) or process text in multiple languages.

With the Natural Language Classifier API from IBM Watson, developers can create highly accurate custom labels and classifiers with small data sets and little machine learning experience.

The APIs are available in six coding languages and can perform NLP analysis in 13 human languages. They allow you to build, train, and manage your analysis with just a little code, and you can evaluate your results to continually improve your confidence scores.

MeaningCloud

Best for: Companies that want to classify text to the specific taxonomies of their field or industry.

MeaningCloud’s Text Classification API analyzes individual phrases, then evaluates how phrases relate to each other to assign a “global polarity value.”

Their statistical classifiers use sample text documents for categorization, then rule-based classifiers help refine the output. Based on previous outputs, they can even categorize documents when no example texts are available.

Their API is pre-trained for taxonomies, like IPTC, IAB, ICD-10, and Eurovoc, and can be easily molded for other industries. Their test console allows you to simply choose an input and configuration and see the results immediately.

Best Open Source APIs for Natural Language Processing

All of the below are available in Python, the favored programming language for machine learning.

NLTK (Natural Language Toolkit)

Best for: Programmers that want a relatively easy start with NLP code.

NLTK is one of the most used open source platforms for programming with natural language data. The top of the website features “some simple things you can do with NLTK” with pretty short code to get you started. And there are a number of other places to find production-ready APIs to help you set up tools, like stemming and lemmatization, sentiment analysis, and named entity recognition.

It’s a great start to NLP, and resources like Natural Language Processing With Python, written by the creators of NLTK, can be extremely helpful. But it’s primarily used for research and education models, so it’s not the most practical for handling large amounts of data.
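For a taste of the stemming mentioned above, here is a small sketch that uses NLTK's `PorterStemmer` together with `wordpunct_tokenize`, a regex-based tokenizer that, as far as we know, needs no extra data download. The `stem_tokens` helper is a name made up for this example.

```python
from nltk.stem import PorterStemmer
from nltk.tokenize import wordpunct_tokenize

stemmer = PorterStemmer()


def stem_tokens(text):
    """Split text into tokens and reduce each alphabetic token to its stem."""
    tokens = wordpunct_tokenize(text)
    return [stemmer.stem(token) for token in tokens if token.isalpha()]


print(stem_tokens("running cats"))  # ['run', 'cat']
```

Stemming is a crude, rule-based reduction, so stems are not always real words. Lemmatization gives cleaner results but requires downloading NLTK's WordNet data first.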

SpaCy

Best for: Developers that want high-performance NLP analysis.

SpaCy is a faster, production-oriented alternative to NLTK that can handle huge amounts of data. It has become the open source tool of choice for professional-grade NLP.

The website offers a number of courses to help with setup, and the API is relatively easy to use for those with coding experience. It’s great for simple keyword extraction, building whole natural language understanding systems, or prepping text for deep learning.
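As a minimal sketch of the API, the example below creates a blank English pipeline, which contains only the tokenizer and so does not require downloading a trained model. The `tokenize` helper is a name chosen for this example. Tasks such as entity recognition or part-of-speech tagging need a trained pipeline instead.

```python
import spacy

# A blank pipeline: rule-based English tokenizer only, no trained components.
nlp = spacy.blank("en")


def tokenize(text):
    """Return the token strings spaCy produces for the given text."""
    return [token.text for token in nlp(text)]


print(tokenize("Hello, world!"))  # ['Hello', ',', 'world', '!']
```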

TextBlob

Best for: Developers that want a user-friendly interface and functionality.

Built on NLTK and Pattern, TextBlob offers APIs for a multitude of NLP tasks: part-of-speech tagging, noun phrase extraction, classification, and sentiment analysis, among others.

It’s a top choice among less-experienced coders for its clean and easy interface that still performs well, though it doesn’t go too far beyond the most common NLP tools.

Stanford CoreNLP

Best for: Researchers and developers who want to perform multi-level and multi-language NLP.

Stanford CoreNLP can run a tool pipeline on text with just a couple of lines of code, and lets you enable or disable tools by changing a single option. It also provides an annotator pipeline to include additional outside annotators.

Stanford CoreNLP is written in Java but has Python wrappers. They offer a CoreNLP API to get full performance and a Simple API “for users who do not need a lot of customization.”

CoreNLP works in seven human languages, so it can be great for language research, machine translation, and news analysis.

Gensim

Best for: Developers that primarily want to perform document comparison NLP tasks.

Gensim specializes in topic modeling, document indexing, and similarity retrieval, so if you want to do much beyond this, you’ll need to combine it with other libraries.

It does, however, perform very fast in Python thanks to its use of incremental online algorithms, data streaming, and low-level BLAS libraries.

Gensim is mostly used for document research, such as searching for similarities within hundreds of thousands of documents, and is common in medicine, insurance, patent research, and fraud detection.

Final Note

Natural language processing can save time and money and perform tasks automatically that you may have never thought possible.

And there is certainly a lot to take in from the world of NLP APIs. However, whether you’re an experienced coder or just want to copy and paste a few lines of code, there’s something available for every skill level.

Try out free NLP tools and APIs before you buy, then check out our various plans, from team to business.

Inés Roldós

May 27th, 2020