Sentiment Analysis APIs Benchmark

Sentiment analysis is a powerful example of how machine learning can help developers build better products with unique features. In short, sentiment analysis is the automated process of understanding if text written in a natural language (English, Spanish, etc.) is positive, neutral, or negative about a given subject. Nowadays, we have many instances where people express opinions and sentiment: tweets, comments, reviews, articles, chats, emails and more.

One popular example is Twitter, where real-time opinions from millions of users are expressed constantly. Using Twitter alone, you could learn things like:

- Are people talking positively or negatively about your brand?

- Are your followers liking your product and leaving positive feedback?

- Is your customer support efficient or is it being criticized?

- What are the best and worst aspects of your competition?

Companies use sentiment analysis on Twitter to discover insights about their products and services. For example, a hotel can process tweets from its followers or tweets that mention it, and automatically detect the opinion and sentiment of its guests toward particular aspects of the hotel, like location, service, cleanliness, sleep quality or room quality. You can take a look at this particular application in our previous post.

Sentiment Analysis Complexity

The thing is, sentiment analysis is damn hard. It's one of the most complex machine learning tasks out there. Things like sarcasm, poor spelling, lack of context, and the subtleties of sentiment make it difficult for a machine learning algorithm to understand the real sentiment behind a text expression.

But it's not only a difficult task for an algorithm; sentiment analysis is such a subjective task that even if you have 3 humans tagging 1,000 tweets with their respective sentiment (positive, neutral, negative), they will often disagree on many of those tweets.

For example: is the following tweet positive? Or is it something that could be labeled as neutral?

"We got my dad an iPhone 6 Father's Day and he doesn't know what he's going and it's so funny"

Human agreement on the sentiment of a piece of text, especially a tweet, may vary a lot because of the subjective nature of sentiment analysis. Most probably, people agree only around 60-70% of the time.
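One simple way to put a number on this kind of agreement is to count how often pairs of annotators pick the same tag. The sketch below is only an illustration: the function name and the four example annotations are invented, and the benchmark itself does not say how agreement was measured.

```python
from itertools import combinations


def pairwise_agreement(annotations):
    """Fraction of annotator pairs that chose the same tag, over all texts."""
    agree = 0
    total = 0
    for tags in annotations:
        # Compare every pair of annotators who tagged this text.
        for first, second in combinations(tags, 2):
            total += 1
            if first == second:
                agree += 1
    return agree / total if total else 0.0


# Invented example: three annotators tagging four tweets.
annotations = [
    ("positive", "positive", "positive"),
    ("positive", "neutral", "positive"),
    ("negative", "neutral", "positive"),
    ("neutral", "neutral", "negative"),
]
agreement = pairwise_agreement(annotations)
print(f"Pairwise agreement: {agreement:.2%}")
```

More robust statistics exist for this purpose, but raw pairwise agreement is enough to see how quickly disagreement adds up.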

Another complexity related to sentiment analysis is that the sentiment of expressions changes depending on the domain. Doing sentiment analysis on tweets that talk about products is not the same as doing it on tweets about politics or movies. For example, reviews about hotels may use very different expressions than reviews about consumer products. Moreover, certain expressions or key phrases may convey a positive sentiment about a particular aspect of a hotel (for example 'cheap price'), but the same expression could convey a neutral sentiment (or even a negative sentiment!) about other services or products. For example, for a luxury product, someone saying 'cheap' probably isn't a good thing.

Sentiment Analysis APIs

Incorporating this type of machine learning technology has historically been very complex, often requiring a Natural Language Processing (NLP) expert. Such experts are scarce, and most startups and SMBs do not have the resources to hire one.

Luckily, companies and developers that can't access a dedicated team of NLP and Machine Learning experts can access tools for automatic text processing and integrate them within their apps through APIs.

There are several ready-to-use sentiment analysis APIs out there that rely on different techniques, ranging from manually crafted rules to machine learning algorithms, to detect the polarity of a given text. These APIs make it really easy for any developer to incorporate and use NLP technologies with just a couple of lines of code.

API Benchmark

Using a sentiment analysis API that fits your needs and that’s accurate and fast enough for your specific use case is a key decision for any developer or company interested in integrating this type of automatic analysis. This is why we created the following benchmark where we:

- Collected 1734 tweets to create a dataset covering the following topics:

  - Celebrities: Obama, Mayweather, Kim Kardashian, Chris Evans, Tom Cruise.

  - Brands: Google, Apple, Tesla, Verizon.

  - Movies: Avengers, Star Wars, Star Trek.

  - Other topics: iPhone, Android.

- Manually tagged all tweets according to their sentiment (positive, neutral or negative). We used CrowdFlower for this task, a people-powered data enrichment platform where contributors manually tag each tweet (see the CrowdFlower Methodology section for details).

- Classified the tweets from the dataset using different sentiment analysis APIs. Each API provides an endpoint that receives a piece of text and returns the predicted sentiment (positive, neutral or negative).

- Compared the predictions made by the different APIs with the tags assigned by the human taggers.
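The classification step above can be sketched roughly as follows. This is a hedged illustration rather than the benchmark's actual source code: each API is represented by a plain Python function that takes a text and returns a tag, and the two stub classifiers and the three sample tweets are invented so that the example runs without network access. In a real run, each function would wrap a call to the corresponding API endpoint.

```python
from typing import Callable, Dict, List, Tuple

# A classifier is any function mapping a text to "positive", "neutral" or "negative".
Classifier = Callable[[str], str]


def run_benchmark(
    dataset: List[Tuple[str, str]],
    classifiers: Dict[str, Classifier],
) -> Dict[str, List[str]]:
    """Return, for each classifier name, its predicted tag for every tweet."""
    results = {}
    for name, classify in classifiers.items():
        results[name] = [classify(text) for text, _human_tag in dataset]
    return results


# Hypothetical stand-ins for real API clients.
def always_neutral(text: str) -> str:
    return "neutral"


def keyword_rules(text: str) -> str:
    lowered = text.lower()
    if "love" in lowered or "great" in lowered:
        return "positive"
    if "hate" in lowered or "awful" in lowered:
        return "negative"
    return "neutral"


# Invented (text, human tag) pairs.
dataset = [
    ("I love my new phone", "positive"),
    ("The flight was awful", "negative"),
    ("Watching the keynote now", "neutral"),
]
predictions = run_benchmark(
    dataset,
    {"always_neutral": always_neutral, "keyword_rules": keyword_rules},
)
print(predictions)
```

Keeping the classifiers behind a common function signature makes it easy to add or swap APIs without touching the comparison logic.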

Benchmark Results

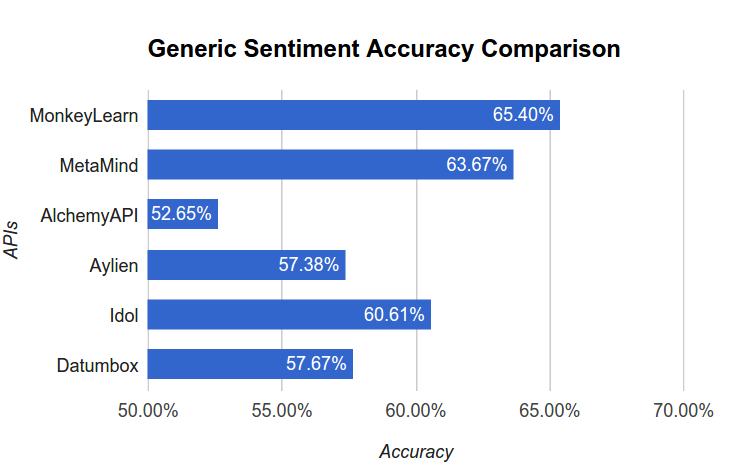

The accuracy levels we obtained for each API are the following:

The results show that MonkeyLearn, MetaMind and Idol returned the best results in terms of accuracy. Accuracy is the proportion of tweets that a particular API tagged with the correct sentiment. That is, in the previous results, MonkeyLearn assigned the correct sentiment to 65.40% of the 1734 tweets used for testing.
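As a minimal sketch of the accuracy calculation described above (the function name and the toy tags are illustrative and not taken from the benchmark's source code):

```python
def accuracy(human_tags, api_tags):
    """Proportion of texts where the API tag matches the human tag."""
    if len(human_tags) != len(api_tags):
        raise ValueError("Both tag lists must have the same length")
    if not human_tags:
        return 0.0
    correct = sum(1 for human, api in zip(human_tags, api_tags) if human == api)
    return correct / len(human_tags)


# Invented tags for five tweets.
human = ["positive", "neutral", "negative", "neutral", "positive"]
api = ["positive", "neutral", "neutral", "neutral", "negative"]
score = accuracy(human, api)
print(f"Accuracy: {score:.2%}")
```

For scale, an accuracy of 65.40% on 1734 tweets corresponds to roughly 1,134 tweets tagged correctly.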

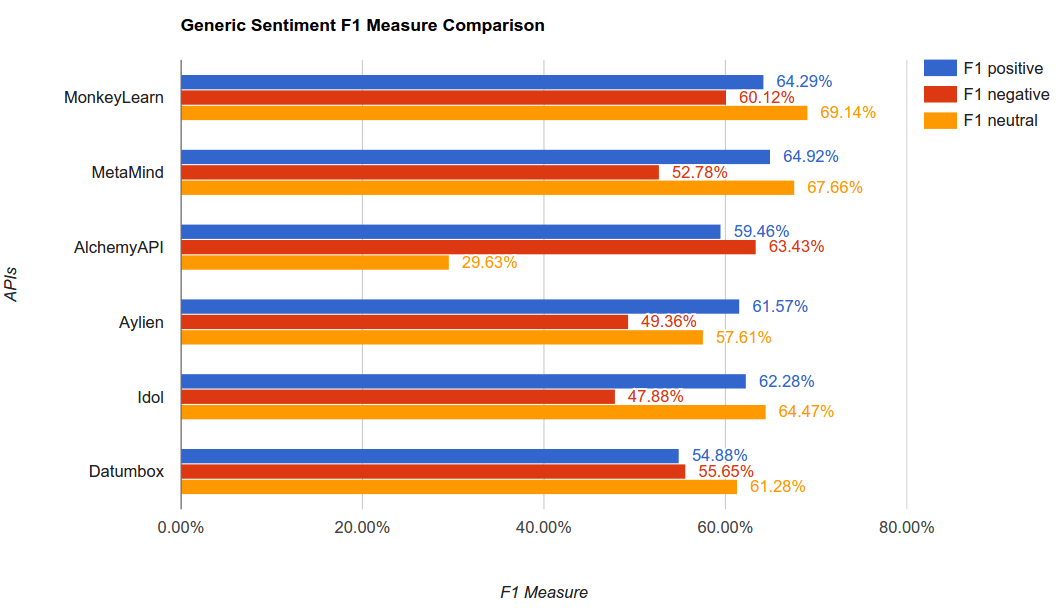

Going deeper into each tag, we calculated the F-measure (the harmonic mean of precision and recall) for each tag (positive, negative and neutral). These metrics give us a sense of how well each API performs on predictions within each particular tag.

The results are:

When you have to use a sentiment analysis classifier (or a machine learning classifier in general), you should decide which metrics are most important for your particular case: do you need to be more accurate at predicting positive or negative sentiment? As an example, if you are monitoring the reviews about your product, you may be more interested in detecting bad reviews than positive reviews in order to take corrective action as soon as possible.
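To make the per-tag metrics concrete, here is a small sketch that computes precision, recall and F-measure for each tag. The function name, the dictionary layout and the six example tags are assumptions made for illustration; the formula is the standard harmonic mean, F = 2 * precision * recall / (precision + recall).

```python
def per_tag_f_measure(human_tags, api_tags, tags=("positive", "neutral", "negative")):
    """Compute precision, recall and F-measure for each sentiment tag."""
    pairs = list(zip(human_tags, api_tags))
    scores = {}
    for tag in tags:
        true_pos = sum(1 for human, api in pairs if human == tag and api == tag)
        false_pos = sum(1 for human, api in pairs if human != tag and api == tag)
        false_neg = sum(1 for human, api in pairs if human == tag and api != tag)
        predicted = true_pos + false_pos
        actual = true_pos + false_neg
        precision = true_pos / predicted if predicted else 0.0
        recall = true_pos / actual if actual else 0.0
        denominator = precision + recall
        f_measure = 2 * precision * recall / denominator if denominator else 0.0
        scores[tag] = {"precision": precision, "recall": recall, "f_measure": f_measure}
    return scores


# Invented tags for six tweets.
human = ["positive", "neutral", "negative", "neutral", "positive", "negative"]
api = ["positive", "neutral", "neutral", "neutral", "negative", "negative"]
scores = per_tag_f_measure(human, api)
for tag, metrics in scores.items():
    print(tag, metrics)
```

If detecting bad reviews matters most, the recall on the negative tag is the number to watch, since it tells you what share of the truly negative texts the API actually caught.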

If you want to reproduce the benchmark, you can download the dataset with the tweets and the corresponding sentiment tags (by humans and APIs). You can also access the source code written in Python to run the APIs to get the corresponding metrics or take a look at our generic Twitter sentiment analysis classifier.

CrowdFlower Methodology

In order to create a human-tagged dataset of tweets to benchmark the APIs' predictions, we used CrowdFlower, the leading people-powered data enrichment platform, where users can distribute micro-tasks (like tagging a tweet with a sentiment) to over 5 million contributors.

Within CrowdFlower, we created a job and asked their contributors to classify these 1734 tweets according to their sentiment: positive, negative, and neutral.

It is worth noting that each of those tweets was tagged by at least 3 different and independent CrowdFlower contributors. For example, if a certain tweet was classified by 2 contributors as 'positive' and by 1 contributor as 'neutral', we used the majority decision and determined the sentiment of the tweet as 'positive'.
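The majority decision can be sketched in a few lines. The function name is hypothetical, and returning None when there is a tie (for example, three contributors choosing three different tags) is an assumption of this sketch, since the tie-handling used for the dataset is not described here.

```python
from collections import Counter


def majority_sentiment(votes):
    """Return the tag chosen by most contributors, or None on a tie."""
    counts = Counter(votes).most_common()
    if not counts:
        return None
    # Assumption: a tie for first place yields no decision.
    if len(counts) > 1 and counts[0][1] == counts[1][1]:
        return None
    return counts[0][0]


print(majority_sentiment(["positive", "positive", "neutral"]))
print(majority_sentiment(["positive", "neutral", "negative"]))
```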

While tagging, contributors were also presented with control tweets to check that they were tagging correctly. Based on these control tweets, CrowdFlower assigns a confidence score that helped us ensure that the resulting dataset was tagged only by trusted and proven contributors.

Other Benchmark Results

Sentiment analysis is such a complex machine learning task that it works much better when a customized algorithm is tailored specifically for a particular use case.

As we mentioned before, expressions and their corresponding sentiment vary depending on the particular domain where they are used.

For domain-specific sentiment analysis, MonkeyLearn has an edge over the rest of the APIs; developers can easily create their own custom text classifiers that fit their particular needs.

We wanted to dig deeper within this benchmark and see how the APIs performed if we ran sentiment analysis on specific use cases. We also wanted to see how much of an edge it is to be able to build a custom machine learning algorithm per use case.

For this part of the benchmark we:

- Got industry-specific, human-made datasets that CrowdFlower has made publicly available as part of their Data for Everyone initiative.

- Used part of this data to quickly train MonkeyLearn classifiers in a matter of minutes.

- Used the rest of the data to test the APIs (training and testing sets are disjoint).

- Compared the human tagging with the APIs' predictions.

For that purpose, we picked three datasets that caught our attention and that we think are representative of different verticals:

- Tweets about brands and products.

- Tweets about Apple products.

- Tweets about airlines.
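The disjoint training and testing sets mentioned above can be produced with a simple shuffled split. This is only a sketch: the function name, the fixed seed and the 80/20 proportion are assumptions made for illustration, as the actual proportions used in the benchmark are not stated here.

```python
import random


def split_dataset(examples, train_fraction=0.8, seed=0):
    """Shuffle the examples and split them into disjoint train and test lists."""
    shuffled = list(examples)
    # A fixed seed makes the split reproducible between runs.
    random.Random(seed).shuffle(shuffled)
    cut = int(len(shuffled) * train_fraction)
    return shuffled[:cut], shuffled[cut:]


# Invented placeholder examples: (text, human tag) pairs.
examples = [(f"tweet {i}", "neutral") for i in range(10)]
train, test = split_dataset(examples)
print(len(train), "training examples,", len(test), "testing examples")
```

Because every example lands in exactly one of the two lists, a classifier trained on the first list is never evaluated on a tweet it has already seen.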

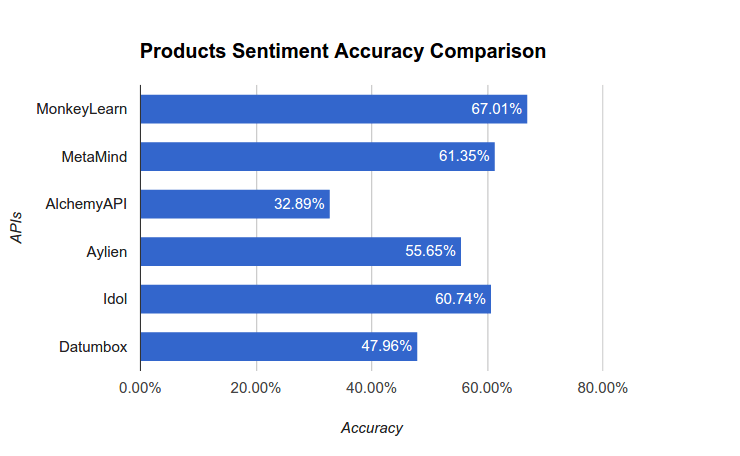

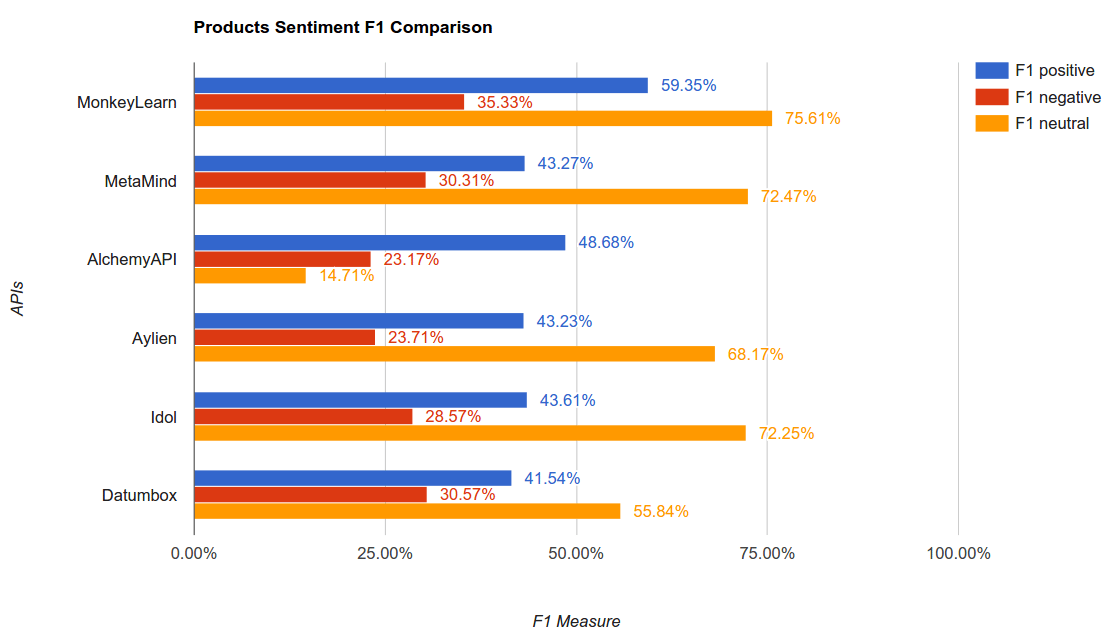

Tweets about brands and products

We used this public dataset where CrowdFlower contributors evaluated tweets about multiple brands and products according to their sentiment. These are the results when we compared the APIs to the human tagging:

You can download the dataset with these tweets about products and the corresponding sentiment tags (by humans and APIs) here.

You can try the MonkeyLearn classifier we built for sentiment analysis of tweets about Brands and Products here.

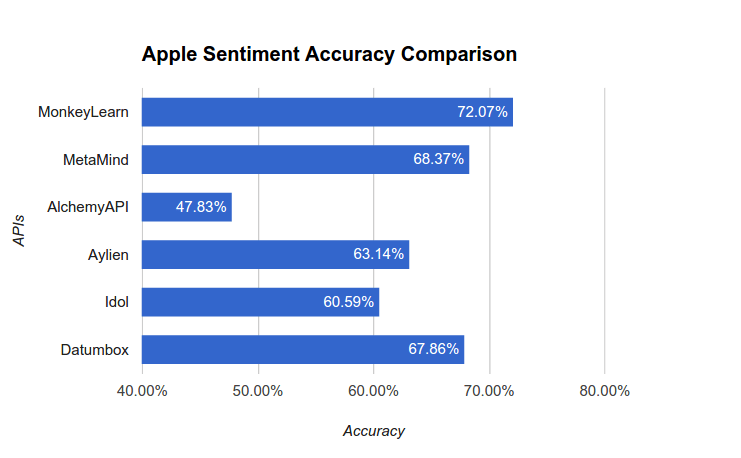

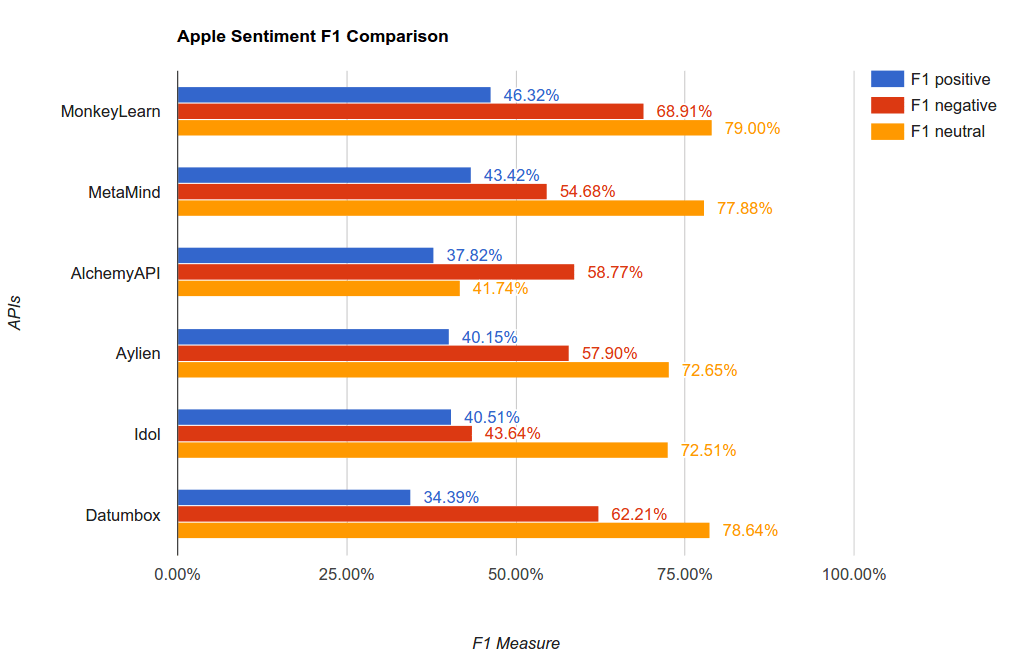

Tweets about Apple Products

We wanted to go even deeper and perform sentiment analysis of tweets related to only one specific brand: Apple.

So, we used this public dataset where CrowdFlower contributors were given a tweet and asked whether the tweet was positive, negative, or neutral about Apple. These are the results when we compare the APIs vs. the human tagging:

You can download the dataset with the tweets about Apple and the corresponding sentiment tags (by humans and APIs) here.

You can try the MonkeyLearn classifier we built for sentiment analysis of tweets about Apple here.

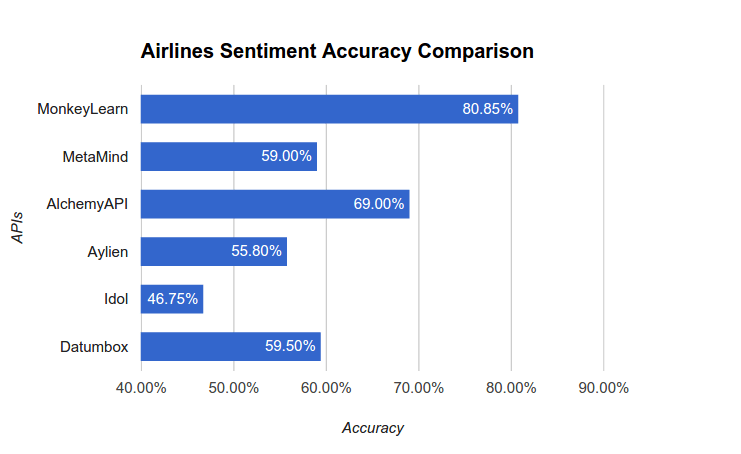

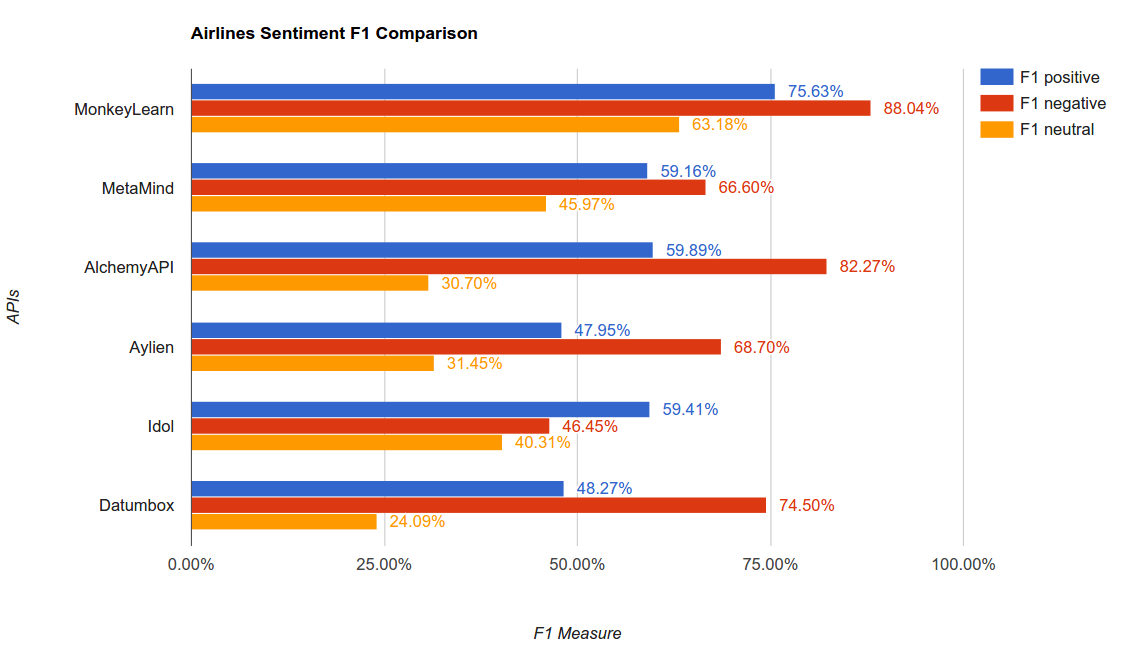

Tweets about Airlines

We wanted to try another industry vertical so we could compare how the APIs perform in other areas.

So, we used this dataset where CrowdFlower contributors were asked to classify positive, negative, and neutral tweets related to U.S. major airlines. These are the results when we compare the APIs versus the human tagging:

You can download the dataset with these tweets about U.S. airlines and the corresponding sentiment tags (by humans and APIs) here.

You can try the MonkeyLearn classifier we built for sentiment analysis of tweets about U.S. airlines here.

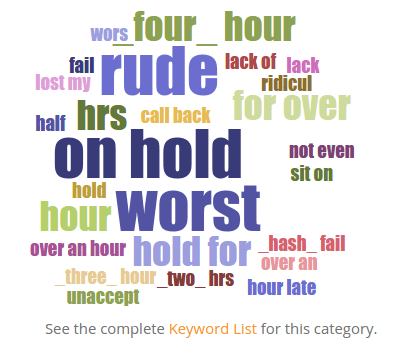

If you take a look at the classifier, you will see how MonkeyLearn's algorithms learned which terms are most correlated to each tag (or sentiment). For example, the following is a keyword cloud of some of the terms correlated to the negative tag:

If you take a look at the detailed list, you can see how particular terms related to the sentiment about airlines are learned.

Conclusions

Sentiment analysis is a very complex machine learning task. Not only do algorithms have problems predicting the sentiment of a tweet, but humans have a rough time agreeing on the sentiment behind a tweet or any given text.

The good thing about using APIs is that machines are scalable, so if you have to process huge amounts of texts in real time, the best option is to go with machine learning. Besides, machine learning algorithms can be improved over time simply by adding more text data.

Nowadays, any developer or company can quickly integrate this type of analysis into their apps or platforms, but they must try the options and choose wisely which API to integrate.

Finally, it's much more accurate to use a customized classifier that fits your specific needs and use case than to use a generic one-size-fits-all sentiment analysis API. MonkeyLearn has an advantage in that:

- It provides an easy-to-use graphical user interface to quickly train customized machine learning algorithms on the fly without being an expert.

- It allows rapid prototyping and continuous improvement by adding more data and retraining in a couple of minutes.

- It gives access to advanced algorithms for feature extraction, which is one of the hardest aspects to deal with when working with Machine Learning applied to text analysis.

- And last but not least, it provides a cloud computing platform and API that isolate the developers from real world pains like deploying, optimizing and scaling a machine learning stack in order to get maximum reliability and performance in production.

We hope this post gave the reader an overview of the sentiment analysis problem and the tools available on the market. Our mission at MonkeyLearn is to democratize access to Natural Language Processing & Machine Learning technologies to empower every developer to build the next generation of intelligent internet applications.

Raúl Garreta

August 13th, 2015