Introduction to Text Analysis APIs

Long gone are the days when every single task was carried out by humans. We now live in an era where routine tasks are performed by machines, freeing us from tedious, time-consuming assignments.

Which is just as well when it comes to company data. Information is growing by the second, meaning traditional methods of manually processing text data are simply not scalable. That’s why more and more businesses are gradually replacing this task with machine learning techniques.

By using text analysis models powered by machine learning, you can process text data faster, at a greater scale, and more consistently than you could by hand.

For developers, the easiest way to leverage text analysis is by using APIs. Whether you choose open-source libraries or SaaS APIs, you’re sure to find an option that fits your needs. And it’s easier than you think to get started with text analysis.

In this post, you’ll learn about text analysis – what it is and the different text analysis APIs currently available on the market – then we’ll show you how to build a text analysis model from scratch.

Let’s get started!

What is Text Analysis?

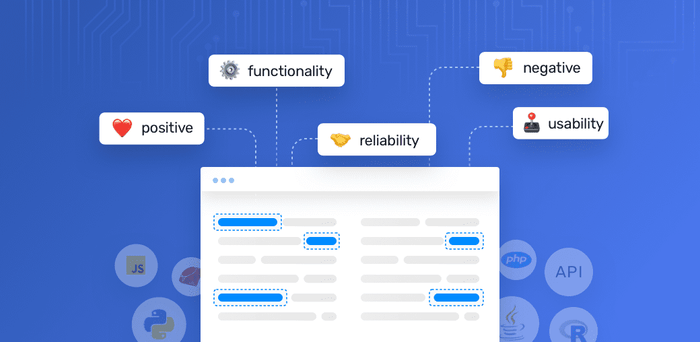

Text analysis is the automated process of structuring and gathering information from text. While there are plenty of methods for analyzing text data, the most frequently used techniques are text classification and text extraction.

Let’s start with extraction. This involves extracting information that’s already within your text data, such as keywords, names of brands, companies or people, prices, dates, client information, and so on.
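To make extraction a little more concrete, here is a minimal sketch that pulls prices and dates out of a sentence with regular expressions. The two patterns and the sample sentence are assumptions made up for this example, and most real-world extractors rely on trained models rather than hand-written rules like these.

```python
import re

# Hypothetical patterns for illustration only: dollar prices and ISO-style dates.
PRICE_RE = re.compile(r"\$\d+(?:\.\d{2})?")
DATE_RE = re.compile(r"\b\d{4}-\d{2}-\d{2}\b")


def extract_entities(text):
    """Return the prices and dates found in a piece of text."""
    return {
        "prices": PRICE_RE.findall(text),
        "dates": DATE_RE.findall(text),
    }


sample = "We paid $129.99 on 2019-10-21 and were refunded $20 a week later."
print(extract_entities(sample))
```

Rules like these are quick to write but brittle: a price written as "20 dollars" would slip through, which is one reason machine learning extractors are often preferred.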

Maybe you want to find out what your customers mention most often in a set of online reviews. By detecting patterns and frequency, a keyword extraction model can deliver a glimpse into which topics are being mentioned most and extract the most relevant words and expressions from these reviews.
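As a rough sketch of the frequency idea, the snippet below counts how often each word appears across a handful of reviews, ignoring very common words. The reviews, the tiny stop-word list, and the `top_keywords` helper are all hypothetical; a production keyword extractor would use a far richer notion of relevance than raw counts.

```python
import re
from collections import Counter

# A tiny, hand-picked stop-word list (an assumption; real lists are much longer).
STOP_WORDS = {"the", "a", "an", "and", "was", "is", "but", "to", "of", "in", "it"}


def top_keywords(reviews, n=3):
    """Count non-stop-word tokens across all reviews and return the n most common."""
    counts = Counter()
    for review in reviews:
        tokens = re.findall(r"[a-z']+", review.lower())
        counts.update(token for token in tokens if token not in STOP_WORDS)
    return counts.most_common(n)


reviews = [
    "The staff was friendly and the room was clean.",
    "Friendly staff, but the room was small.",
    "The room was clean and the location is great.",
]
print(top_keywords(reviews, n=3))
```

Here "room" comes out on top because it appears in all three reviews, which is exactly the kind of glimpse into frequently mentioned topics described above.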

Classification models, on the other hand, automatically categorize information by assigning tags that you’ve previously defined. Instead of extracting information that already exists within your data, a classifier assigns a label based on what the text as a whole is about.

For example, let’s say you want to categorize online reviews about your SaaS into the topics or themes they talk about. You can train a topic classifier that can predict tags such as Customer Support, UX, and Features based on the content of a piece of text. This classifier can make sense of a review such as “I had to wait forever before someone finally responded to my issue” and classify it as Customer Support.

Popular examples of text classifiers include:

- Sentiment analysis: detecting whether a text expresses a positive, negative, or neutral opinion.

- Topic analysis: understanding what a text is talking about (e.g. whether a product review is about customer service or pricing).

- Urgency detection: detecting if a text is urgent or not.

- Intent detection: detecting the purpose behind a customer conversation or enquiry (e.g. upgrade plan, unsubscribe from mailing list, etc.).

But how do these tools make sense of the human language? By harnessing the power of machine learning, text analysis models can automatically learn from data to process high volumes of information with accuracy, glean insights, and automate business processes.
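To give a feel for what "learning from data" means, here is a minimal sketch of a topic classifier built with Naive Bayes, one of the simplest machine learning algorithms for text. This is an illustration written in plain Python, not how any particular tool works internally; the class name, the six training examples, and the tags are assumptions borrowed from the SaaS review example above.

```python
import math
import re
from collections import Counter, defaultdict


def tokenize(text):
    """Lowercase a text and split it into word tokens."""
    return re.findall(r"[a-z']+", text.lower())


class NaiveBayesClassifier:
    """Minimal multinomial Naive Bayes with add-one (Laplace) smoothing."""

    def __init__(self):
        self.tag_counts = Counter()              # number of training texts per tag
        self.word_counts = defaultdict(Counter)  # word frequencies per tag
        self.vocabulary = set()

    def train(self, examples):
        for text, tag in examples:
            self.tag_counts[tag] += 1
            for word in tokenize(text):
                self.word_counts[tag][word] += 1
                self.vocabulary.add(word)

    def classify(self, text):
        total_texts = sum(self.tag_counts.values())
        best_tag, best_score = None, float("-inf")
        for tag, count in self.tag_counts.items():
            # Score = log P(tag) + sum of log P(word | tag).
            score = math.log(count / total_texts)
            total_words = sum(self.word_counts[tag].values())
            for word in tokenize(text):
                likelihood = (self.word_counts[tag][word] + 1) / (
                    total_words + len(self.vocabulary)
                )
                score += math.log(likelihood)
            if score > best_score:
                best_tag, best_score = tag, score
        return best_tag


# Hypothetical training data: (text, tag) pairs.
training_data = [
    ("I had to wait forever before someone responded to my issue", "Customer Support"),
    ("The support team never answered my ticket", "Customer Support"),
    ("The interface is confusing and hard to navigate", "UX"),
    ("The dashboard design is clean and easy to use", "UX"),
    ("I wish it had an export to PDF feature", "Features"),
    ("The new reporting feature is really useful", "Features"),
]

classifier = NaiveBayesClassifier()
classifier.train(training_data)
print(classifier.classify("Nobody from support responded to my ticket"))
```

The model never sees a rule like "support means Customer Support". It simply learns which words tend to appear with which tag, and that is why adding more tagged examples usually improves its predictions.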

Want to find out how to get started with text analysis? Keep on reading!

Text Analysis APIs

To use text analysis with machine learning, you can use either open-source or SaaS APIs.

Open-source libraries are publicly available, free, flexible, and can be tailored to suit your needs. However, you’ll need to build your machine learning models and the necessary infrastructure from scratch, which takes time and resources. Plus, you’ll need a solid understanding of machine learning.

On the other hand, text analysis APIs offered by SaaS tools are ready to use, allowing developers to quickly incorporate text analysis into their apps. If you opt to integrate SaaS tools into your apps via their APIs, you’ll only need to write a few lines of code, and you won’t have to worry about the underlying infrastructure or learn about machine learning.

Now that you know the advantages and disadvantages of using open-source and SaaS APIs, let’s take a look at some of the available options, starting with open-source libraries. There are countless libraries available for building text analysis models, but we’re going to introduce you to some of the most popular ones for Python, R, and Java:

APIs for Python

The most popular programming language among developers interested in machine learning is Python, so there are many options available for implementing text analysis models with this language, including:

NLTK: the Natural Language Toolkit is one of the best-in-class libraries for Natural Language Processing (NLP) as it provides a diverse set of tools including tokenizing, part-of-speech tagging, stemming, and named entity recognition. On top of this, it is one of the easier NLP packages to use and has a large community, as well as well-documented resources.

spaCy: an NLP library that offers industrial-strength capabilities and is widely used for text analysis. It provides pre-trained neural models for tagging, parsing, and entity recognition in several languages.

Scikit-learn: one of the most popular machine learning libraries for Python. It offers solid performance and flexibility when creating text analysis models, and features classification, regression, and clustering algorithms that work well with Python’s numerical and scientific libraries, NumPy and SciPy.

TensorFlow: one of the most widely used libraries for deep learning. Although it’s not recommended for people who are just getting started with machine learning, those with more experience can use it to build deep learning text analysis models from scratch.

PyTorch: a deep learning library built by Facebook and based on the Torch library. It is mainly used for computer vision and natural language processing applications. It provides an intuitive experience when building machine learning models, and has quickly developed a strong support community.

Keras: a high-level library that is capable of running on top of TensorFlow, CNTK, or Theano. Keras was developed for easy and fast prototyping, with a focus on user-friendliness and extensibility.

APIs for R

R is a software environment that is mainly used by statisticians and data miners looking to develop statistical software for data analysis. Some of the most popular packages in R for text analysis include:

Caret: an R package with multiple machine learning functions, from data ingestion and preprocessing to feature selection and automatic model tuning.

mlr: short for Machine Learning in R, this framework provides the infrastructure for supervised methods such as classification, regression, and survival analysis, as well as unsupervised methods such as clustering.

APIs for Java

Java has one of the largest ecosystems for your Natural Language Processing needs:

CoreNLP: Stanford’s very own NLP toolkit that is written in Java and includes APIs for all major programming languages. It is capable of implementing bag of words, recognizing parts of speech, normalizing numeric quantities, marking up the structure of sentences, indicating noun phrases and sentiment, extracting quotes, and much more.

OpenNLP: created by the Apache Software Foundation, this machine learning-based toolkit is designed for processing natural language text. It supports language detection, tokenization, sentence segmentation, part-of-speech tagging, named entity extraction, chunking, parsing, and coreference resolution.

Weka: this library offers a collection of machine learning algorithms for data mining tasks. It includes tools for data preparation, classification, regression, clustering, association rule mining, and visualization.

SaaS APIs

Don’t want to invest too much time learning about machine learning or building the necessary infrastructure? Well, luckily there’s an alternative to open-source tools: SaaS APIs for text analysis. These tools offer APIs that are simple to use and don’t require machine learning expertise to get started analyzing data. Here are some of the best ones available for text analysis:

Create Your Own Text Analysis Model with MonkeyLearn

MonkeyLearn makes it easy for you to get started with text analysis. With its intuitive interface and simple API, you can use machine learning models for text analysis in next to no time.

You can use one of our pre-trained models, from sentiment analysis to keyword extraction models, to glean insights from your data immediately.

Or, if you’re looking for more accurate insights, we recommend you build a text analysis model tailored to your needs and your business.

In this section, we’ll walk you through the steps to create a custom classifier with MonkeyLearn. For this tutorial, the classifier will be trained to analyze hotel reviews and tag the topics or themes they talk about (things like Price, Service, Cleanliness, and Location). Once you’ve learned how to create a classifier, we’ll show you how to use the model’s API to analyze incoming data and make predictions.

Let’s get started!

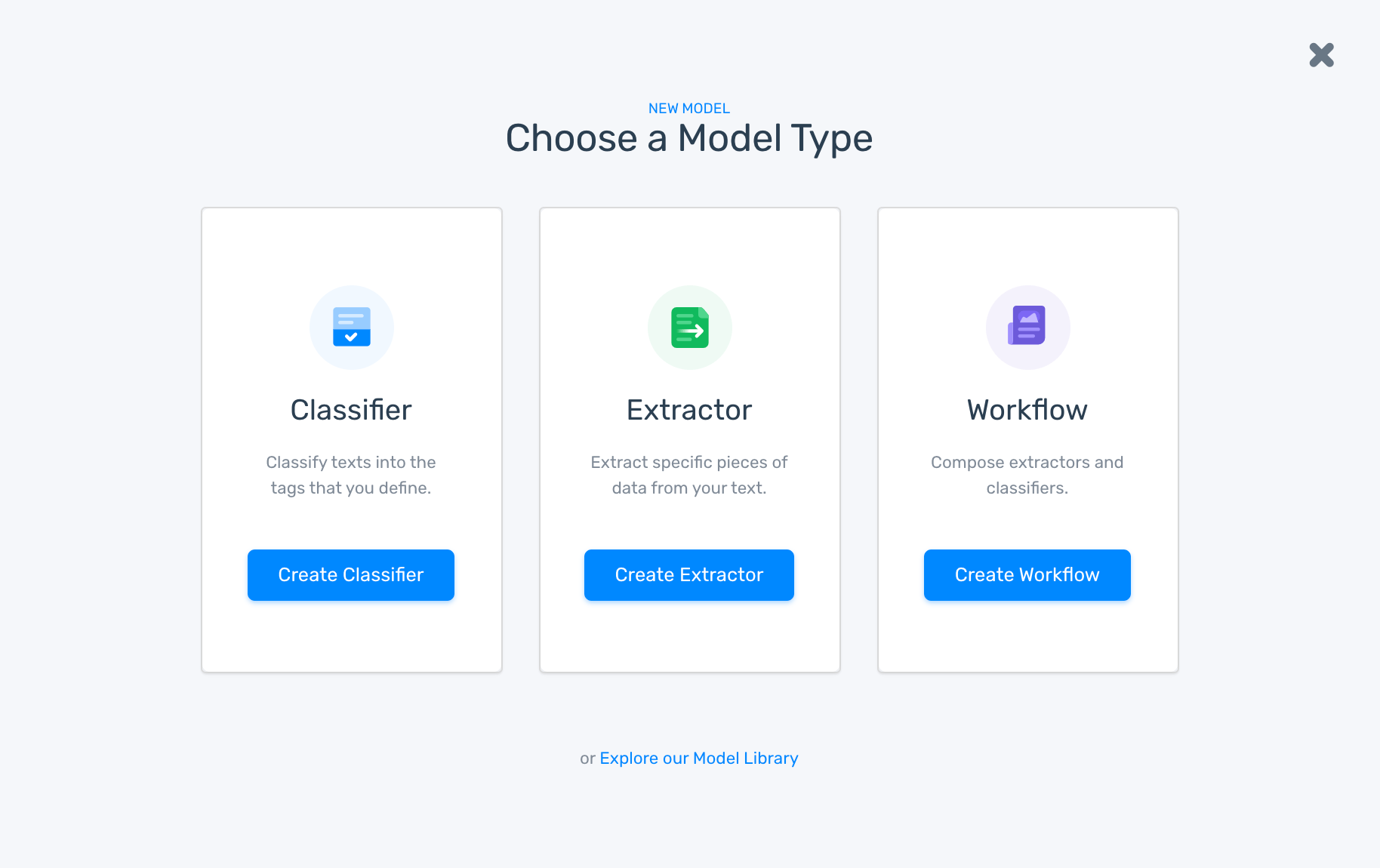

1. Create the model

First, you’ll need to sign up to MonkeyLearn for free. Once you’ve logged into your account, go to MonkeyLearn’s dashboard and click on ‘create model’. This action will prompt you to choose a model type. For the purpose of this tutorial, select ‘create classifier’:

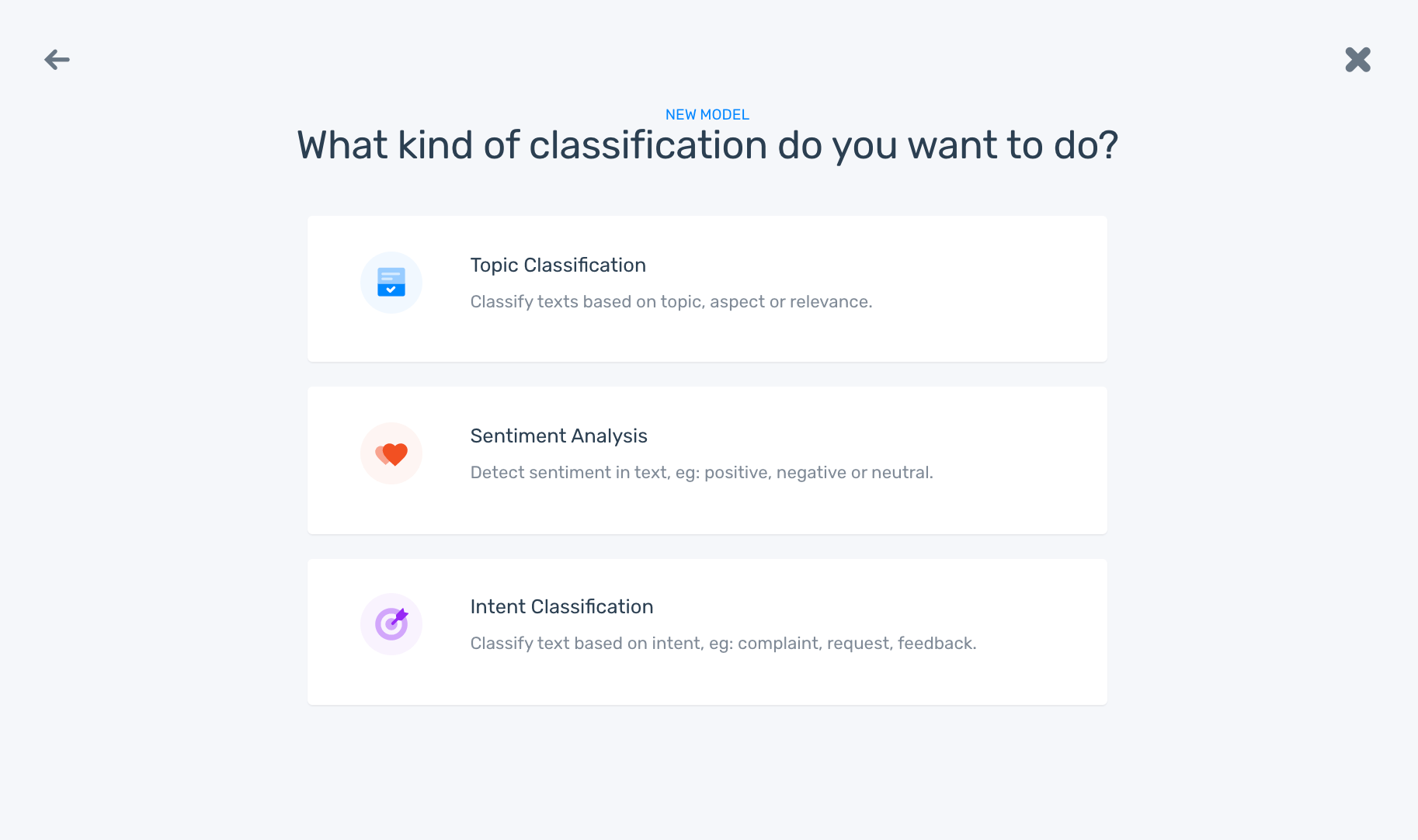

2. Choose the type of classification

The next step is all about the type of classifier you want to build. Go ahead and choose topic classification:

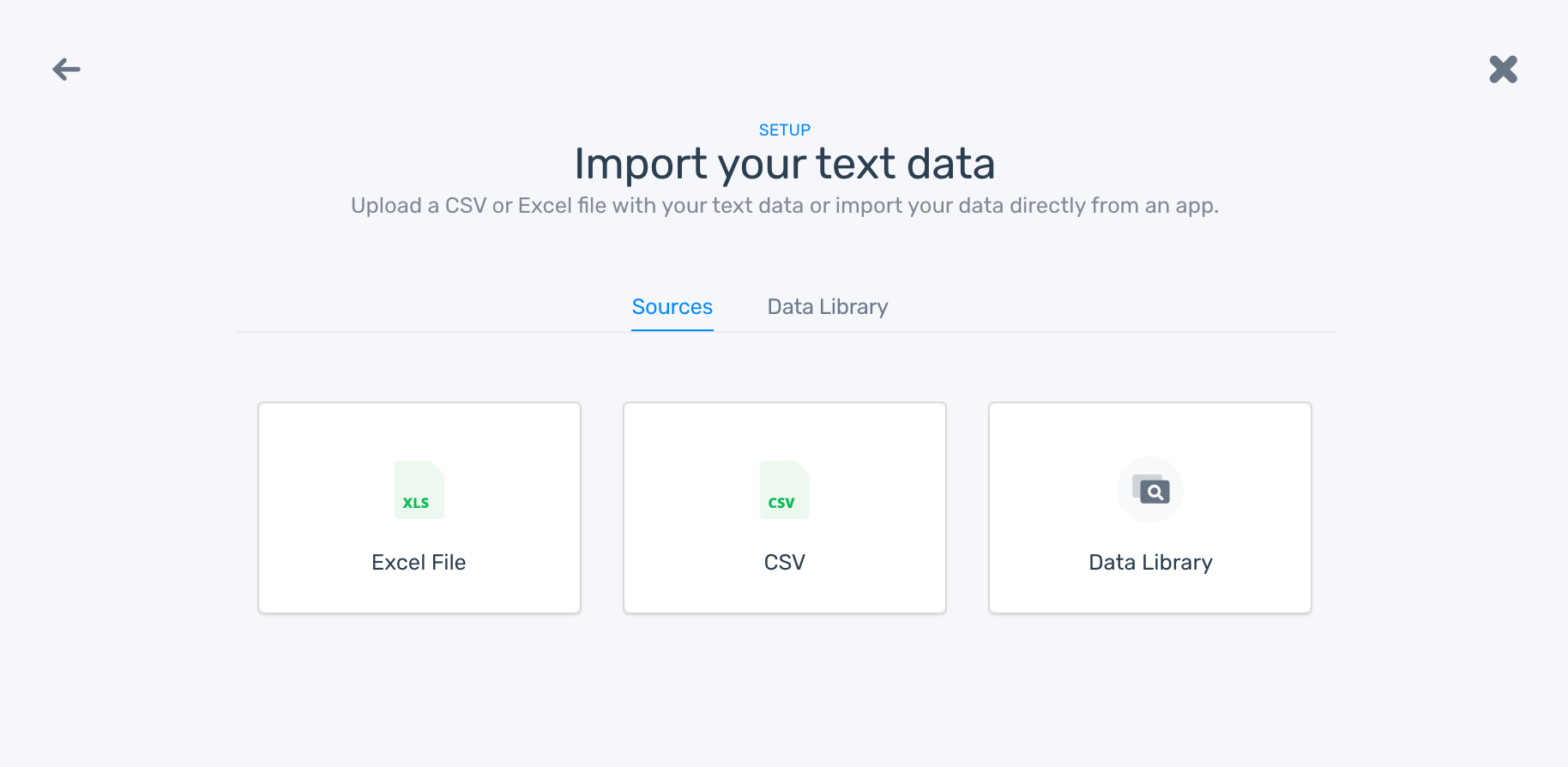

3. Import text data

Your model’s most important ingredient is data! In this step, you’ll need to upload data so you can then train your machine learning model. With MonkeyLearn, you can upload data from various sources, including from Excel and CSV files, or by using one of our many integrations.

For this step, go to ‘Data Library’ and download a public dataset with hotel reviews:

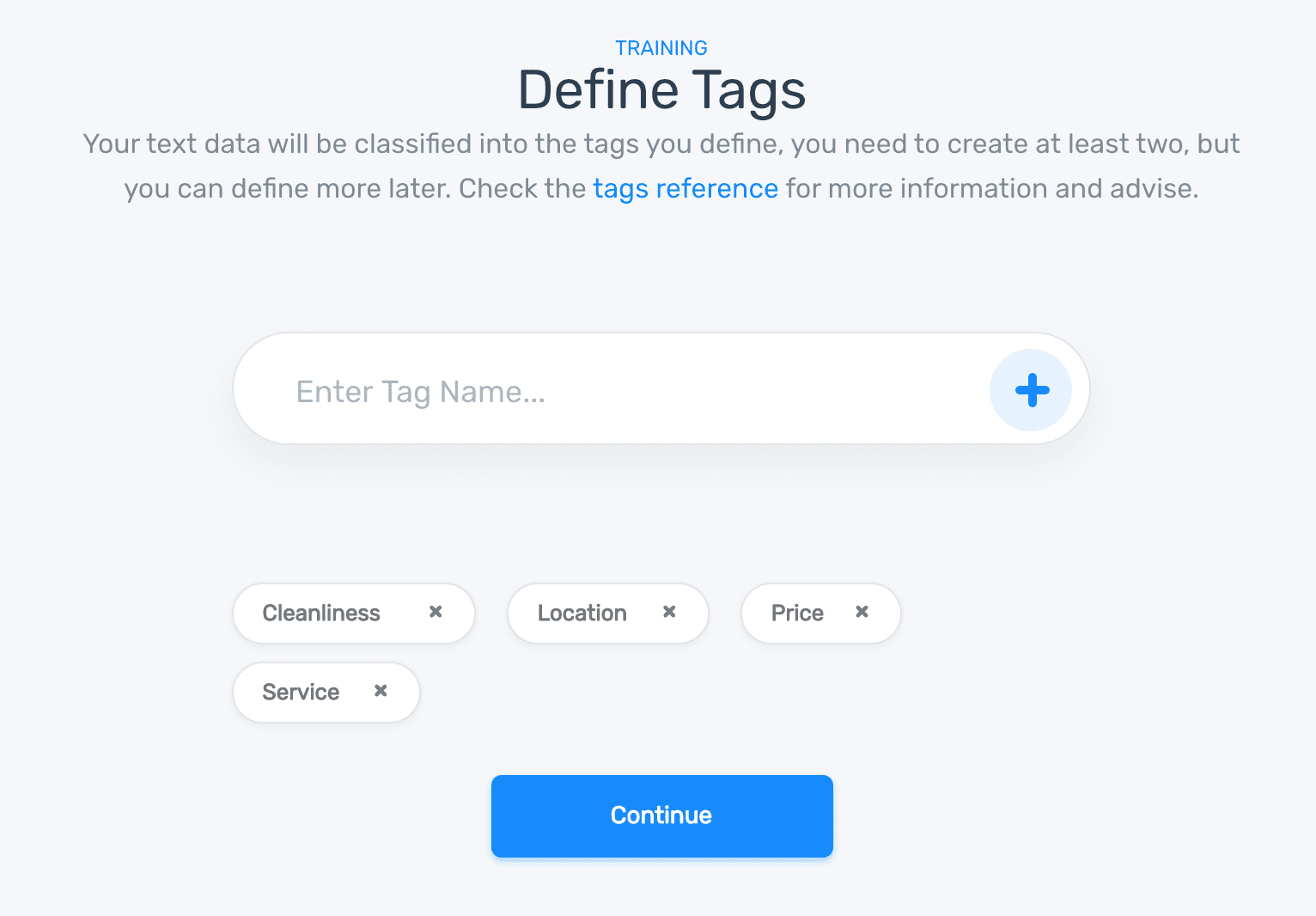
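If you would rather upload your own reviews than use the public dataset, you first need them in a CSV file. The sketch below shows one way to prepare that file with Python’s standard library. The sample reviews, the `reviews_to_csv` helper, and the single `text` column header are assumptions for this example, so check which columns your upload actually expects.

```python
import csv
import io

# Hypothetical reviews; in practice these would come from your own data source.
reviews = [
    "The location is great",
    "The hotel staff was rude to us",
    "   ",  # blank entries like this one are dropped
    "Room was spotless, but a bit pricey",
]


def reviews_to_csv(texts):
    """Write non-empty, whitespace-trimmed texts to CSV with a single 'text' column."""
    buffer = io.StringIO()
    writer = csv.writer(buffer)
    writer.writerow(["text"])  # assumed header name
    for text in texts:
        cleaned = text.strip()
        if cleaned:
            writer.writerow([cleaned])
    return buffer.getvalue()


print(reviews_to_csv(reviews))
```

Using the `csv` module instead of joining strings by hand means that reviews containing commas or quotes are escaped correctly. To save the result, write the returned string to a file such as `reviews.csv`.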

4. Define tags

Think of tags as the different topics or themes that you want your model to make predictions on, then add them to your classifier. For this example, we want to analyze hotel reviews, so our tags are going to include Price, Service, Cleanliness, and Location.

5. Train your model

This is where the real fun begins. Training is one of the most important steps, if not the most important, in creating a machine learning model. To start training your model, tag text examples with your previously defined tags (topics).

You’ll need to classify at least 10 texts for each tag before your model can start making predictions on its own. As you tag text data, the accuracy of your model increases.
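If you keep a copy of your tagged examples outside the tool, a quick count can tell you which tags still fall short of that minimum. This is a small sketch under that assumption; the `tags_needing_examples` helper and the sample data are hypothetical.

```python
from collections import Counter

MIN_EXAMPLES_PER_TAG = 10  # the minimum mentioned above
TAGS = ["Price", "Service", "Cleanliness", "Location"]


def tags_needing_examples(labeled_examples, tags=TAGS, minimum=MIN_EXAMPLES_PER_TAG):
    """Return {tag: how many more examples are needed} for under-represented tags."""
    counts = Counter(tag for _, tag in labeled_examples)
    return {tag: minimum - counts[tag] for tag in tags if counts[tag] < minimum}


# Hypothetical labeled data: (text, tag) pairs, repeated here only to keep the sketch short.
labeled = (
    [("Great value for money", "Price")] * 10
    + [("The staff was helpful", "Service")] * 7
    + [("Right next to the beach", "Location")] * 12
)
print(tags_needing_examples(labeled))
```

In this made-up dataset, Service needs three more examples and Cleanliness has none at all, so those are the tags to focus on next.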

6. Test your model

After tagging your data, you can either continue to train the model by uploading more data, or test it and see how it performs.
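One simple way to judge performance yourself is to set aside a few reviews whose correct tags you already know, run them through the model, and compare. The sketch below computes overall accuracy and per-tag recall from two lists of tags. The `evaluate` helper and the sample tags are assumptions for illustration, not part of any tool’s API.

```python
def evaluate(predictions, expected):
    """Return overall accuracy and per-tag recall for two equal-length tag lists."""
    if not expected or len(predictions) != len(expected):
        raise ValueError("predictions and expected must be non-empty and equal in length")
    correct_per_tag = {}
    total_per_tag = {}
    for predicted, actual in zip(predictions, expected):
        total_per_tag[actual] = total_per_tag.get(actual, 0) + 1
        if predicted == actual:
            correct_per_tag[actual] = correct_per_tag.get(actual, 0) + 1
    accuracy = sum(correct_per_tag.values()) / len(expected)
    recall = {
        tag: correct_per_tag.get(tag, 0) / total
        for tag, total in total_per_tag.items()
    }
    return accuracy, recall


# Hypothetical held-out examples: the tags you expect vs. the tags the model predicted.
expected = ["Price", "Service", "Location", "Service", "Cleanliness"]
predicted = ["Price", "Service", "Location", "Location", "Cleanliness"]

accuracy, recall = evaluate(predicted, expected)
print(f"accuracy={accuracy:.2f}", recall)
```

A low recall for a single tag (Service in this made-up data) is a hint that the model needs more training examples for that tag in particular.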

7. Integrate the model with its API

Now that you have trained a customized classifier for topic detection, you can use it to analyze new text data by calling the model’s API with Python, Ruby, PHP, JavaScript, or Java.

For example, here’s how to make a request to MonkeyLearn’s API using Python:

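    from monkeylearn import MonkeyLearn

    # Authenticate with your MonkeyLearn API key.
    ml = MonkeyLearn('<<Your API key here>>')

    # The texts to classify and the ID of the classifier you trained.
    data = ['The location is great', 'The hotel staff was rude to us']
    model_id = '<<Insert model ID here>>'

    # Send the texts to the classifier and print the parsed response.
    result = ml.classifiers.classify(model_id, data)
    print(result.body)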

And here’s the output for this request:

    [{
        'text': 'The location is great',
        'classifications': [{
            'tag_name': 'Location',
            'confidence': 0.971,
            'tag_id': 34737175
        }],
        'error': False,
        'external_id': None
    }, {
        'text': 'The hotel staff was rude to us',
        'classifications': [{
            'tag_name': 'Service',
            'confidence': 0.932,
            'tag_id': 35167175
        }],
        'error': False,
        'external_id': None
    }]
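In an application, you will usually want to turn a response like this into something simpler, such as the best tag for each text. Here is a minimal sketch that does so using only the fields visible in the output above. The `top_tags` helper and the `min_confidence` threshold are assumptions for illustration, not part of the MonkeyLearn client.

```python
def top_tags(response, min_confidence=0.5):
    """Map each text to its highest-confidence tag, or None below the threshold."""
    results = {}
    for item in response:
        # Skip texts that failed or came back without any classification.
        if item.get("error") or not item.get("classifications"):
            results[item["text"]] = None
            continue
        best = max(item["classifications"], key=lambda c: c["confidence"])
        confident = best["confidence"] >= min_confidence
        results[item["text"]] = best["tag_name"] if confident else None
    return results


# The response shown above, trimmed to the fields this helper reads.
response = [
    {"text": "The location is great",
     "classifications": [{"tag_name": "Location", "confidence": 0.971}],
     "error": False},
    {"text": "The hotel staff was rude to us",
     "classifications": [{"tag_name": "Service", "confidence": 0.932}],
     "error": False},
]
print(top_tags(response))
```

Raising `min_confidence` lets you route uncertain predictions to a human reviewer instead of trusting them blindly.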

And there you have it, your own custom classifier that uses machine learning to analyze data!

Wrap-up

Text analysis with machine learning lets you extract value from data quickly, reliably, and cost-effectively. Instead of analyzing data manually, you can use one of the available APIs to make better use of your data and resources.

Luckily, there are various tools out there that can help you use text analysis successfully, such as open-source libraries and SaaS APIs. Open-source tools are great because of their flexibility and capabilities, but they require time and resources, plus extensive machine learning knowledge. SaaS tools such as MonkeyLearn, on the other hand, are ready to use and allow you to integrate text analysis right away.

If you want to learn more about MonkeyLearn and what our solution can do for you, be sure to request a demo so our team can help you integrate text analysis into your business.

Federico Pascual

October 21st, 2019