Text Classification Using Naive Bayes

There are many machine learning algorithms to choose from when doing text classification. One family of those algorithms is known as Naive Bayes (NB), which can provide accurate results without much training data.

In this article, we will explore the advantages of using one member of the Bayesian family (namely, Multinomial Naive Bayes, or MNB) in text classification, and help you get started with MNB-based models in MonkeyLearn.

Converting Texts into Vectors

To leverage the power of Bayesian text classification, texts first have to be transformed into vectors.

Vectors are (sometimes huge) lists of numbers. That’s it.

Those numbers will help the algorithm decide whether the vector representation of a text belongs to a category or not. There are a great many ways of encoding texts as vectors. If you want to learn about some of them, read this.
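As a minimal sketch of what such a vector might look like, the snippet below builds simple word-count ("bag of words") vectors in plain Python. The two sample sentences and the helper names `tokenize`, `build_vocabulary`, and `vectorize` are made up for illustration; this is just one of the many possible encodings, not necessarily the one MonkeyLearn uses internally.

```python
from collections import Counter


def tokenize(text):
    """Lowercase a text, replace punctuation with spaces, and split into words."""
    cleaned = "".join(ch if ch.isalnum() else " " for ch in text.lower())
    return cleaned.split()


def build_vocabulary(texts):
    """Assign every distinct word in the corpus a fixed position in the vector."""
    words = sorted({word for text in texts for word in tokenize(text)})
    return {word: index for index, word in enumerate(words)}


def vectorize(text, vocabulary):
    """Return a list of word counts, one entry per vocabulary word."""
    counts = Counter(tokenize(text))
    return [counts.get(word, 0) for word in vocabulary]


# Hypothetical sample texts, not taken from any real dataset.
texts = ["The room was clean", "The staff was friendly, very friendly"]
vocabulary = build_vocabulary(texts)
vectors = [vectorize(text, vocabulary) for text in texts]

print(list(vocabulary))  # vector positions, in alphabetical order
print(vectors)
```

Each text becomes a list with one number per vocabulary word, so every text in the corpus ends up with a vector of the same length regardless of how long the text is.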

Now, one of the many things you can encode in vectors is the probability that a word, or a sequence of n words (also known as an n-gram), appears in a text or in a category. A Naive Bayes text classifier is based on Bayes’ theorem, which lets us compute the conditional probability of one event given another from the reverse conditional probability and the probabilities of each individual event, so encoding those probabilities is extremely useful.
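To make the idea of n-gram probabilities concrete, here is a small sketch that estimates them as relative frequencies over the texts of a single category. The sample texts and the function names `ngrams` and `ngram_probabilities` are assumptions made up for this example, and whitespace splitting stands in for a real tokenizer.

```python
from collections import Counter


def ngrams(words, n):
    """Return every run of n consecutive words as a tuple."""
    return [tuple(words[i:i + n]) for i in range(len(words) - n + 1)]


def ngram_probabilities(texts, n):
    """Estimate each n-gram's probability as its share of all n-grams seen."""
    counts = Counter()
    for text in texts:
        counts.update(ngrams(text.lower().split(), n))
    total = sum(counts.values())
    return {gram: count / total for gram, count in counts.items()}


# Hypothetical texts belonging to one category.
ease_of_use_texts = ["the user interface is simple", "a clean user interface"]

unigram_probs = ngram_probabilities(ease_of_use_texts, 1)
bigram_probs = ngram_probabilities(ease_of_use_texts, 2)

print(unigram_probs[("user",)])             # 2 of the 9 words
print(bigram_probs[("user", "interface")])  # 2 of the 7 bigrams
```

With n = 1 the n-grams are single words; with n = 2 they are word pairs, which lets the encoding capture short phrases such as "user interface".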

For example, take a feedback classifier for SaaS products. If the words user and interface are more likely to appear in texts tagged Ease of Use than in texts tagged Pricing or Features, the MNB text classifier can use that to predict how likely an unseen text containing those words is to belong to each category.
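The example above can be sketched as a tiny Multinomial Naive Bayes classifier written from scratch. Everything here is illustrative: the six training texts are invented, the functions `train` and `predict` are hypothetical names, and add-one (Laplace) smoothing is an assumption chosen so that unseen word and category pairs do not produce zero probabilities. It is not a description of how MonkeyLearn implements MNB.

```python
import math
from collections import Counter, defaultdict


def train(samples):
    """Count words per category and texts per category."""
    word_counts = defaultdict(Counter)
    doc_counts = Counter()
    for text, category in samples:
        doc_counts[category] += 1
        word_counts[category].update(text.lower().split())
    vocabulary = {word for counts in word_counts.values() for word in counts}
    return word_counts, doc_counts, vocabulary


def predict(text, word_counts, doc_counts, vocabulary):
    """Score each category with log P(category) + sum of log P(word | category)."""
    total_docs = sum(doc_counts.values())
    scores = {}
    for category in doc_counts:
        score = math.log(doc_counts[category] / total_docs)  # log prior
        total_words = sum(word_counts[category].values())
        for word in text.lower().split():
            if word not in vocabulary:
                continue  # words never seen in training carry no evidence
            # Add-one smoothing (an assumption of this sketch).
            likelihood = (word_counts[category][word] + 1) / (
                total_words + len(vocabulary)
            )
            score += math.log(likelihood)
        scores[category] = score
    return max(scores, key=scores.get), scores


# Hypothetical training data for a SaaS feedback classifier.
samples = [
    ("the user interface is intuitive", "Ease of Use"),
    ("simple interface for every user", "Ease of Use"),
    ("the monthly price is too high", "Pricing"),
    ("cheaper plans would be nice", "Pricing"),
    ("it lacks an export feature", "Features"),
    ("great reporting and export options", "Features"),
]

model = train(samples)
label, scores = predict("a confusing user interface", *model)
print(label)
```

Working in log space avoids multiplying many small probabilities together, which would otherwise underflow on longer texts. Because user and interface appear only in the Ease of Use samples, that category gets the highest score for the test sentence.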

You can learn more about Naive Bayes text classification in this blog post, which explains how probabilities are calculated over a sample training dataset and how easy it can be to determine whether a text belongs to a category just by looking at its words.

Creating a Text Classifier with Naive Bayes

You can create your first classifier with Naive Bayes using MonkeyLearn, an easy-to-use platform for building and consuming text analysis models.

First, you'll need to sign up for MonkeyLearn for free.

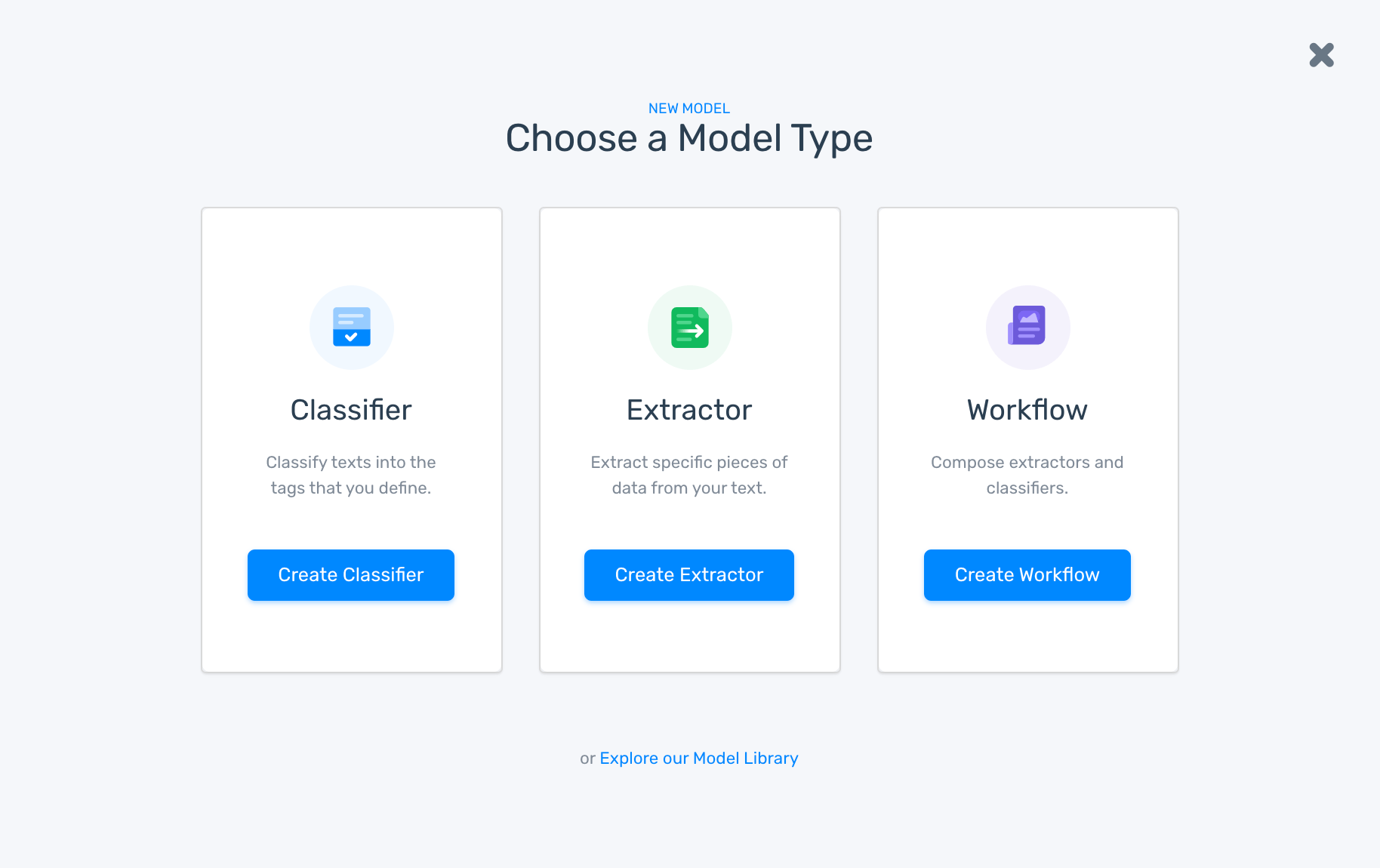

1. Choose a Model

Go to MonkeyLearn's dashboard, click on create a model, and choose Classifier:

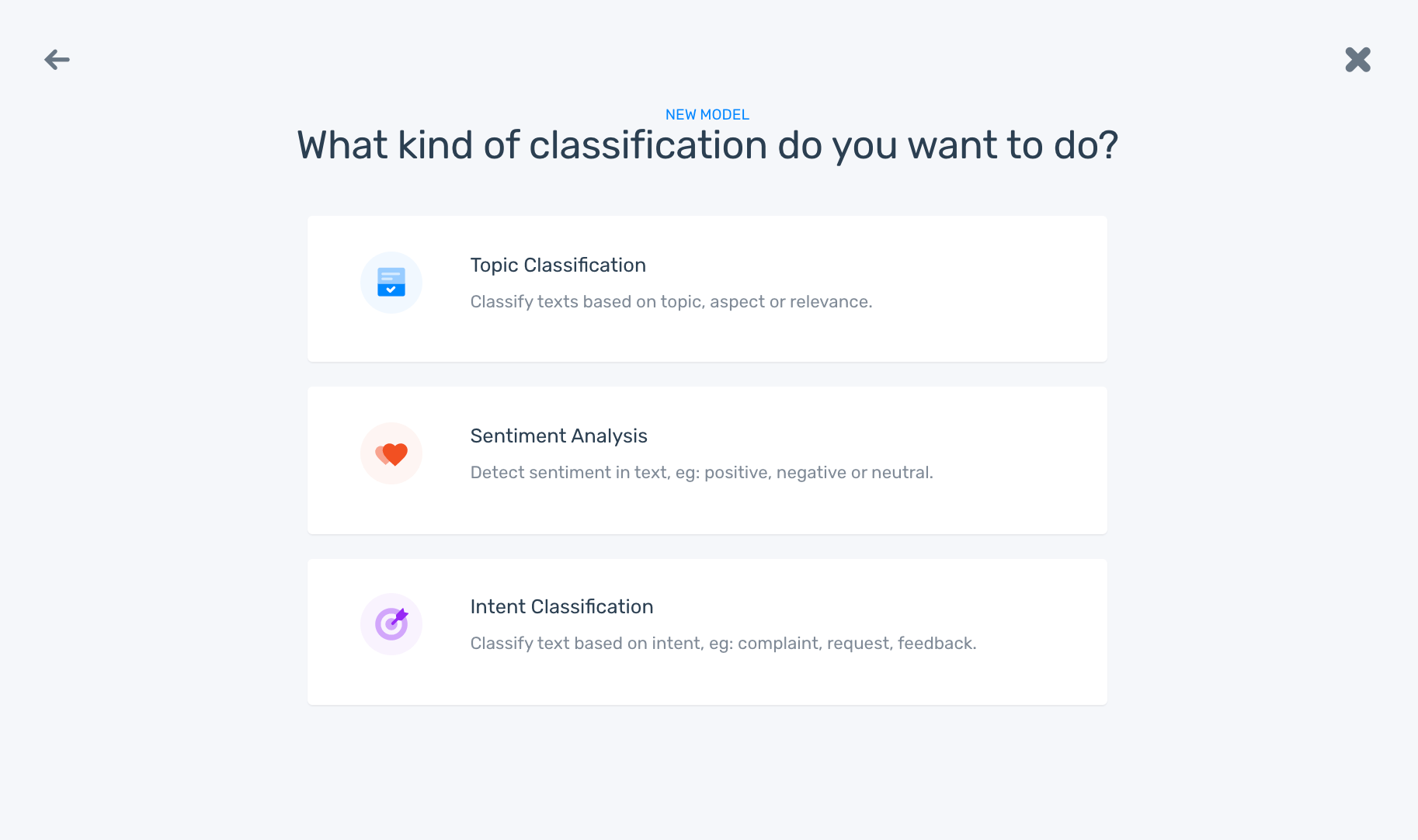

2. Choose Classification Type

Then, choose the type of classification task you would like to do. In this quick tutorial, we will focus on one specific problem: analyzing the topics discussed in hotel reviews. So let’s choose Topic Classification.

By the way, remember that text classification using Naive Bayes might work just as well for other tasks, such as sentiment or intent classification.

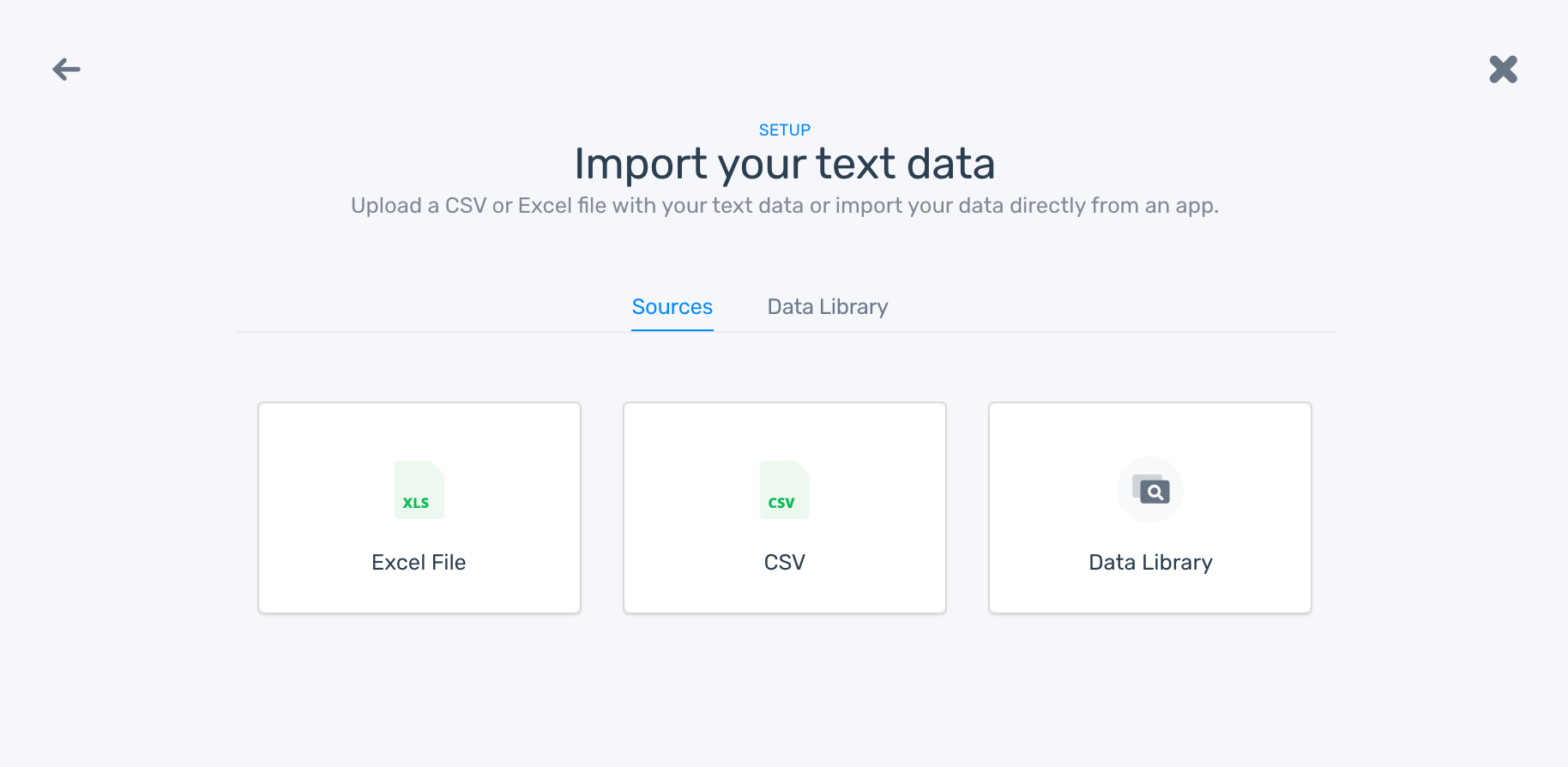

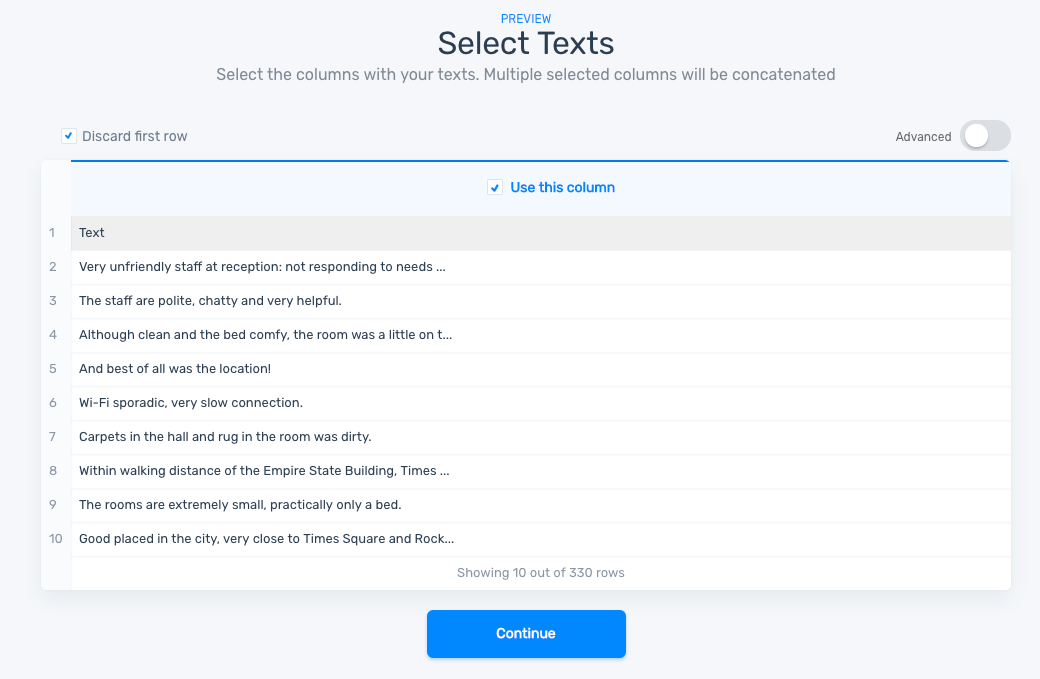

3. Import Data

Next, we need to import the data that we’ll use for training the classifier.

Once we’ve uploaded the file, a preview of the data will be shown on the screen. Let’s click Continue:

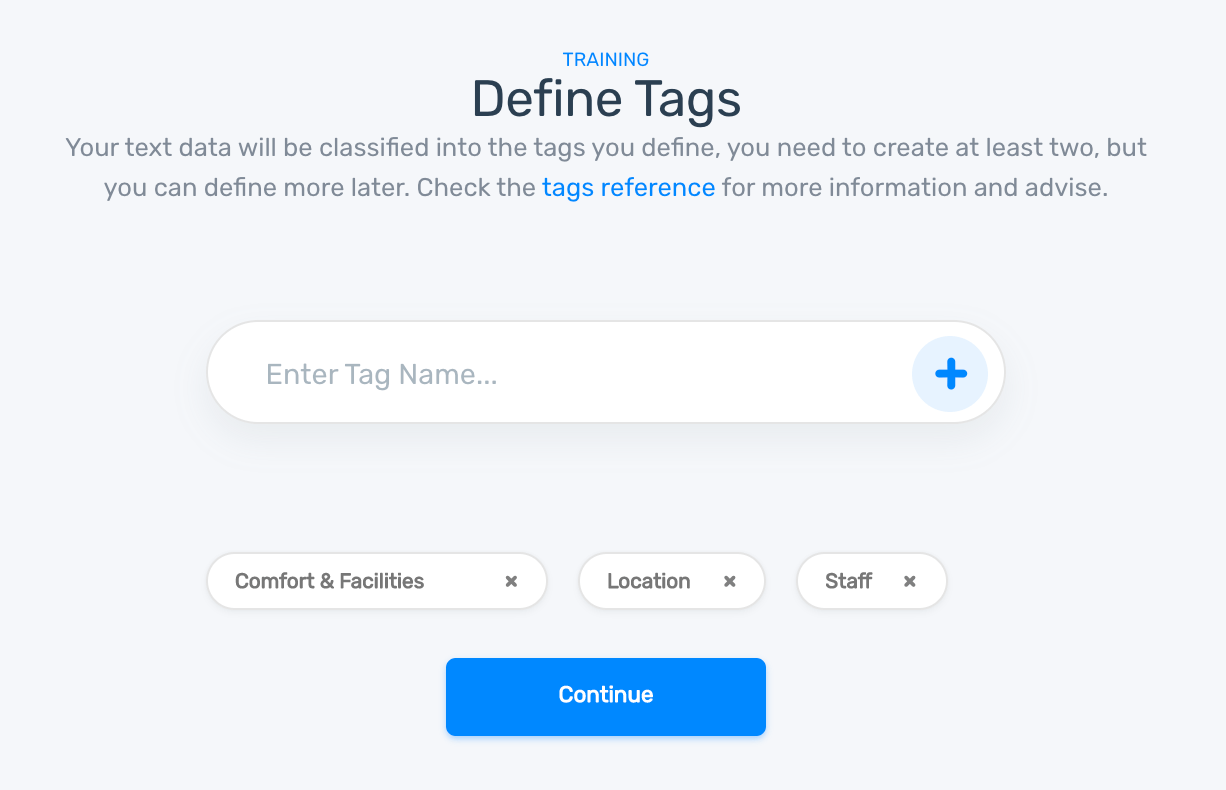

4. Define Tags

Now, it's time to define the tags that our classifier will use. Let’s work with tags like Location, Comfort & Facilities, and Staff:

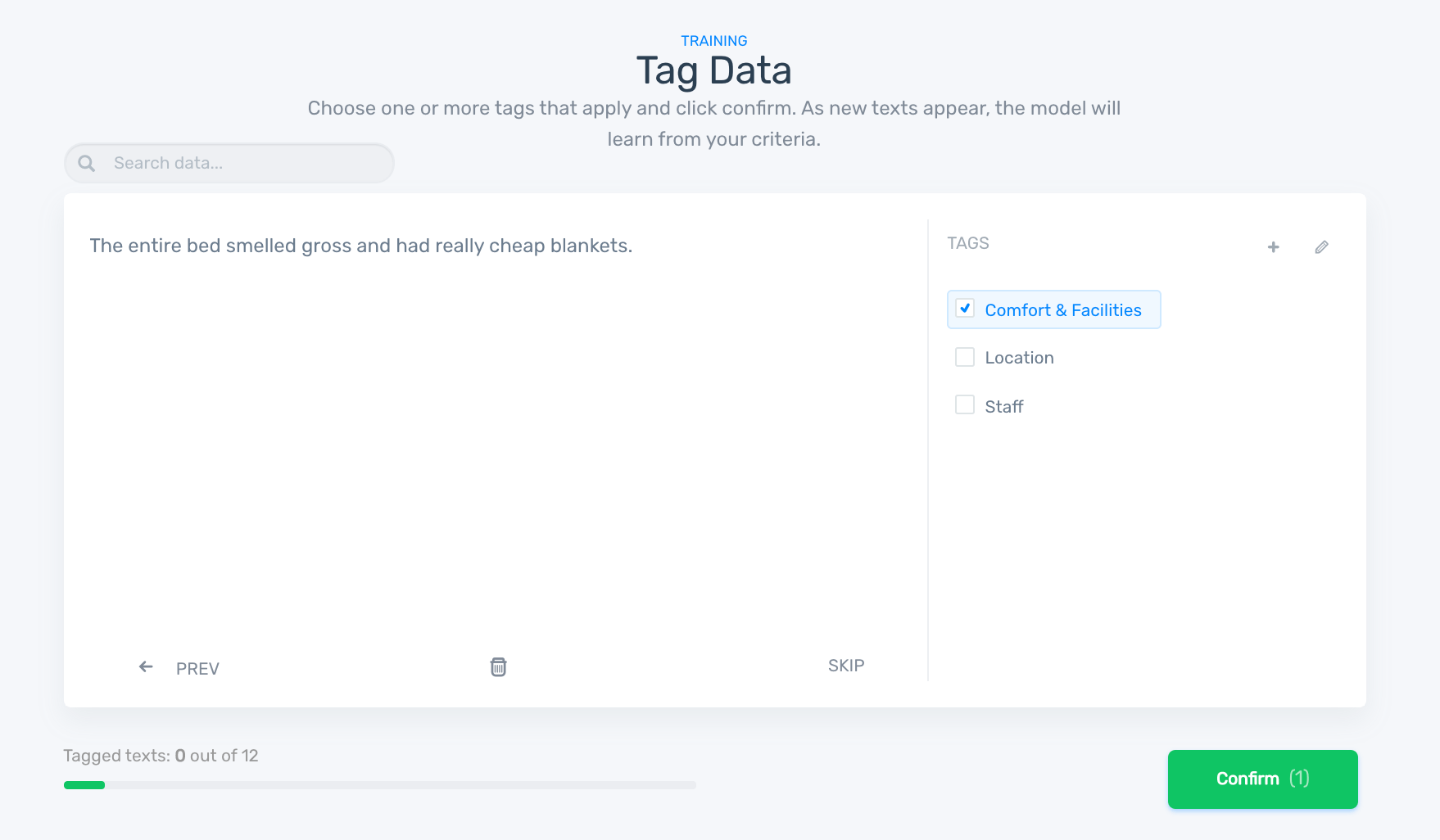

5. Tag Data

Next, we’ll need to tag the data with the appropriate categories to start training the classifier. This will help the Naive Bayes classifier learn that for a particular text, you expect a particular set of tags as output.

Once you have finished taking care of your training data, you will have to name your classifier. Type some descriptive name in the textbox and click Finish.

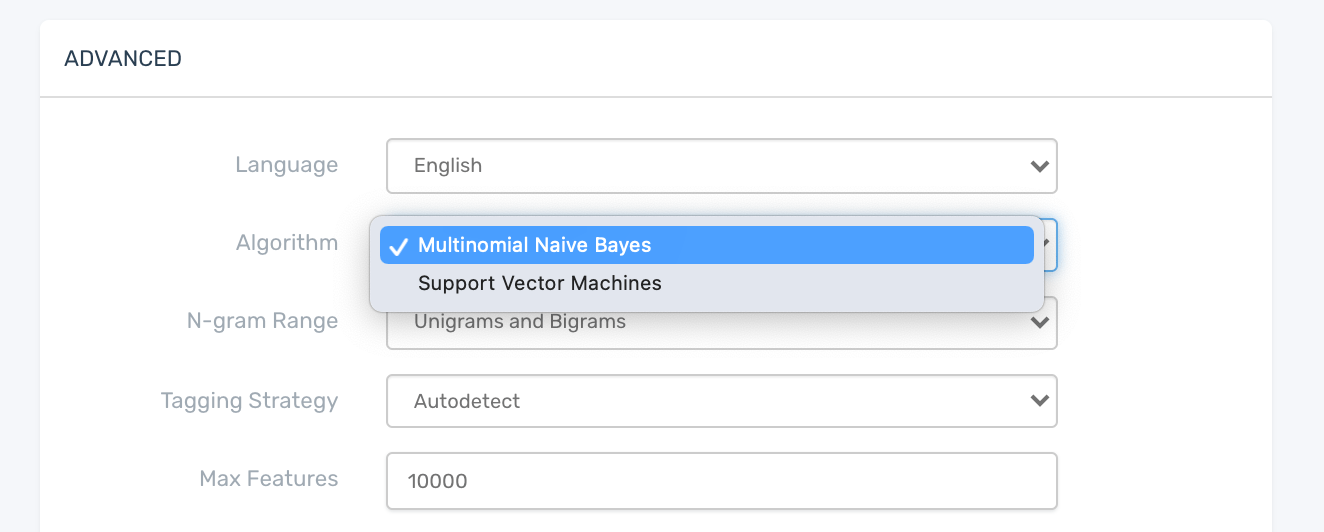

6. Set Naive Bayes Algorithm

Since MonkeyLearn uses another classification algorithm by default (SVM), you will have to change your classifier’s advanced settings at this point. Go to your classifier’s settings page by clicking the settings icon in the top right corner of the screen.

Choose Multinomial Naive Bayes in the Algorithm dropdown menu and click Save. Your classifier will retrain automatically. That’s it. You have just configured your classifier to use the MNB algorithm for a text classification task.

7. Test Model

Now you are ready to give it a try. Go to Run and try it out. Here’s an MNB text classification example from a hotel review:

If the results are not as good as you expected, don’t worry! This might mean you need to upload more training data to your model. Go to Build > Data, upload more samples, tag them, and try the model again until the results you get are satisfactory.

Final Words

Using Naive Bayes text classifiers might be a really good idea, especially if there’s not much training data available and computational resources are scarce. Results are usually competitive if features are well engineered.

This is where MonkeyLearn jumps in. Creating text classifiers and carefully engineering features to improve the results of your models is really simple with MonkeyLearn. It won’t take long for you to get great new insights from your data.

Why don’t you try it out? Sign up for MonkeyLearn for free or schedule a demo with one of our experts.