Learn What Data Cleaning Is and How To Do It

Nowadays, it’s data that makes the world go around. So, to make smart business decisions and improve your bottom line, you need a solid data strategy, efficient analysis tools, and reliable data.

The emphasis here is on the word reliable. Unreliable data will cost you both time and money. The question is, how can you strengthen the accuracy of your data?

The answer lies in implementing the right data cleaning processes and tools.

Cleaning your data is not the most glamorous of processes; it can be tedious and time-consuming. However, getting it right will save you from bigger troubles further down the line.

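Here’s what we’ll cover in this post:

- What Is Data Cleaning?

- Data Cleaning vs Data Wrangling

- The Importance of Data Cleaning

- Where To Start With Data Cleaning

- 6 Data Cleaning Steps To Follow

- Takeaways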

Let’s go.

What Is Data Cleaning?

Data cleaning, or data cleansing, is the exercise of removing or correcting inaccurate information within a data set. This could mean dealing with missing data, spelling mistakes, and duplicates, to name a few issues. If bad information like this is not properly addressed before you analyze your data, it can undermine your results.

This might mean you have to redo your analysis, which is a time-consuming and costly process. You might miss out on useful insights which would allow you to improve your customer experience. Worse still, you could make important decisions based on erroneous information which can then lead to negative outcomes for your business.

Data cleaning, therefore, is an integral part of data preprocessing.

There are many different techniques for getting your data clean. Which ones you follow will depend on your data and what you hope to get out of it. There are also a number of data cleaning tools that can help you through this process.

Data Cleaning vs Data Wrangling

It’s important to note that data cleaning and data wrangling are separate elements of your data preprocessing stage and should not be confused.

Data cleaning focuses on fixing inaccuracies within your data set. Data wrangling, on the other hand, is concerned with converting the data’s format into one that can be accepted and processed by a machine learning model.

Once you have cleaned your data up following the steps we’ll go through below, only then will you be ready to start data wrangling.

The Importance of Data Cleaning

As data in the world (and its influence) increases at exponential rates, so too does the cost of bad data. An IBM study in 2016 estimated that bad data costs US businesses a total of $3.1 trillion a year. That is a big strain on the economy and on individual businesses.

Accurate, relevant customer or business data gives you insights that let you make the right marketing decisions, which ultimately lead to more business. Working with bad data slows down your sales and, in turn, your profits.

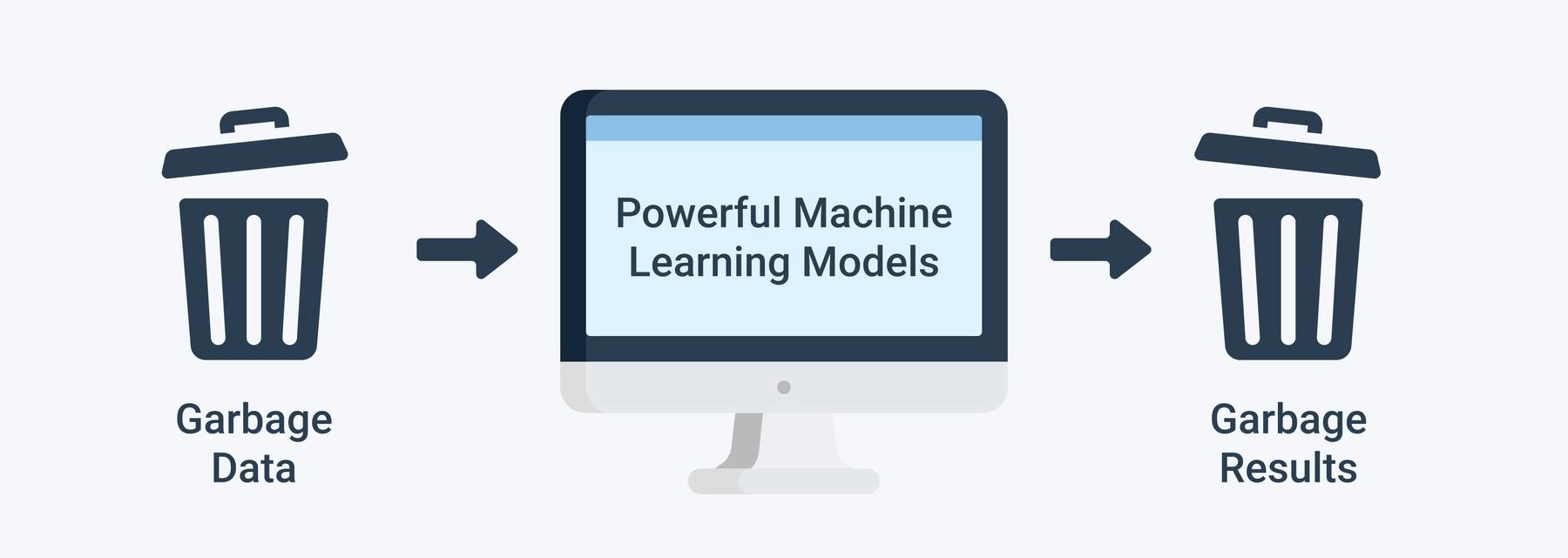

Data cleaning is particularly important if you want to carry out data mining or analyze your data using machine learning tools. You can have the best machine learning algorithms in the world, but if you put dirty data in, your results will reflect that.

The ‘garbage in, garbage out’ concept is apt here.

Where To Start With Data Cleaning

The next logical question is: how do you clean data? But before talking about data cleaning, it’s worth noting the important role of solid standardization and data-entry guidelines and practices.

With these practices in place, you’ll prevent a great deal of bad data from being created in the first place. The amount of data cleaning needed will reduce significantly and you’ll be able to save money.

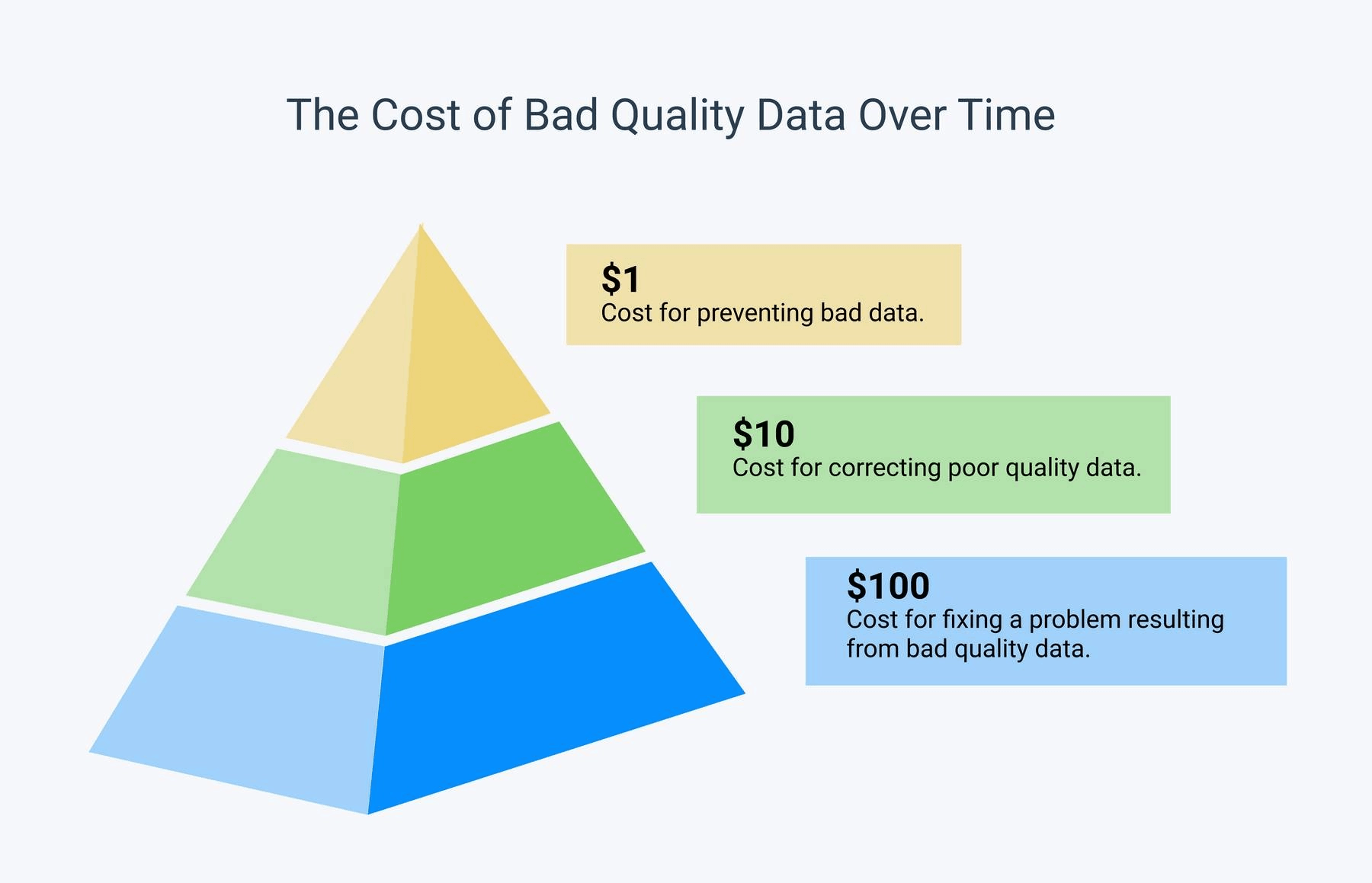

The visual above gives you an idea of the kinds of savings you are looking at. As you can see, it’ll cost you much less if you put clean data in at the source. If you don’t achieve that, data cleaning will still prevent the bigger losses that working with dirty data can cause.

You should also go into your data collection process with a plan. This means setting clear objectives about what kind of data you want to collect, why, and what you hope to achieve out of the subsequent analysis.

You might be able to limit the amount of bad data that you collect, depending on where it’s coming from. However, some dirty data will inevitably slip through the net. To clean it, you’ll need the following steps.

6 Data Cleaning Steps To Follow

- Remove irrelevant data

- Resolve any duplicates

- Correct structural errors

- Deal with missing fields

- Zone in on any data outliers

- Validate your data

1. Remove Irrelevant Data

Before doing anything, you need to make sure that the data you’re including really needs to be there. For example, if you are collecting data on women between the ages of 18 and 35, there is no reason for a 60-year-old man to appear in your data set.

Removing these irrelevant records will cut out a lot of distraction and noise from your data. This will make the analysis stage much more efficient, letting you cut to the insights faster.
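As a rough illustration of this step, here is a minimal Python sketch that filters a handful of made-up survey records down to the target group from the example above. The field names (`name`, `gender`, `age`) and the `is_relevant` helper are assumptions for illustration, not part of any particular tool.

```python
# Hypothetical survey records; the field names are assumptions for illustration.
records = [
    {"name": "Ana", "gender": "female", "age": 24},
    {"name": "Bob", "gender": "male", "age": 60},
    {"name": "Cleo", "gender": "female", "age": 35},
    {"name": "Dee", "gender": "female", "age": 41},
]


def is_relevant(record):
    """Return True if the record belongs to the study's target group."""
    return record["gender"] == "female" and 18 <= record["age"] <= 35


# Keep only the records that match the target group.
relevant = [r for r in records if is_relevant(r)]
print([r["name"] for r in relevant])  # ['Ana', 'Cleo']
```

Writing the rule as a small function keeps the inclusion criteria in one place, so you can adjust them without touching the rest of your cleaning code.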

2. Resolve Any Duplicates

Duplicates within data can come as a result of merging information from different sources, or as a result of manual entry errors.

Duplicate information in your data can give you misleading insights. It can also result in bad customer experiences. For example, if you have a customer’s email listed twice, you run the risk of sending them duplicate communications, which might irritate them.

For these reasons, it’s crucial to carefully scour your data to remove any duplicate entries.
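One simple way to approach this is sketched below, assuming that two entries count as duplicates when their email addresses match after trimming whitespace and ignoring case. That matching rule, the sample customers, and the `dedupe_by_email` helper are all assumptions; your own data may need a different key.

```python
# Hypothetical customer list; the second entry duplicates the first.
customers = [
    {"name": "Ana Diaz", "email": "ana@example.com"},
    {"name": "Ana Díaz", "email": " Ana@Example.com "},
    {"name": "Bob Lee", "email": "bob@example.com"},
]


def dedupe_by_email(rows):
    """Keep the first row seen for each normalized email address."""
    seen = set()
    unique = []
    for row in rows:
        # Normalize so that casing and stray spaces do not hide a duplicate.
        key = row["email"].strip().lower()
        if key not in seen:
            seen.add(key)
            unique.append(row)
    return unique


unique_customers = dedupe_by_email(customers)
print(len(unique_customers))  # 2
```

This sketch keeps the first occurrence and discards later ones. If the duplicates carry different details, you may prefer to review them by hand or merge them instead.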

3. Correct Structural Errors

Structural errors in your data include things like typos, incorrect capitalization, and any spelling issues or formatting that might confuse a machine learning model (such as spelling out a date rather than using a number).

Following on from our earlier example of a duplicate email, if you have errors in the spelling of your customers’ emails, you run the risk of not contacting them at all and missing a sale.

You’ll also need to standardize formatting such as the date and time format or units of measurement. That way they can be grouped correctly and analyzed much faster.

If you don’t fix these issues, you might not reach your customers. You could also create new, unnecessary categories, which weaken any efforts you make to gain insights and confuse your machine learning models.
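To make this concrete, here is a small sketch that standardizes dates and category labels using only Python’s standard library. The list of accepted date formats is an assumption (including the choice to read `03/11/2021` as day/month/year), and both helper functions are hypothetical names for illustration.

```python
from datetime import datetime

# Formats we assume might appear in the raw data; extend the tuple as needed.
DATE_FORMATS = ("%Y-%m-%d", "%d/%m/%Y", "%B %d, %Y")


def standardize_date(text):
    """Return the date as YYYY-MM-DD, or None if no known format matches."""
    for fmt in DATE_FORMATS:
        try:
            return datetime.strptime(text.strip(), fmt).date().isoformat()
        except ValueError:
            continue
    return None


def standardize_category(text):
    """Collapse extra whitespace and unify capitalization so labels group together."""
    return " ".join(text.split()).title()


print(standardize_date("November 3, 2021"))   # 2021-11-03
print(standardize_date("03/11/2021"))         # 2021-11-03
print(standardize_category("  new   york "))  # New York
```

Returning `None` for unrecognized dates, rather than guessing, lets you route those values to the missing-data step instead of silently storing a wrong date.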

4. Deal With Missing Fields

Missing fields often happen when forms are filled out incorrectly. If left as is, you can get skewed results from your data and miss out on valuable information.

You can do one of two things when it comes to missing fields. Your first option is to fill them in. If the field is important for your analysis, you should try to find and enter the missing data. If you don’t know what the missing data is or you are unable to find it, you should fill the field with a placeholder, such as a zero or the word “missing”.

Your second option is to remove the observations that have this missing value. This would only be suitable if this section of your data is not crucial for your analysis.
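Both options can be sketched in a few lines of Python. The sample rows, the rule that blank strings count as missing, and the helper names below are assumptions for illustration.

```python
# Hypothetical form submissions with gaps.
rows = [
    {"email": "ana@example.com", "city": "Lima", "age": 31},
    {"email": "bob@example.com", "city": "", "age": None},
    {"email": None, "city": "Quito", "age": 45},
]


def is_missing(value):
    """Treat None and blank strings as missing."""
    return value is None or (isinstance(value, str) and value.strip() == "")


def fill_missing(row, placeholder="missing"):
    """Option 1: keep the row and mark every missing field with a placeholder."""
    return {k: (placeholder if is_missing(v) else v) for k, v in row.items()}


def drop_if_missing(rows, required):
    """Option 2: drop rows that lack any of the required fields."""
    return [r for r in rows if not any(is_missing(r[f]) for f in required)]


filled = [fill_missing(r) for r in rows]
kept = drop_if_missing(rows, required=["email"])

print(filled[1]["city"], filled[1]["age"])  # missing missing
print(len(kept))                            # 2
```

An explicit placeholder keeps gaps visible in later steps. Be careful with a zero in numeric fields, since calculations such as averages will treat it as a real value.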

5. Zone In on Data Outliers

An outlier is a minority data point that varies greatly from the majority of the other data.

Outliers are not necessarily incorrect, but as they sit much further from the other data points, they may give an inaccurate representation of your data if you take them into account.

An example of this would be if you took the average age of first-time homeowners. Including someone who bought their first home aged 80 would pull your mean upward and make it unrepresentative of the typical buyer.

To decide whether you keep or remove outliers, you’ll need to assess each individual case to see if it will add to your analysis or take away from it.
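To help you spot candidates for review, here is a minimal sketch that flags outliers with the interquartile range (IQR) rule, one common rule of thumb among several. The sample ages are made up, and the 1.5 multiplier is a conventional default rather than a requirement.

```python
import statistics

# Hypothetical ages of first-time home buyers.
ages = [27, 29, 31, 32, 33, 34, 35, 36, 38, 80]


def iqr_outliers(values, k=1.5):
    """Flag values outside [Q1 - k*IQR, Q3 + k*IQR]."""
    q1, _, q3 = statistics.quantiles(values, n=4)
    spread = q3 - q1
    low, high = q1 - k * spread, q3 + k * spread
    return [v for v in values if v < low or v > high]


outliers = iqr_outliers(ages)
typical = [a for a in ages if a not in outliers]

print(outliers)                             # [80]
print(statistics.mean(ages))                # 37.5
print(round(statistics.mean(typical), 1))   # 32.8
```

The code only flags the value. Whether the 80-year-old buyer stays in your data set is still a judgment you make case by case.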

6. Validate Your Data

To wrap up your cleaning process, you’ll need to validate your data. This involves taking a holistic view of your data and double-checking that everything is how it should be.

Some questions to ask throughout this process include whether you have enough data to work with, whether it’s ready to be put through a machine learning model, and whether it is clean enough to meet your needs.
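Some of these checks can be automated. The sketch below is one possible set of checks, assuming each row has an `email` and an `age` field. The minimum row count, the deliberately loose email pattern, and the age range are all assumptions that you would replace with your own rules.

```python
import re

# Deliberately loose pattern: something@something.something, no spaces.
EMAIL_PATTERN = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")


def validate(rows, min_rows=3):
    """Return a list of problems found; an empty list means every check passed."""
    problems = []
    if len(rows) < min_rows:
        problems.append(f"only {len(rows)} rows, expected at least {min_rows}")
    emails = [r["email"] for r in rows]
    if len(set(emails)) != len(emails):
        problems.append("duplicate emails remain")
    for i, r in enumerate(rows):
        if not EMAIL_PATTERN.match(r["email"]):
            problems.append(f"row {i}: malformed email {r['email']!r}")
        if not 18 <= r["age"] <= 120:
            problems.append(f"row {i}: age {r['age']} out of range")
    return problems


clean = [
    {"email": "ana@example.com", "age": 31},
    {"email": "bob@example.com", "age": 45},
    {"email": "cleo@example.com", "age": 29},
]
print(validate(clean))  # []
```

Returning a list of problems instead of stopping at the first one gives you a full picture of what still needs fixing before analysis.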

These are just 6 steps you can take to clean your data. You can find more data cleaning techniques here for a more in-depth approach.

Takeaways

Cleaning your data is a time-consuming yet essential task that, once completed, allows you to move on to more exciting tasks, like the analysis of your data.

That said, analyzing data can bring its own set of challenges. The principal one is that data sets are often huge. Going down the route of manual analysis can lead to inefficiency and subjective results.

This is where AI can help. MonkeyLearn offers a host of tools that help you analyze reams of data in a matter of seconds.

Sign up for a free demo today to learn how you can get the most out of your squeaky clean data.

Inés Roldós

November 3rd, 2021