What is Data Cleansing? Data Cleaning and Preparation Explained

Data analysis is a cornerstone of any future-forward business. Whether parsing customer feedback for insight or sorting through customer data for demographic trends, the results of your analysis influence your business’s path forward.

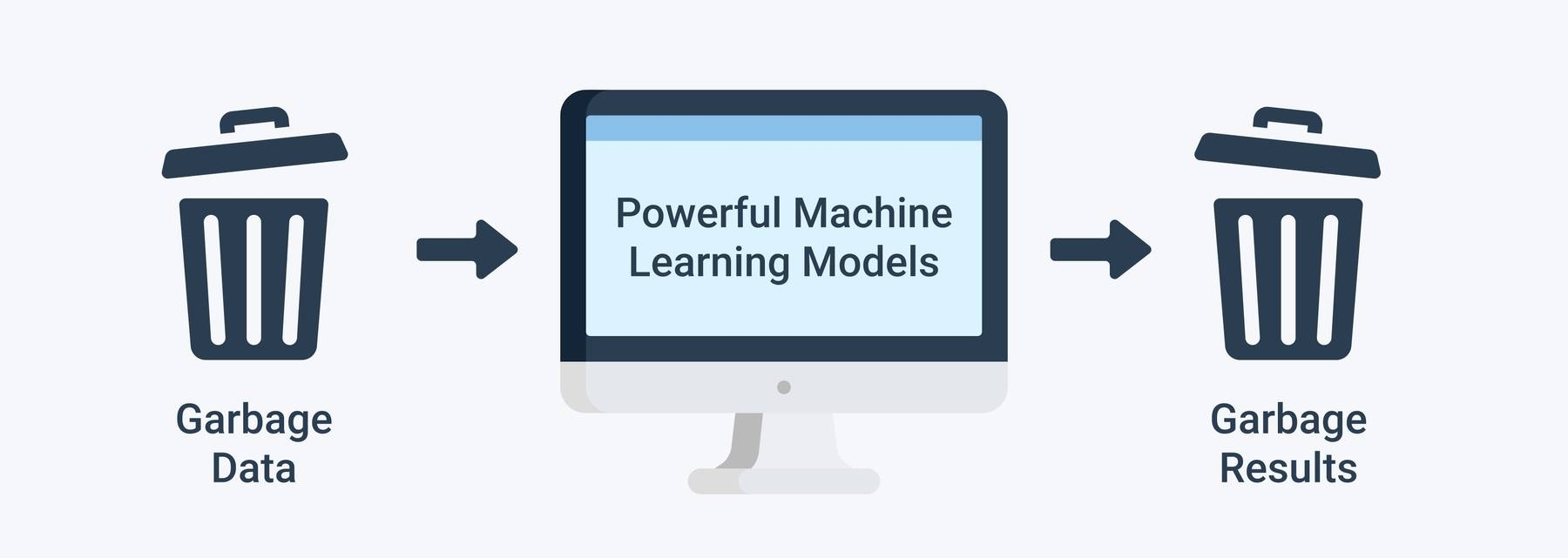

But using bad data spells disaster. It doesn’t matter how advanced your analytics software is or how well thought out your approach – bad data in means bad data out.

This guide to data cleansing will outline the value of proper data cleaning and data preparation. We’ll introduce and outline the importance and thought behind proper data prep, then walk you through some essential first steps to set you on your way.

Sloppy data can easily be overlooked, but doing so can sink the smartest business model.

What is Data Cleansing?

Data cleansing, also known as data cleaning, is the process of exploring, filtering, and correcting data so that it can be analyzed accurately.

Data cleansing can sound intimidating: the name suggests eliminating data, and more data means better results, right? Well, that’s not necessarily true (more on that later). It’s also worth knowing that data cleansing is often called data cleaning and is closely related to data preparation. All three refer to the act of arranging data for more accurate analysis.

Typically, the tasks required to properly cleanse, clean, and prepare data have fallen to technically proficient employees – usually data scientists. While they are qualified to perform far more technical tasks and solve complex problems, data cleansing and overall data prep have historically taken up most of their time.

This is significant because data scientists are highly skilled, specialized employees (often with higher salaries) who could be spending their time on process innovation or strategic planning for the future of your data analysis divisions. Instead, they are too often shackled to the essential but monotonous and exhausting task of data cleaning.

Freeing up technical employees to make the most of their capabilities by democratizing your data cleaning process can be the catalyst for a revamped and supercharged data division. Let’s jump into the returns this can bring by taking a look at the bottom-line importance of great data prep.

Data Cleansing Benefits

Understanding the benefits of excellent data cleansing is as easy as understanding a simple phrase, which MonkeyLearn CEO Raúl Garreta stands by:

“If your downstream process receives trash as input data, probably the quality of the results will also be of bad quality.” -Raúl Garreta

As we here at MonkeyLearn are in the business of providing cutting-edge machine learning algorithms, this hits home. All our work on getting data analysis right, and equipping it to deliver the most useful results, is useless if bad data is fed in.

Garbage in, garbage out.

Let’s state that in tech terms:

Your refined downstream processes, produced using advanced (and often expensive) software, are only as good as their upstream input.

So are we saying that you can make all the right choices, map your data process, and collect the right kind of customer feedback only to be stymied by some misspelled words or missorted numbers? In a word – yes.

A misplaced decimal, some wrongly grouped dates, or even something as simple as inconsistent address formats can throw a wrench in even the most impressive of data analysis systems.

Tossing bad data into good software is akin to filling up a sports car with diesel fuel – it’s a misunderstanding of the machine by the user, not the car's fault. Understanding the kind of input that your software likes, and what will trip it up, is just as, if not more, important than the time spent interpreting its results.

Inaccurate results can have a cascading failure effect. If strategic decision-makers move on bad data, it can send your entire company in the wrong direction for significant amounts of time. This could waste untold dollars and yield negative results.

In cutthroat modern industries where consumers make choices between brands based on tiny differentiating factors, this can make the difference between staying above water or not.

Easy to say, harder to do: Here are the four most impactful steps to follow for successful data cleaning.

Data Cleansing Steps

The data cleansing process writ large is the sum of four subprocesses, each with a specialized purpose, that add up to ‘clean data’: data exploration, data filtering, data cleaning, and data validation. Here are some best practices to keep in mind for each.

1. Data Exploration

To explore your data is to understand it.

Raúl also chips in here, explaining:

“Data exploration just means taking a look at the data to get an overview of what the data is about. It also allows you to start understanding what needs to be cleaned, ie: what records need to be removed, reformatted, etc.”

Just as you need to understand your analytics software to know the proper data to feed it, you also need to understand the makeup of your data before cleaning it. The two processes go hand in hand – they just approach the problem from opposite angles.

Within the data, there could be dates, times, sentiment-oriented overall responses, feature-specific feedback, or even something else entirely.

Whatever there is, making a note of what needs to be cleaned or what needs to be removed completely will set you up successfully for the rest of your data cleaning process.
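As a rough illustration of what that first look can involve, here is a minimal Python sketch that profiles a handful of records. The sample records, field names, and the `profile` helper are all invented for this example, not part of any particular tool.

```python
from collections import Counter

# Hypothetical customer feedback records (invented for illustration).
records = [
    {"date": "2021-10-01", "rating": "5", "comment": "Great support"},
    {"date": "10/02/2021", "rating": "", "comment": "great support"},
    {"date": "2021-10-03", "rating": "4", "comment": None},
]

def profile(rows):
    """Summarize each field: missing count, distinct count, and sample values."""
    summary = {}
    fields = sorted({key for row in rows for key in row})
    for field in fields:
        values = [row.get(field) for row in rows]
        # Treat None and empty strings as missing.
        present = [v for v in values if v not in (None, "")]
        summary[field] = {
            "missing": len(values) - len(present),
            "distinct": len(set(present)),
            "examples": [v for v, _ in Counter(present).most_common(2)],
        }
    return summary

report = profile(records)
for field, stats in report.items():
    print(field, stats)
```

Even on three rows, a summary like this surfaces things worth noting for later steps: a missing rating, a missing comment, two comments that differ only in capitalization, and dates in two different formats.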

2. Data Filtering

This is the simplest step, but messing it up can trip the whole system.

Now that you know your data, you have to filter out what you don’t need. This requires some creative thinking.

Your data scientists (who ideally design the approach, then hand the work off) along with all of your data analytics strategic minds must take a look at your data exploration results and make some critical decisions.

First of all, what information is relevant for analysis? This is often a difficult decision as data that seems extraneous can later turn out to be absolutely essential – take our data visualization example, for instance.

But some things are easier to cut, like duplicate numbers, specific names, and other personal information.

Your second task is to come up with every type of scenario and format that this kind of extraneous information might come in. Duplicate numbers can come in numeric ‘11 times’ form or written out ‘eleven times’ form. Names can be personal ‘George’ or can refer to businesses ‘George Foreman’ – the latter could be linked to useful data and would need to be grouped.

Your strategists will have to keep this kind of trickery in mind while creating their data filtering approach.
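To make this more concrete, here is a small Python sketch of one possible filtering pass. It assumes, purely for illustration, that names and emails are not needed for analysis, and it uses a deliberately tiny word-to-digit table; the records, field names, and helper functions are hypothetical.

```python
import re

# Hypothetical raw records; field names and values are invented for illustration.
raw = [
    {"id": 1, "name": "George", "email": "g@example.com", "comment": "Ordered eleven times"},
    {"id": 2, "name": "Ana", "email": "a@example.com", "comment": "Ordered 11 times"},
    {"id": 2, "name": "Ana", "email": "a@example.com", "comment": "Ordered 11 times"},
]

# Assumption: these fields are personal information that the analysis does not need.
PERSONAL_FIELDS = {"name", "email"}
# Deliberately tiny lookup; a real project would need a fuller mapping.
NUMBER_WORDS = {"ten": "10", "eleven": "11", "twelve": "12"}

def normalize_numbers(text):
    """Rewrite spelled-out numbers as digits so both forms compare equal."""
    pattern = r"\b(" + "|".join(NUMBER_WORDS) + r")\b"
    return re.sub(
        pattern,
        lambda m: NUMBER_WORDS[m.group(1).lower()],
        text,
        flags=re.IGNORECASE,
    )

def filter_records(rows):
    """Drop personal fields, normalize number formats, then remove exact duplicates."""
    seen, kept = set(), []
    for row in rows:
        slim = {k: v for k, v in row.items() if k not in PERSONAL_FIELDS}
        slim["comment"] = normalize_numbers(slim["comment"])
        key = tuple(sorted(slim.items()))
        if key not in seen:
            seen.add(key)
            kept.append(slim)
    return kept

filtered = filter_records(raw)
print(filtered)
```

The record identifier is kept on purpose: without it, two different customers who left the same comment would be collapsed into one row, which is exactly the kind of over-filtering to watch out for.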

3. Data Cleaning

Wait, isn't this all Data Cleaning? Well yes, but this subprocess refers specifically to all of the tried-and-true, classic data cleaning techniques. These have been around for some time and many businesses are familiar with them from times before digital data, when they would have to correct them by hand, on paper (gasp, the horror).

These are:

- Standardize capitalization

- Fix errors (like typos)

- Fix structural errors (group paragraphs, edit run-ons, etc.)

- Translate language (to business standard)

- Handle missing values. You have two options: (1) remove observations that have missing values, or (2) manually input the missing values.

And finally, added for the digital age:

- Fix character encoding (see NLP practices)

For a deeper dive on all of the above, you can hop to our awesome data cleaning step guide which outlines and explains the science and practice of implementing the above steps.
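A few of the techniques listed above can be sketched in plain Python. This is only one possible approach under simple assumptions: the rows and field names are invented, missing values are handled with option (1), and the encoding fix covers only the common case of UTF-8 text that was mistakenly decoded as Latin-1.

```python
import unicodedata

# Hypothetical rows; the first author name is UTF-8 text mis-decoded as Latin-1.
rows = [
    {"author": "Ra\u00c3\u00bal", "city": "  new   YORK ", "rating": "5"},
    {"author": "Rachel", "city": "Boston", "rating": ""},
]

def fix_mojibake(text):
    """Undo a UTF-8-read-as-Latin-1 mix-up; return the text unchanged if that fails."""
    try:
        return text.encode("latin-1").decode("utf-8")
    except (UnicodeEncodeError, UnicodeDecodeError):
        return text

def clean_text(text):
    """Repair encoding, normalize Unicode to NFC, and collapse stray whitespace."""
    text = fix_mojibake(text)
    text = unicodedata.normalize("NFC", text)
    return " ".join(text.split())

def clean_rows(rows, required=("rating",)):
    """Drop rows missing a required value, then standardize the remaining fields."""
    cleaned = []
    for row in rows:
        if any(not row.get(field) for field in required):
            continue  # option (1): remove observations with missing values
        cleaned.append({
            "author": clean_text(row["author"]),
            "city": clean_text(row["city"]).title(),  # standardize capitalization
            "rating": int(row["rating"]),
        })
    return cleaned

cleaned = clean_rows(rows)
print(cleaned)
```

Dropping rows is the simpler of the two missing-value options, but it shrinks the dataset; whether that trade-off is acceptable depends on how much data you can afford to lose.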

4. Data Validation

Ideally, with that done, you’ll be left with clean data. But, as any scientist worth their salt would insist, you then have to check your results.

Characteristics of Clean Data

With your cleansed data in hand, you want to check for the following criteria. Your data should be:

1. Accurate

Decimals should be in the right place, dates correctly interpreted, and addresses correctly transcribed. Failure on any of these could lead to anomalies and growing inaccuracy as the data moves down the line.

2. Complete

As detailed in Data Filtering above, ensuring that you have all the necessary data is mission-critical – keep as much as you can and make sure you are eliminating only extraneous or bad data.

3. Consistent

Machines are learning but aren’t perfect. The entire industry of data preparation relies on your teams understanding this and preparing your data with your specific software in mind. If the input is consistent with what your software can handle, you will be successful.

4. Traceable

Down the line, once your clean data is analyzed, you are going to get results. Your team will need to look at these results and ask where certain data was gathered from. They need to be able to drill down into specific demographics, functionalities, and other friction points. So, when the time comes, you’ll need the source information for all your data points linked and ready.

If your data meets all of the above standards, congratulations – you’ve done a bang-up job. Now it’s time for the fun stuff.
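For a sense of how these four criteria might be checked automatically, here is a minimal Python sketch. The schema is an assumption made for this example: a `date` in YYYY-MM-DD form, an integer `rating` on a 1 to 5 scale, and a `source` field that records where each data point came from.

```python
from datetime import date

# Hypothetical schema: every record needs these fields to be usable.
REQUIRED = ("date", "rating", "source")

def validate(row):
    """Return a list of problems found in one record (empty list means it passed)."""
    problems = []

    # Complete and traceable: required fields, including the source, must be present.
    for field in REQUIRED:
        if row.get(field) in (None, ""):
            problems.append(f"missing {field}")

    # Consistent: dates are expected in a single format (YYYY-MM-DD).
    value = row.get("date")
    if value:
        try:
            date.fromisoformat(value)
        except (TypeError, ValueError):
            problems.append("date is not YYYY-MM-DD")

    # Accurate: ratings must be whole numbers on the assumed 1-5 scale.
    rating = row.get("rating")
    if rating not in (None, "") and not (isinstance(rating, int) and 1 <= rating <= 5):
        problems.append("rating outside 1-5")

    return problems

good = {"date": "2021-10-14", "rating": 4, "source": "survey-17"}
bad = {"date": "14/10/2021", "rating": 40, "source": ""}

print(validate(good))
print(validate(bad))
```

Returning a list of problems rather than a simple pass or fail makes it easier to see which cleaning step needs another pass.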

Takeaways

We’ve talked about the value of understanding both your software and your data, so you can clean your data, leaving it in a software-friendly format.

The end goal is to leverage the data for results, using software. Fortunately for you, the world of data analytics software is advancing at a breakneck pace, adding power and accessibility by the day.

Whether it's adding a first data analysis platform to your repertoire, or supplementing and supercharging your existing suite, MonkeyLearn’s industry-leading data analysis and customer feedback AI is programmed to be an easily integrated, data-friendly, powerful data solution.

Equipped to wrangle and transform any size data archive, and with the power and performance to deliver detailed, insightful, and visually appealing results, the MonkeyLearn Studio can take your analytics to the front of the pack, regardless of your business size or model.

Sign up for a demo with one of our analytics experts today to discover custom-fit analysis tools made for your data, or just jump right in and discover the results for yourself with a free trial.

Rachel Wolff

October 14th, 2021