Survey Responses: How & What to Ask to Get The Responses You Need

According to data from SurveyMonkey, two thirds of customers say they complete more than half of the surveys they receive.

While that may not seem so bad, it doesn't mean that all of these survey responses are equal.

Some of the survey responses you get might be irrelevant, so instead of the 1000 survey responses you thought you had, you might only be left with half that are actually decent.

What your business does to hone its survey approach and encourage more honest, complete responses will separate you from the pack and pay dividends.

This guide will walk you through the various types of survey responses, the most effective methods and approaches to survey distribution, then dial in on the analysis you perform on your survey responses.

Let's jump in - click through to the section most useful to you.

- What Is a Survey Response?

- Types of Survey Responses

- Survey Response Channels

- How to Present Your Survey Responses

- Takeaways

What Is a Survey Response?

A survey response is a customer's submission of a survey, regardless of whether they completed the whole survey or not. The data sent will be quantitative or qualitative, depending on the questions you asked.

So, what is a 'good' survey response rate?

Your survey response rate is the number of customers who actually take the time to respond to one or more questions and submit the survey, relative to the number of customers asked.

To calculate your survey response rate, you can use the following formula:

Survey response rate = (Number of respondents who submitted a survey / Total number of recipients) * 100

For example, if you send out 1000 surveys and get 200 in return, that's a response rate of 20%.
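The formula above can be sketched as a small Python helper. The function and parameter names are illustrative assumptions, not part of any survey tool:

```python
def response_rate(responses_received: int, surveys_sent: int) -> float:
    """Return the survey response rate as a percentage."""
    if surveys_sent <= 0:
        raise ValueError("surveys_sent must be a positive number")
    if not 0 <= responses_received <= surveys_sent:
        raise ValueError("responses_received must be between 0 and surveys_sent")
    # Multiply first so whole-number percentages stay exact.
    return 100 * responses_received / surveys_sent


# The example from the text: 1000 surveys sent, 200 returned.
print(f"{response_rate(200, 1000):.1f}%")  # prints "20.0%"
```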

The higher your response rate, the better - a higher survey response rate leaves less room for non-response bias, and therefore gives you more confidence in your results.

According to a recent consumer study, across the board response rates from 5% to 30% are considered typical, and any response rate over 50% is considered excellent.

Now, if your business falls below your industry average, it can be demoralizing. And you may decide that you need to change your survey feedback strategy - maybe even buy survey responses.

While a seemingly easier route, buying survey responses of any significant size (thousands or more) can be prohibitively expensive. The more questions you ask, the more it will cost.

Now, if you are a smaller company, buying surveys online could be the option for you.

But, if you are a company with a large customer base, you might need to explore options beyond paying someone to help you reach your target audience and get a good number of completed survey responses.

Here are a few factors that may affect response rates and the types of response you get:

- Demographics (age being a huge one for online surveys)

- Geographic location (relative cultural values)

- Incentives (what's the reward, what kind of customer would want it)

- Industry type (B2B surveys usually get a higher response than B2C)

- Survey type (short, long, open-ended or close-ended)

Next, we are going to lay out the types of questions to ask and which channels might work best for you, so that you can get the survey responses you need - both in terms of quality and quantity.

Types of Survey Responses

To master your survey response game, it's helpful to have a firm grasp on its components.

Survey responses come in two forms: quantitative and qualitative.

As we explain both types of response, we'll list the question formats used to collect them.

We'll begin with quantitative feedback.

Quantitative surveys ask for 'quantifiable' responses, aka responses that translate directly into numbers, such as a rating on a one-to-ten scale or a yes/no answer to see if they were satisfied with a product feature. Let's explain each method.

Quantitative Survey Methods

- Multiple choice

- Yes/no binaries

- Rating scales

- Likert scales

- Further varieties

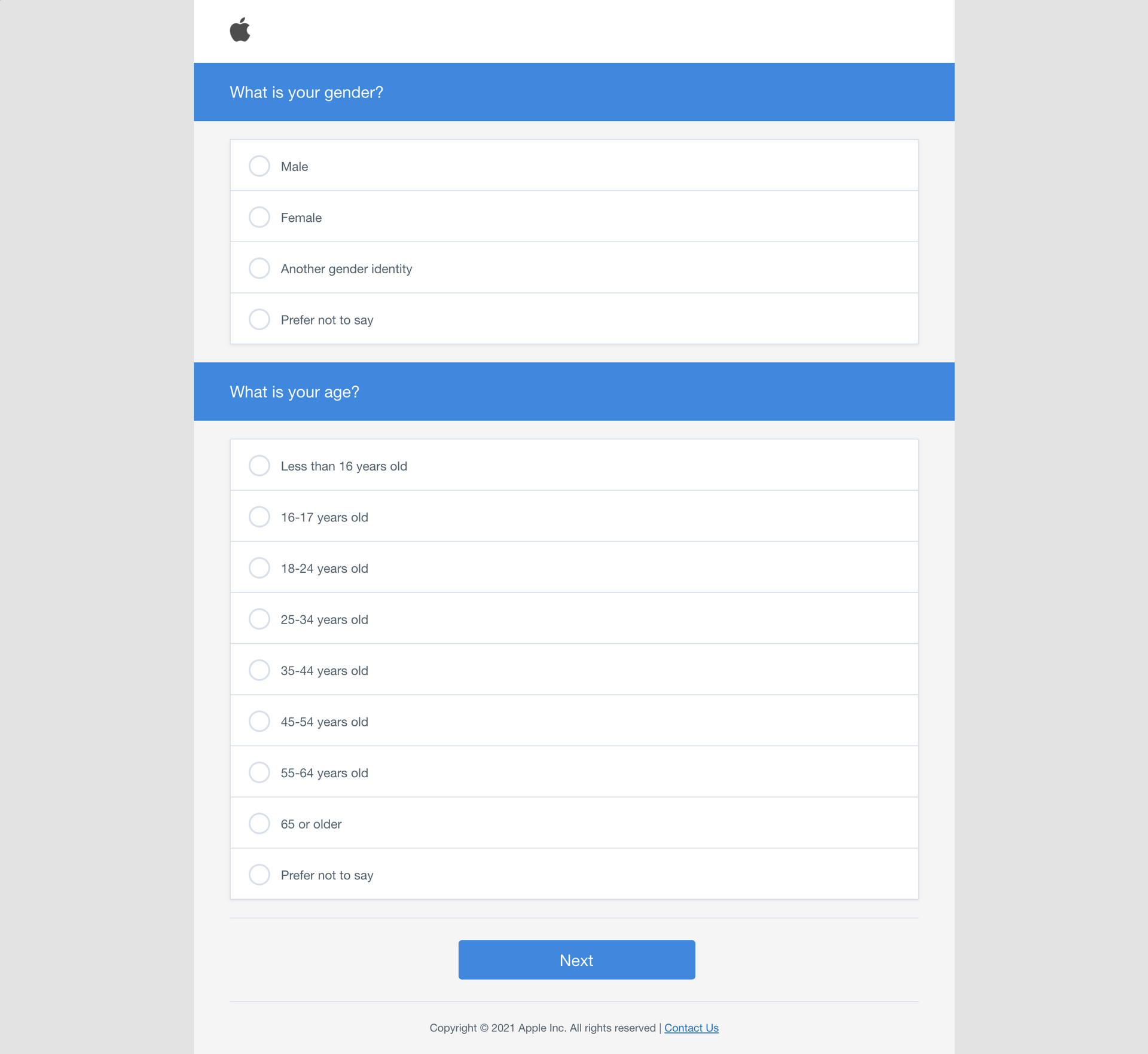

1. Multiple choice

Multiple choice surveys are similar to those tests you took in high school. They offer a limited number of options (for example, 1-4 or A-D) that the customer can choose from. Because the options are limited, your customer base's responses are easy to tally and thus innately quantitative.

2. Yes/no binaries

This modality is surveying at its simplest. An example of a yes/no binary is:

"Did you enjoy your experience purchasing from our company today?"

Followed by a simple 'radio button' (a circle that can be checked) for either yes or no.

Because of this survey's simplicity, it draws results that are easy to understand, plot and visualize.

3. Rating Scales

The Net Promoter Score question (one of the most popular customer satisfaction survey metrics) uses a rating scale. It asks:

"On a scale from 0-10, how likely are you to recommend this company to a friend or colleague?"

This survey method draws a bit more nuance, and the tabulation of your responses will undoubtedly uncover data trends that will prompt further investigation.
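This guide doesn't spell out how the score is tabulated. The standard NPS calculation subtracts the percentage of detractors (ratings of 0-6) from the percentage of promoters (ratings of 9-10). Here is a minimal sketch, with a hypothetical function name and made-up sample ratings:

```python
def net_promoter_score(ratings: list[int]) -> float:
    """Return the NPS (-100 to 100) for a list of 0-10 ratings."""
    if not ratings:
        raise ValueError("at least one rating is required")
    if any(not 0 <= rating <= 10 for rating in ratings):
        raise ValueError("ratings must be between 0 and 10")
    promoters = sum(1 for rating in ratings if rating >= 9)
    detractors = sum(1 for rating in ratings if rating <= 6)
    # Passives (7-8) count toward the total but not toward either group.
    return 100 * (promoters - detractors) / len(ratings)


# Hypothetical sample: 5 promoters, 2 passives, 3 detractors.
sample_ratings = [10, 9, 9, 8, 7, 6, 3, 10, 5, 9]
print(net_promoter_score(sample_ratings))  # prints 20.0
```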

4. Likert Scales

Likert scale survey questions seek to not only engage passionate customers, who are already likely to answer any other question method, but draw out sentiment and reactions from the less-polarized by asking direct questions.

They ask, for instance, "Do you agree or disagree with (x-product related issue)?" and offer multiple choice bubbles to draw out responses. They look like this:

By using the psychology of direct questions followed by clearly marked answer parameters, Likert scales are able to increase response rates from more neutral customers, whose views are just as important as those of their more loyal counterparts.

5. Further varieties

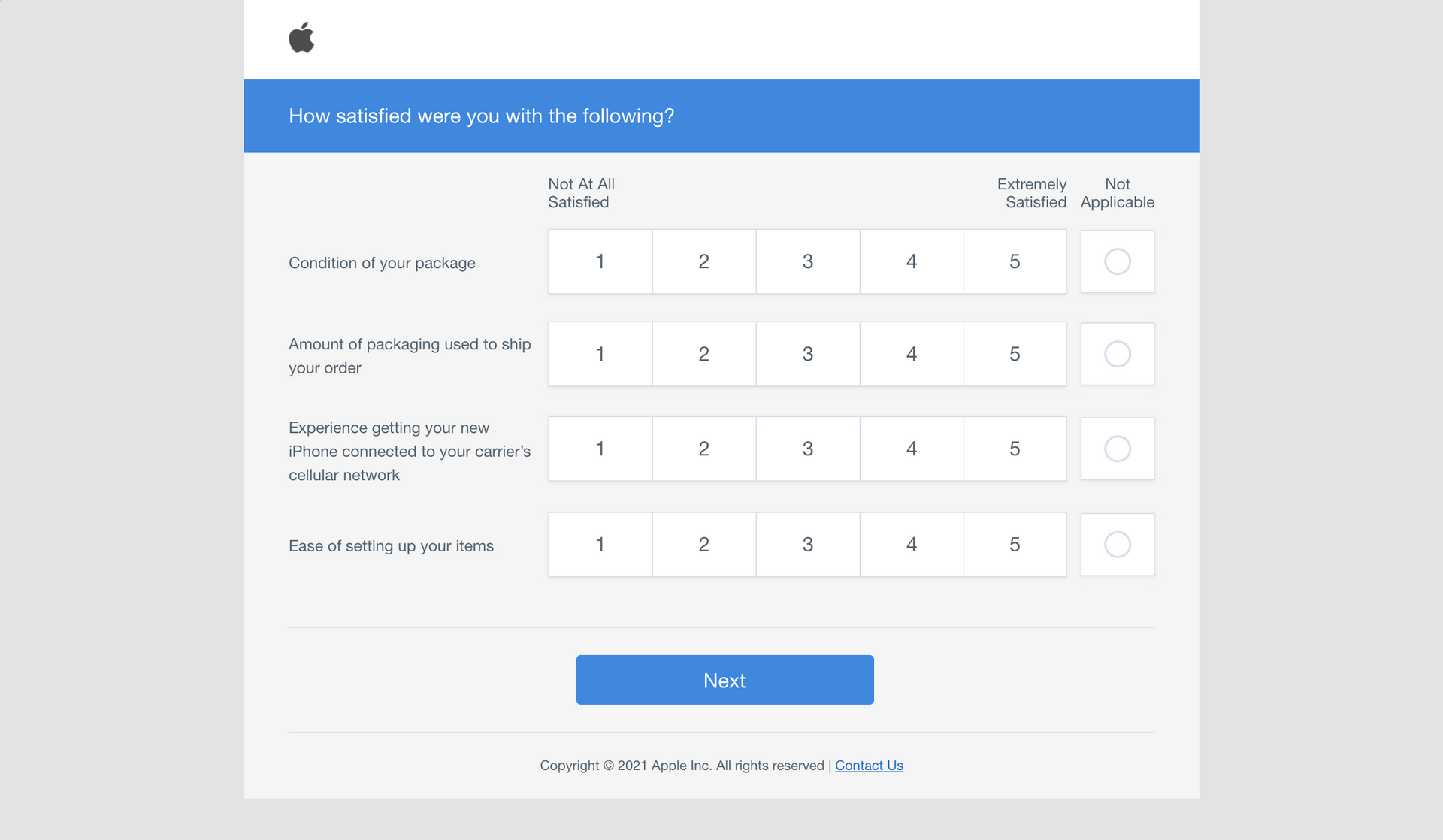

Further varieties exist, though they really amount to variations on the four basic structures above. Likert scales, for instance, can be combined into one big question page, called a survey matrix, so the customer can more easily track their relative sentiment as they go through the list of questions.

Here's what this looks like:

These other, perhaps more exotic, varieties are worth exploring because they could lend hidden advantages.

Take the survey matrix - on its face it doesn't seem like it would yield any different results than a series of Likert questions asked in a row. But, by stacking questions, surveyors actually found that survey matrices drew higher response rates because they appear shorter than a series of questions, which makes respondents less apprehensive.

It's key to remember that quantitative data is of best use when it is aggregated and displayed using data visualization techniques to discern overall trends and areas in need of attention.
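As a rough illustration of aggregating quantitative responses, the sketch below tallies answers to a single Likert question and prints a simple text bar chart. The answer labels and sample responses are made up for the example:

```python
from collections import Counter

# Assumed five-point agreement scale, lowest to highest.
LIKERT_OPTIONS = ["Strongly disagree", "Disagree", "Neutral", "Agree", "Strongly agree"]


def tally(responses: list[str]) -> dict[str, float]:
    """Return the percentage of responses for each Likert option."""
    if not responses:
        raise ValueError("at least one response is required")
    counts = Counter(responses)
    return {
        option: round(100 * counts[option] / len(responses), 1)
        for option in LIKERT_OPTIONS
    }


# Hypothetical answers to one question.
sample = ["Agree", "Agree", "Neutral", "Strongly agree",
          "Disagree", "Agree", "Neutral", "Strongly agree"]

for option, share in tally(sample).items():
    bar = "#" * round(share / 5)  # one '#' per 5 percentage points
    print(f"{option:<18} {share:>5}% {bar}")
```

The same tally can be fed into a spreadsheet or charting tool; the point is simply to look at the distribution rather than at individual answers.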

Crucially, any quantitative-heavy survey approach misses out on deeper insight if it doesn't add an open-ended question to elicit the qualitative feedback behind the customer's quantitative rating - it'll tell you the why behind their score.

Qualitative Survey Methods

Qualitative survey data is mainly drawn from additional comment boxes that follow quantitative survey questions.

These are called open-ended questions, and the data behind them has kept many a customer experience team in the know about their customers and ahead of the competition.

Here is what a filled out open-ended response box looks like, following a close-ended question like:

"On a scale of 1-10 how would you rate our product?"

You can see that the open-ended response lends additional data that gives context. This business now knows, for this review at least, that the customer's score came as a result of a support experience, not an innate product flaw.

When the customer explains, in written text, the reasons behind their answers they are opening a pathway to bettering your business. In essence, they are telling you what they want, and businesses who listen to the voices of their customers (VoC) consistently and significantly outperform those who don't in the long term.

Survey Response Channels - Where to Send Your Surveys

So, armed with a great set of questions that elicit both qualitative and quantitative data, you're finally ready to distribute your surveys.

But how? And why should you choose a given method?

Well, that requires a little introspection. Take into consideration the size, industry, and modus operandi or brand voice of your particular business. Then, pick an option from those we are about to lay out. The top survey response channels are:

- Website-Integrated Surveys

- Email Surveys

- Social Media Surveys

- SMS Text or Chat-Based Surveys

- Telephone surveys (CATI)

- In-App Surveys

1. Website-integrated Surveys

Website-integrated channels include pop-ups, post-purchase landing pages, and response options embedded in individual pages.

Ideally, these will pleasantly catch customers' eyes at the proper times - you want to leverage surveys when they have just interacted with your business, for example when they've made a purchase online. Offering a discount as an incentive to first time site-visitors remains a hyper-popular and successful option.

However, beware of respondents who rush through surveys, giving untruthful responses, just to get the reward. Many campaigns have been compromised by 'satisficers' who do the bare minimum to claim an incentive.

2. Email Surveys

Thought of by some as a classic technique, email survey campaigns are the ancestors of all online campaigns. And they still work. In fact, a truly personalized email that makes the customer feel cared for can yield better response rates than other methods.

Everyone who does business online has at least one email address. Now, we all know how annoying unsolicited and repeated emails can be - the goal of any successful email survey response campaign should be to be the opposite of this.

Keeping the survey brief, offering an incentive to customers kind enough to spend their time responding, or both is recommended. Being honest about how long the survey typically takes and sending a thank-you follow-up are also good practice.

3. Social Media Surveys

With new apps vying for control of the attention spans of both the young and the old at all times, it can be a challenge to find the right social media for your brand. Demographic research, above all else, might be the key to helping you solve this puzzle.

Understanding where your customer base is located is key to getting the most survey responses. For example, Facebook tends to skew towards an older demographic than TikTok. The same is true for regionality - some popular Chinese apps have a very small American user base. Know who is buying your stuff, and you'll be led to the right platform. Then it's just an issue of getting people's attention, aka classic advertising.

4. SMS Text or Chat-Based Surveys

Political campaigns and savvy viral marketers have already keyed into this distribution method. Leveraging surveys directly through SMS or any other kind of instant messaging app can be extremely successful.

With people spending more and more time on their more and more powerful phones, a short, succinct text for feedback, especially one leading with a ratings scale (1-5) response can prompt customers to shoot back a quick reply. Then, you can follow up with the option for them to type out some open-ended feedback - you've got your foot in the door.

5. Telephone Surveys (CATI)

Telephone surveys, aka CATI (computer-assisted telephone interviewing) surveys, can fill a gap in the spectrum of survey response options.

Directly calling customers might come off as intrusive and invasive to some, but if the call comes at the right time for the right demographic (frankly, older customers), it can demonstrate investment and genuine interest in customers' happiness. A human voice on the phone comes across as an old-school, respectful move to some - as long as the call doesn't come during work or mealtimes.

6. In-App Surveys

In-app surveys, much like website surveys, can elicit far higher response rates if leveraged correctly. The effect is even stronger if the survey is simple and requires only the press of a button on a phone.

Users will see the survey, instinctively react, and give you the responses you crave. Invested and emotional users might even go on to type out brief but crucial open-ended feedback.

How to Present Your Survey Responses

Presenting or 'reporting' your survey responses refers to what you actually do with the data once you have completed your surveying to your satisfaction.

By another name this is the survey data analysis and data visualization phase, where numbers and text are crunched into clues that can inform product and company roadmap.

Now we again split apart - but not for good - our two types of feedback, as they require different approaches.

For your close-ended data, meaning your basic quantitative responses, you can simply tabulate the responses in basic software such as Excel.

But, and this is a crucial but, don't let entries become untethered from any open-ended follow ups that came with them.
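One way to keep scores and comments tethered is to store each submission as a single record. The sketch below is only an illustration; the `SurveyResponse` class, its field names, and the sample data are all hypothetical:

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class SurveyResponse:
    """One submission: the rating and its open-ended follow-up stay together."""
    respondent_id: str
    score: int                     # close-ended answer, e.g. a 0-10 rating
    comment: Optional[str] = None  # open-ended follow-up, if the customer left one


# Hypothetical sample data.
responses = [
    SurveyResponse("r-001", 9, "Fast delivery, great packaging."),
    SurveyResponse("r-002", 4, "Product is fine, but support took days to reply."),
    SurveyResponse("r-003", 7),
]

# Because each score travels with its comment, you can pull up the 'why'
# behind every low rating.
low_scores_with_comments = [r for r in responses if r.score <= 6 and r.comment]
for response in low_scores_with_comments:
    print(f"{response.respondent_id}: {response.score} - {response.comment}")
```

In a spreadsheet, the equivalent is keeping the rating and the comment in the same row rather than on separate sheets.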

Your open-ended data, likely text based, needs a more deft touch. As we hinted at before, it's lucky we live in a time when artificial intelligence and machine learning have begun to harness techniques like sentiment analysis, unlocking the ability to analyze open-ended data with no coding needed, at scale, and in real time.

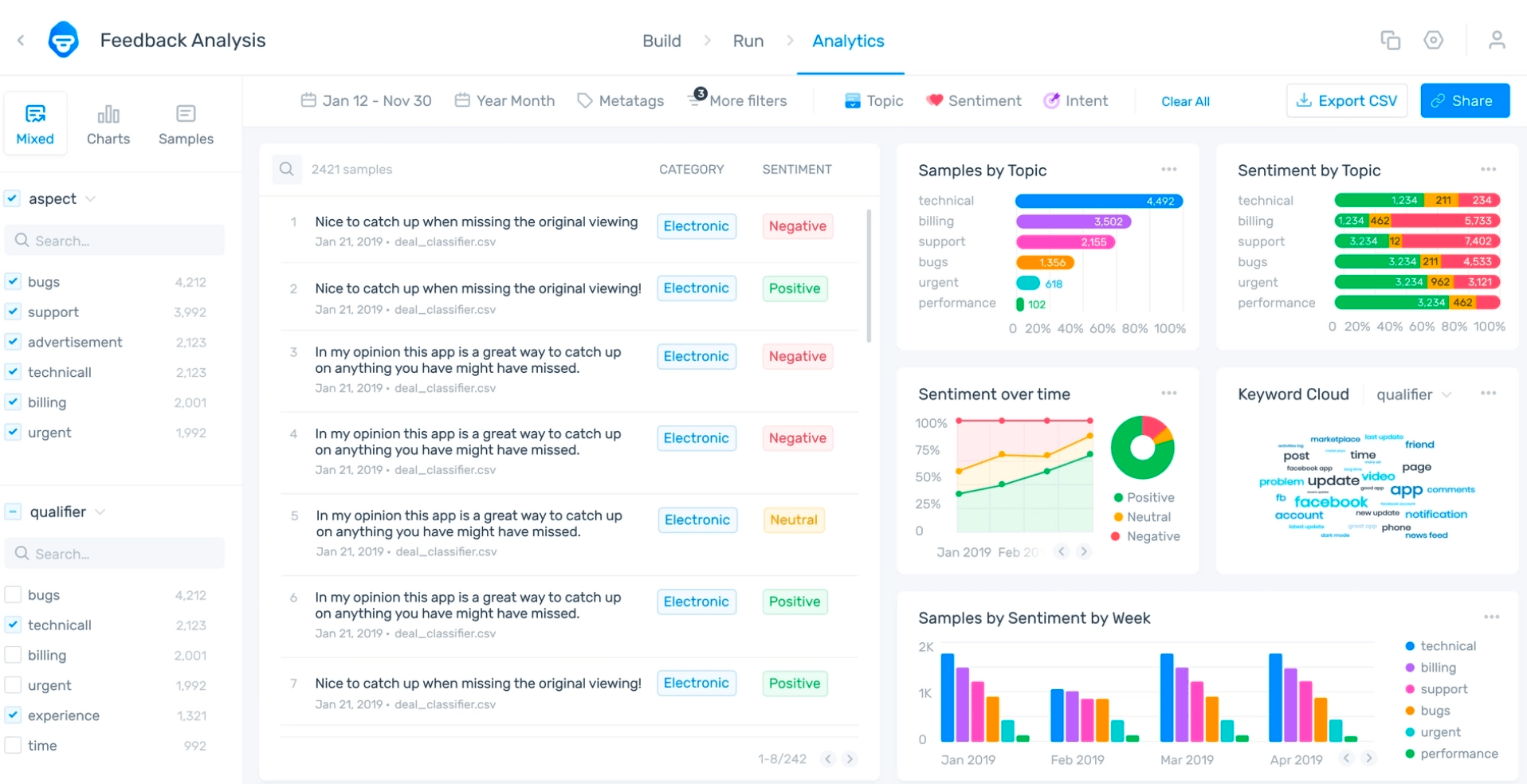

Powerful machine learning software, like MonkeyLearn, is able to dig into swathes of text data and derive visualized, obvious insights from it, much like the quantitative survey methods above.

MonkeyLearn's no-code templates use keyword extraction, topic analysis, and sentiment analysis to chop up massive archives of written customer feedback and transform it into quantified, beautifully visualized graphics so your teams can make use of the deeper trends behind the initial numbers.
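To give a feel for what keyword extraction does, here is a deliberately naive word-count sketch using only Python's standard library. It is a toy stand-in, not how MonkeyLearn's models work, and the stop-word list and sample comments are made up:

```python
import re
from collections import Counter

# Tiny, hand-picked stop-word list for this example only.
STOP_WORDS = {"the", "a", "an", "and", "but", "was", "is", "it", "to", "of", "for"}


def top_keywords(comments: list[str], n: int = 3) -> list[tuple[str, int]]:
    """Return the n most frequent non-stop-words across all comments."""
    words = []
    for comment in comments:
        for word in re.findall(r"[a-z']+", comment.lower()):
            if word not in STOP_WORDS:
                words.append(word)
    return Counter(words).most_common(n)


# Hypothetical open-ended responses.
comments = [
    "Support took days to reply",
    "Great product but support was slow",
    "Checkout was easy and support was friendly",
]

print(top_keywords(comments, n=1))  # prints [('support', 3)]
```

Even this crude count surfaces 'support' as the recurring theme; dedicated tools add topic grouping and sentiment on top of that.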

You can upload large survey datasets, let the software learn what it is looking at (customizing if desired), and derive beautiful results instantly.

Get survey results that look like this!

From there, the power of your customers is truly at your fingertips. With an accurate read on their voices, your teams are best equipped to make the decisions necessary for continued success.

Takeaways

At the end of the day, survey responses, the channels by which they are delivered, and the nuances of finding success with them are changing constantly.

The one constant, however, is the need to understand one's own customer base and where to reach them. And the best way to do this is to make sure you are collecting open-ended feedback so you can see the 'why' behind the customer satisfaction metrics in your surveys.

MonkeyLearn can help with that - offering analytics solutions for every kind of feedback and a complete data visualization studio.

Get a free demo consultation with one of our data experts today, or dive right in and customize our no-code options yourself with a free trial.

Rachel Wolff

March 7th, 2022