Data Preparation: Basics & Techniques

Your data is only as good as its accuracy.

Imagine you’ve painstakingly collected customer feedback over a large geographic area, which should allow you to target key demographics at specific locations. All this work will be rendered nearly useless if the data is improperly prepared.

Sadly, bad data preparation can actually create additional work for already overburdened businesses.

Before you can put your data to use, you have to make sure it’s ‘clean’ and organized. Otherwise, you will end up with inaccurate results and misleading insights, and nobody needs those.

For this reason, proper data preparation, aka data prep or data wrangling, is essential to any business looking for actionable results from its data.

Additionally, effective data preparation can organize your bank of data in a manner that machine learning systems can understand, meaning you can get the most out of these powerful tools.

Let’s get into the how and why.

What Is Data Preparation?

Data preparation is the sorting, cleaning, and formatting of raw data so that it can be better used in business intelligence, analytics, and machine learning applications.

Data comes in many formats, but for the purpose of this guide we’re going to focus on data preparation for the two most common types of data: numeric and textual.

Numeric data preparation is a common form of data standardization. A good example would be customer data in which percentages are submitted both as percentages (70%, 95%) and as decimal amounts (.7, .95). Smart data prep, much like a smart mathematician, would be able to tell that these numbers express the same thing, and would standardize them to one format.
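
As a minimal sketch of that kind of standardization, the snippet below converts both styles to a single 0 to 1 fraction. The function name `to_fraction` and the rule for bare numbers above 1 are assumptions made for illustration, not part of any particular tool.

```python
def to_fraction(raw):
    """Convert a value such as '70%' or '.7' to a fraction between 0 and 1."""
    text = raw.strip()
    if text.endswith("%"):
        # '70%' -> 0.7
        return float(text[:-1]) / 100
    value = float(text)
    # Assumption: a bare number above 1 (e.g. '70') was meant as a percentage.
    return value / 100 if value > 1 else value


submitted = ["70%", "95%", ".7", ".95"]
standardized = [to_fraction(value) for value in submitted]
print(standardized)
```

Note that a bare `1` stays ambiguous (1% or 100%), so a real pipeline would need a rule agreed on with whoever collects the data.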

Textual data preparation addresses a number of grammatical and context-specific text inconsistencies so that large archives of text can be better tabulated and mined for useful insights.

Text tends to be noisy as sentences, and the words they are made up of, vary with language, context and format (an email vs a chat log vs an online review). So, when preparing our text data, it is useful to ‘clean’ our text by removing repetitive words and standardizing meaning.

For example, if you receive a text input of:

‘My vacuum’s battery died earlier than I expected this Saturday morning’

A very basic text preparation algorithm would omit the unnecessary and repetitive words leaving you with:

‘Vacuum’s [subject] died [active verb] earlier [problem] Saturday morning [time]’

This stripped-down sentence format is now much easier to tabulate analytically. An AI analysis bot could now, for instance, easily calculate the number of text responses complaining of ‘early’, ‘battery’-related failures. Furthermore, the response could be routed to a relevant support team that can help the customer.
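
A rough sketch of this idea is a stop-word filter followed by a simple count. The stop-word list and the sample responses below are made up for illustration; production systems typically use much longer lists and proper part-of-speech tagging.

```python
import re

# Hypothetical, deliberately tiny stop-word list for this example only.
STOP_WORDS = {"my", "i", "than", "this", "the", "a", "an", "expected"}


def strip_stop_words(text):
    """Lowercase the text, split it into word tokens, and drop stop words."""
    normalized = text.lower().replace("’", "'")  # treat curly apostrophes as plain ones
    tokens = re.findall(r"[a-z']+", normalized)
    return [token for token in tokens if token not in STOP_WORDS]


# Made-up sample responses.
responses = [
    "My vacuum’s battery died earlier than I expected this Saturday morning",
    "The battery stopped charging early",
    "Love the suction power",
]

cleaned = [strip_stop_words(response) for response in responses]
battery_complaints = sum("battery" in tokens for tokens in cleaned)

print(cleaned[0])           # ["vacuum's", 'battery', 'died', 'earlier', 'saturday', 'morning']
print(battery_complaints)   # 2
```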

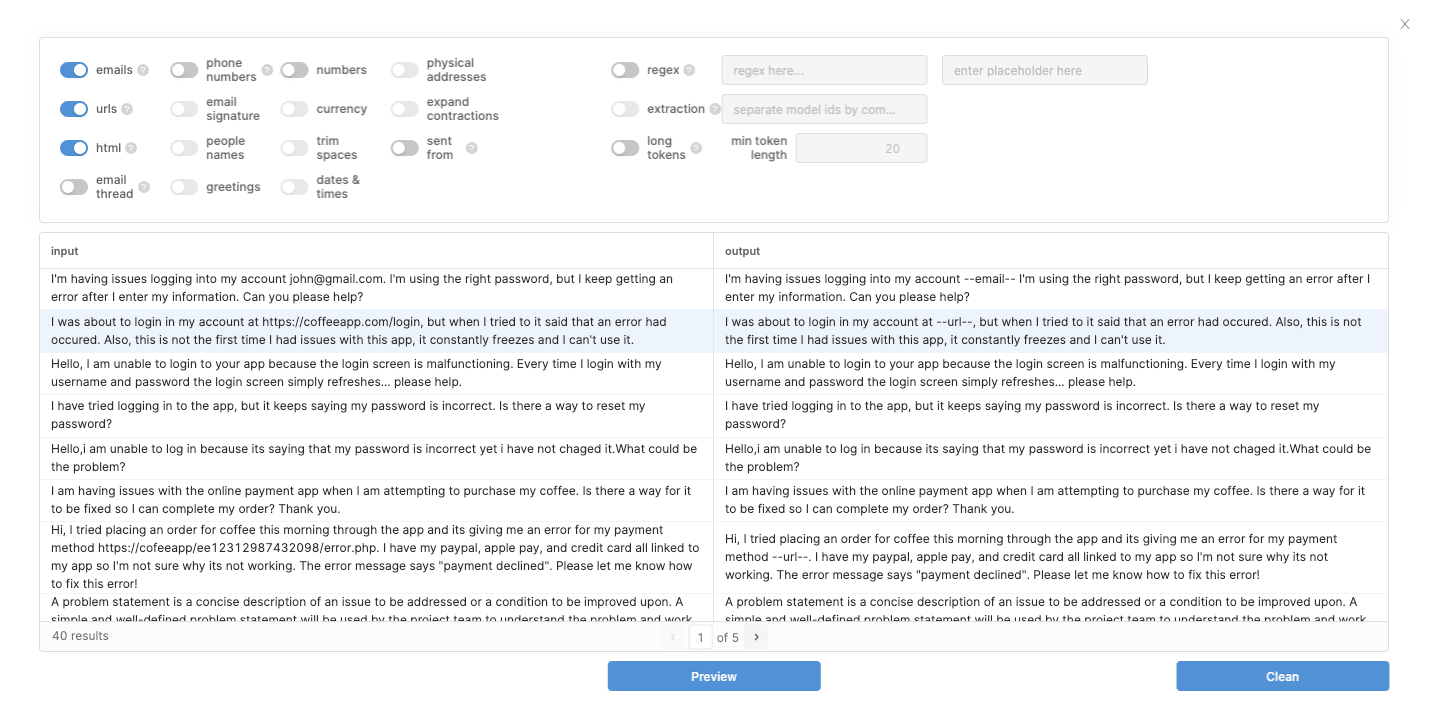

Another easy application is the removal of text noise, which, when dealing with customer service, can take the form of URLs. URLs can trip up machine analysis because each is a unique word and nearly impossible to group. So, an intelligent AI text prep bot can be trained to recognize the format of a URL and programmed to turn all instances into the simple, recognizable format of ‘--url--’.
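
A trained model is not required to see the effect. As a simplified stand-in, a regular expression can replace anything that looks like a URL with the ‘--url--’ token. The pattern below is an assumption that covers only `http://`, `https://`, and `www.` prefixes.

```python
import re

# Simplified pattern: a scheme or "www." followed by non-whitespace characters.
# It will also swallow punctuation that directly follows a URL.
URL_PATTERN = re.compile(r"(?:https?://|www\.)\S+", re.IGNORECASE)


def mask_urls(text):
    """Replace every URL-like token with the placeholder '--url--'."""
    return URL_PATTERN.sub("--url--", text)


print(mask_urls("The manual at https://example.com/manual.pdf is outdated"))
# The manual at --url-- is outdated
```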


It’s even more important for your system to use text analysis to detect user input variation. Just for starters, we want to make sure that personal information our users send us for support needs (phone numbers, email addresses) isn’t included in our larger analytics approach, both to ensure data privacy and to simplify our data.
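
One way to keep such details out of the analytics data is to mask them before storage. The sketch below uses two illustrative regular expressions; both patterns and the placeholder tokens are assumptions, and real phone and email formats vary far more than this covers.

```python
import re

# Illustrative patterns only; they will miss some formats and may over-match others.
EMAIL_PATTERN = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")
PHONE_PATTERN = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def mask_personal_info(text):
    """Replace email addresses and phone-like digit runs with placeholders."""
    text = EMAIL_PATTERN.sub("--email--", text)
    return PHONE_PATTERN.sub("--phone--", text)


message = "Call me at +1 (555) 010-9999 or mail jane.doe@example.com please"
print(mask_personal_info(message))
# Call me at --phone-- or mail --email-- please
```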

For example, customers may write their address and apartment on the same line rather than on two separate lines. If your data prep doesn’t take this into account, you’ll end up with a ton of confusing entries that will completely scramble results.

Because of its detail-oriented nature and perceived heavy manpower requirements, data prep is often seen as menial work, but its benefits are obvious.

MonkeyLearn’s founder and CEO Raúl Garreta agrees that it can be tedious:

“I mean, it's not the most interesting thing to do, but if you are a data practitioner you find the importance of doing it and, as a result, you appreciate it,” he said.

Properly prepared, clean data can make or break an analysis, and thus help or hamper entire business strategies. Let’s get into the benefits of well-prepped data.

Data Preparation Benefits

Studies have suggested that data preparation makes up a whopping 80% of the entire data analysis process.

Couple this with the growing need for effective customer analysis in the current competitive online market and you’re well on your way to understanding the importance of good data preparation. Let’s go through three specific ways that data preparation can benefit your business:

- Eliminating Dirty Data

- Future-Proofing Your Results

- Improving Cross-Team Collaboration

Eliminating Dirty Data

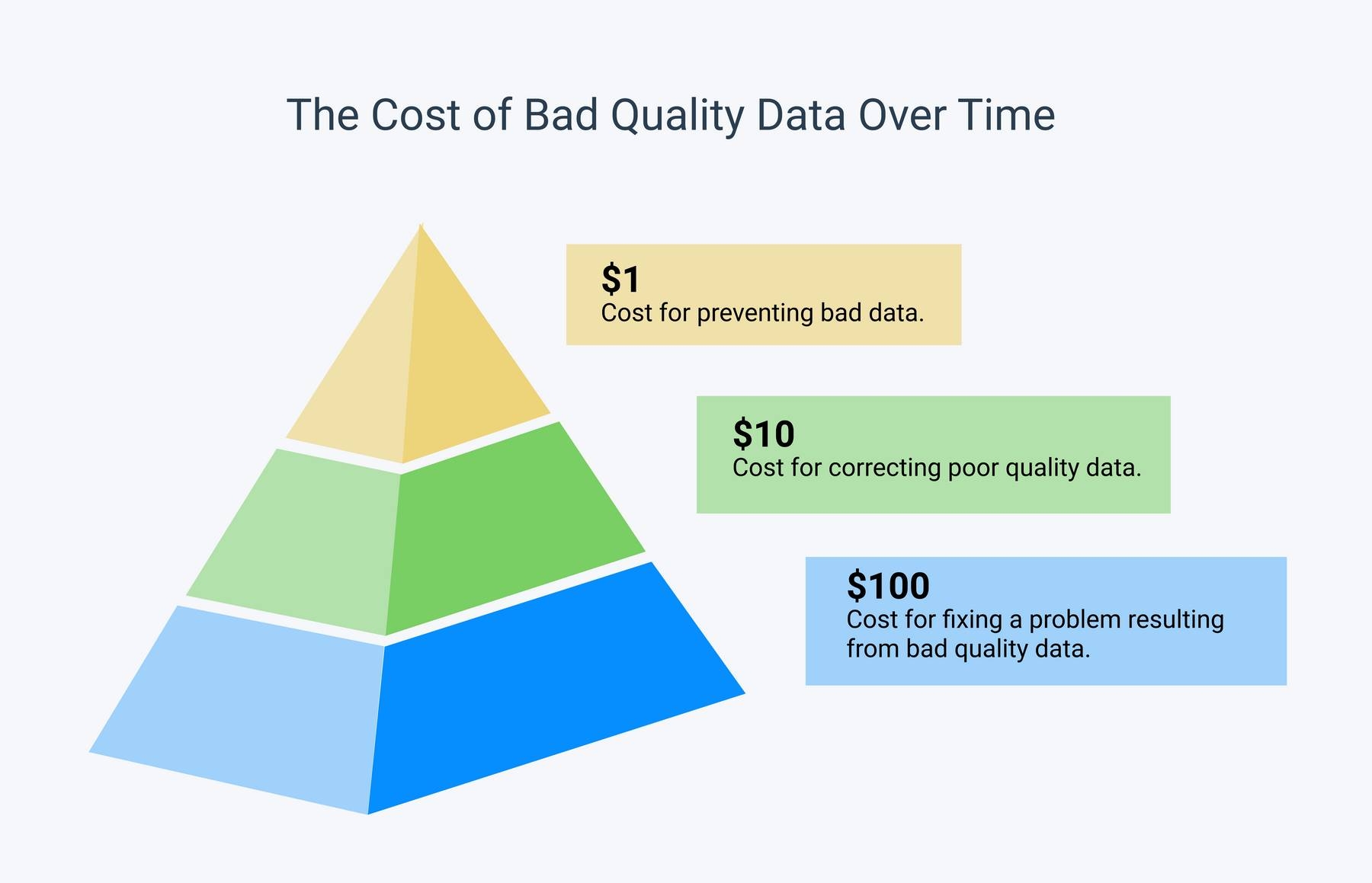

To illustrate what proper data preparation and, more specifically, data cleaning can do for your business, let’s look at the problem from a purely cost-to-fix perspective.

As you can see in the 1-100 principle, the cost of fixing bad data or eliminating ‘dirty’ data grows exponentially as the issue moves down the data analysis pipeline.

Notably, this cost-to-fix principle applies to both structured data and unstructured data.

In simplest terms, to quote MonkeyLearn’s CEO and Co-founder Raúl Garreta, “If your downstream process receives garbage as input data, the quality of your results will also be bad.”

Future-Proofing Your Results

According to Talend, a provider of cloud-native, self-service data preparation tools, data preparation will gain even greater importance for businesses as storage standards move to cloud-based models.

The most significant benefits of combining data preparation with the cloud include improved scalability, future proofing, and easier access and collaboration.

- Improved Scalability - Unhampered by a need for physical storage, your data preparation process can be developed to custom fit the now unlimited scale that your data occupies.

- Future Proofing - aka backward compatibility, meaning any upgrades to your data preparation process can be applied in real time to all incoming and previously collected data.

- Easier Access and Collaboration - Keeping your data on the cloud will allow for more intuitive data prep requiring less hard-coding and no manual technical installation, improving accessibility and thus allowing for greater collaboration.

Improving Cross-Team Collaboration

In the future, data prep won’t just be for data scientists. One of the greatest problems that modern companies face is a lack of data preparation-capable employees.

Your technical employees can’t be everywhere at once, and for this reason data preparation tends to either get put on the backburner or logjam the data cleaning process as a whole.

How can we fix this while improving collaboration? The best next step would be to make data preparation more accessible, so that business intelligence teams, business analytics professionals and all others can chip in to the data preparation approach as it is developed.

This type of accessibility is becoming a reality through no-code, self-service data preparation tools.

These tools can be used in concert within a comprehensive data preparation approach to reduce employee workload.

Now that we have a grasp on the growing importance of data preparation, let’s get into the thick of it by outlining the key steps in a data prep process.

Data Preparation Steps

While every data prep approach should be customized to best fit the company it is designed for, here is a brief outline of some common data preparation steps.

We can break down data prep into four essential steps: discovering, cleansing and validating, enriching, and publishing your data.

Let’s look at the best approaches for each step.

1. Discover Your Data

You can only improve your data prep practices if you know what you have. Data discovery has become a top spending priority, with spending on data discovery tools predicted to grow 2.5x faster than spending on traditional IT tools.

‘Discovering’ your data simply means becoming more familiar with it. Relevant questions might include ‘what do I want to learn from my data’ and ‘how am I collecting it’.

Making sure you have the correct data gathering approach is key to successful data analysis.
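
As a first pass at getting familiar with a dataset, a small profiling routine can report which fields exist, how often they are empty, and what kinds of values they hold. This is only a sketch; the `profile` function and the sample records are hypothetical.

```python
from collections import Counter


def profile(records):
    """Summarize each field: how often it appears, how often it is empty, value types."""
    summary = {}
    for record in records:
        for field, value in record.items():
            stats = summary.setdefault(
                field, {"count": 0, "missing": 0, "types": Counter()}
            )
            stats["count"] += 1
            if value in ("", None):
                stats["missing"] += 1
            else:
                stats["types"][type(value).__name__] += 1
    return summary


# Made-up sample records with mixed formats.
records = [
    {"customer": "Alice", "score": "70%"},
    {"customer": "Bob", "score": ""},
    {"customer": "Carol", "score": 0.95},
]

report = profile(records)
for field, stats in report.items():
    print(field, stats["count"], stats["missing"], dict(stats["types"]))
```

A report like this makes mixed formats (here, a string and a float in the same field) visible before any cleaning starts.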

2. Cleanse and Validate Data

This is essentially what we have been talking about throughout this article. Cleaning your data and fixing any errors is usually the biggest step in any data preparation process.

This means standardizing the data (i.e., making sure its format is understood), removing extraneous or unnecessary values, and filling in any missing values. Here is where data preparation tools are of the most use, as they can detect inefficiencies and correct improper formatting.
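
The three actions named above (standardize, remove extraneous values, fill in missing values) can be sketched in a few lines of plain Python. The records, field names, and the choice of the median as a fill value are all assumptions made for this example.

```python
from statistics import median

# Made-up raw records: inconsistent formats, an extraneous field, a missing value.
raw_records = [
    {"customer": " Alice ", "satisfaction": "70%", "debug_id": "x1"},
    {"customer": "BOB", "satisfaction": ".95", "debug_id": "x2"},
    {"customer": "carol", "satisfaction": "", "debug_id": "x3"},
]

KEEP_FIELDS = ("customer", "satisfaction")  # everything else is treated as extraneous


def parse_score(raw):
    """Return a 0-1 fraction, or None when the value is missing."""
    text = raw.strip()
    if not text:
        return None
    if text.endswith("%"):
        return float(text[:-1]) / 100
    return float(text)


def cleanse(records):
    cleaned = []
    for record in records:
        row = {field: record.get(field, "") for field in KEEP_FIELDS}  # drop extra fields
        row["customer"] = row["customer"].strip().title()              # standardize names
        row["satisfaction"] = parse_score(row["satisfaction"])         # standardize numbers
        cleaned.append(row)

    known = [row["satisfaction"] for row in cleaned if row["satisfaction"] is not None]
    # Assumption: filling gaps with the median of known values is acceptable here.
    fill_value = median(known) if known else None
    for row in cleaned:
        if row["satisfaction"] is None:
            row["satisfaction"] = fill_value
    return cleaned


clean_records = cleanse(raw_records)
for row in clean_records:
    print(row)
```

Whether to fill a gap with the median, another estimate, or nothing at all depends on the analysis that follows, so that choice is worth revisiting for each dataset.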

3. Enrich Data

Here is where your data preparation approach matters most. Based on the now-better-defined objectives you landed on in the discovery step, you can now enrich (meaning improve) your data by adding whatever you are missing.

So, say you are searching for further insight into any problems your customers are having with your product’s functionality, for example, how well your vacuum’s battery is performing for customers.

You would enrich your customer support data by pairing it with customer review data, especially noting any review that mentions the battery. Now you have a comprehensive picture of how the battery is affecting your customers’ happiness with your vacuum.
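
A minimal sketch of that pairing, assuming both datasets share a hypothetical `customer_id` field, might look like this. All records are made up.

```python
# Made-up sample data; "customer_id" and the other field names are hypothetical.
support_tickets = [
    {"customer_id": 1, "issue": "Battery died after 20 minutes"},
    {"customer_id": 2, "issue": "Brush roll is jammed"},
]
reviews = [
    {"customer_id": 1, "stars": 2, "text": "Great suction but the battery is weak"},
    {"customer_id": 3, "stars": 5, "text": "Works perfectly"},
]


def enrich(tickets, reviews):
    """Attach each customer's reviews to their tickets and flag battery mentions."""
    reviews_by_customer = {}
    for review in reviews:
        reviews_by_customer.setdefault(review["customer_id"], []).append(review)

    enriched = []
    for ticket in tickets:
        matched = reviews_by_customer.get(ticket["customer_id"], [])
        enriched.append({
            **ticket,
            "reviews": matched,
            "review_mentions_battery": any(
                "battery" in review["text"].lower() for review in matched
            ),
        })
    return enriched


enriched_tickets = enrich(support_tickets, reviews)
for ticket in enriched_tickets:
    print(ticket["customer_id"], ticket["review_mentions_battery"])
```

Tickets without a matching review are kept with an empty `reviews` list, so no support data is lost in the process.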

4. Publish Data

Once you’ve prepared clean, helpful data, it’s time to store it. We recommend finding a future-cognizant, cloud-based storage approach so you can always change your data prep parameters for further analysis in the future.

Speaking of being future-cognizant, let’s wrap up with a list of prominent data preparation solutions that can aid any data prep approach.

Data Preparation Tools

Here are some of the most popular data preparation tools:

1. Talend

Talend’s self-service data preparation tool is a fast and accessible first step for any business seeking to improve its data prep approach. And they offer a series of informative basic guides to data prep!

2. OpenRefine

Combining a powerful, no-code GUI with easy Python compatibility, OpenRefine is a favorite for no-code and Python-literate users alike. Regardless of your coding skill level, its complex data filtering capacity can be a boon to any business. Plus, it’s free.

3. Paxata

Alternatively, Paxata offers a sophisticated, ‘data governing’ approach to data preparation, promising to clean and effectively govern datasets at scale.

4. Trifacta

With its sleek interface and innovative data wrangling approach, Trifacta hopes to revolutionize data preparation by promoting accessibility and engendering collaboration.

5. Ataccama

Ataccama provides a sleek self-service AI solution for companies that want to prioritize future-proofing their data archives.

Takeaways

Data preparation has a bad reputation, as evidenced by a study in which 74% of data scientists said it is the worst part of their jobs.

But data prep’s importance cannot be overstated: it has massive potential benefits for future business decisions, and improper data prep can have massive budget consequences.

Luckily, no-code, UI-friendly solutions are the future of data preparation and will take the hassle out of the process.

Once you have a clean set of data, check out MonkeyLearn’s full array of text analysis tools, and schedule a free demo to see how we can help you transform your data into actionable insights!

Rachel Wolff

May 28th, 2021