What Is Text Mining? A Beginner's Guide

What is Text Mining?

Text mining (also known as text analysis) is the process of transforming unstructured text into structured data for easy analysis. Text mining uses natural language processing (NLP), allowing machines to understand human language and process it automatically.

Mine unstructured data for insights

For businesses, the large amount of data generated every day represents both an opportunity and a challenge. On the one side, data helps companies get smart insights on people’s opinions about a product or service. Think about all the potential ideas that you could get from analyzing emails, product reviews, social media posts, customer feedback, support tickets, etc. On the other side, there’s the dilemma of how to process all this data. And that’s where text mining plays a major role.

Like most things related to Natural Language Processing (NLP), text mining may sound like a hard-to-grasp concept. But the truth is, it doesn’t need to be. This guide will go through the basics of text mining, explain its different methods and techniques, and make it simple to understand how it works. You will also learn about the main applications of text mining and how companies can use it to automate many of their processes.

Let’s jump right into it!

Getting Started With Text Mining

Text mining is an automatic process that uses natural language processing to extract valuable insights from unstructured text. By transforming data into information that machines can understand, text mining automates the process of classifying texts by sentiment, topic, and intent.

Thanks to text mining, businesses are able to analyze large and complex sets of data in a simple, fast and effective way. At the same time, companies are taking advantage of this powerful tool to reduce some of their manual and repetitive tasks, saving their teams precious time and allowing customer support agents to focus on what they do best.

Let’s say you need to examine tons of reviews in G2 Crowd to understand what customers are praising or criticizing about your SaaS. A text mining algorithm could help you identify the most popular topics that arise in customer comments, and the way that people feel about them: are the comments positive, negative or neutral? You could also find out the main keywords mentioned by customers regarding a given topic.

In a nutshell, text mining helps companies make the most of their data, which leads to better data-driven business decisions.

At this point you may already be wondering, how does text mining accomplish all of this? The answer takes us directly to the concept of machine learning.

Machine learning is a subfield of AI that focuses on creating algorithms that enable computers to learn tasks based on examples. Machine learning models need to be trained with data, after which they’re able to make predictions automatically with a certain level of accuracy.

When text mining and machine learning are combined, automated text analysis becomes possible.

Going back to our previous example of SaaS reviews, let’s say you want to classify those reviews into different topics like UI/UX, Bugs, Pricing or Customer Support. The first thing you’d do is train a topic classifier model, by uploading a set of examples and tagging them manually. After being fed several examples, the model will learn to differentiate topics and start making associations as well as its own predictions. To obtain good levels of accuracy, you should feed your models a large number of examples that are representative of the problem you’re trying to solve.

Now that you’ve learned what text mining is, we’ll see how it differs from related terms, like text analysis and text analytics.

Difference between Text Mining, Text Analysis, and Text Analytics?

Text mining and text analysis are often used as synonyms. Text analytics, however, is a slightly different concept.

So, what’s the difference between text mining and text analytics?

In short, they both intend to solve the same problem (automatically analyzing raw text data) by using different techniques. Text mining identifies relevant information within a text and therefore provides qualitative results. Text analytics, however, focuses on finding patterns and trends across large sets of data, resulting in more quantitative results. Text analytics is usually used to create graphs, tables and other sorts of visual reports.

Text mining combines notions of statistics, linguistics, and machine learning to create models that learn from training data and can predict results on new information based on their previous experience.

Text analytics, on the other hand, uses results from analyses performed by text mining models, to create graphs and all kinds of data visualizations.

Choosing the right approach depends on what type of information is available. In most cases, both approaches are combined for each analysis, leading to more compelling results.

Methods and Techniques

There are different methods and techniques for text mining. In this section, we’ll cover some of the most frequent.

Basic Methods

Word frequency

Word frequency can be used to identify the most recurrent terms or concepts in a set of data. Finding out the most mentioned words in unstructured text can be particularly useful when analyzing customer reviews, social media conversations or customer feedback.

For instance, if the words expensive, overpriced and overrated frequently appear on your customer reviews, it may indicate you need to adjust your prices (or your target market!).
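As a rough illustration, word frequency can be computed with nothing more than Python’s standard library. The reviews below are invented for the example, and `word_frequencies` is a hypothetical helper name, not part of any particular tool.

```python
import re
from collections import Counter

# Hypothetical reviews, invented for illustration only.
reviews = [
    "Great app but too expensive for small teams.",
    "Overpriced. The features are nice, but it is expensive.",
    "Expensive and a bit overrated, support was friendly though.",
]

def word_frequencies(texts):
    """Count how often each lowercase word appears across all texts."""
    counts = Counter()
    for text in texts:
        counts.update(re.findall(r"[a-z']+", text.lower()))
    return counts

frequencies = word_frequencies(reviews)
# Show the five most frequent words with their counts.
print(frequencies.most_common(5))
```

In practice you would usually filter out very common words (the, is, but) before reading the counts, otherwise they tend to crowd out the terms you care about.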

Collocation

Collocation refers to a sequence of words that commonly appear near each other. The most common types of collocations are bigrams (a pair of words that are likely to go together, like get started, save time or decision making) and trigrams (a combination of three words, like within walking distance or keep in touch).

Identifying collocations — and counting them as one single word — improves the granularity of the text, allows a better understanding of its semantic structure and, in the end, leads to more accurate text mining results.

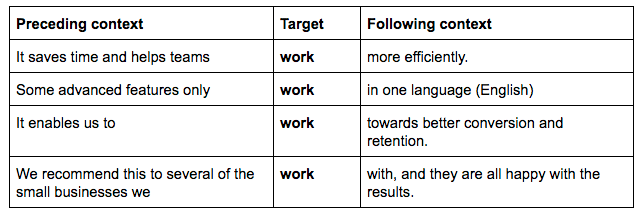
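A simple way to surface candidate bigrams is to count adjacent word pairs and keep the ones that repeat. The sketch below assumes made-up sentences and a hypothetical `bigram_counts` helper; raw frequency is only a crude proxy, and real systems typically use statistical association measures instead.

```python
import re
from collections import Counter

def bigram_counts(texts):
    """Count adjacent word pairs (bigrams) within each text."""
    counts = Counter()
    for text in texts:
        tokens = re.findall(r"[a-z']+", text.lower())
        # zip pairs every token with the one that follows it.
        counts.update(zip(tokens, tokens[1:]))
    return counts

# Hypothetical sentences, invented for illustration only.
texts = [
    "It is easy to get started and it will save time.",
    "The templates save time when you get started.",
    "Get started in minutes.",
]

bigrams = bigram_counts(texts)
# Keep only the pairs that appear at least twice.
frequent = [pair for pair, n in bigrams.items() if n >= 2]
print(frequent)
```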

Concordance

Concordance is used to recognize the particular context or instance in which a word or set of words appears. We all know that the human language can be ambiguous: the same word can be used in many different contexts. Analyzing the concordance of a word can help understand its exact meaning based on context.

For example, here are a few sentences extracted from a set of reviews including the word ‘work’:
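A concordance can be sketched in Python as a keyword-in-context listing. The review sentences here are made up for illustration, and `concordance` is a hypothetical helper rather than a library function.

```python
import re

def concordance(texts, keyword, window=3):
    """Return keyword-in-context lines with up to `window` words on each side."""
    lines = []
    for text in texts:
        tokens = re.findall(r"[\w']+", text)
        for i, token in enumerate(tokens):
            if token.lower() == keyword:
                left = " ".join(tokens[max(0, i - window):i])
                right = " ".join(tokens[i + 1:i + 1 + window])
                lines.append(f"{left} [{token}] {right}".strip())
    return lines

# Hypothetical reviews, invented for illustration only.
reviews = [
    "The app does not work on my phone.",
    "I use it at work every single day.",
    "Great work by the support team!",
]

for line in concordance(reviews, "work"):
    print(line)
```

Reading the surrounding words makes it clear that the same word refers to functionality in the first sentence, a workplace in the second, and effort in the third.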

Advanced Methods

Text Classification

Text classification is the process of assigning categories (tags) to unstructured text data. This essential task of Natural Language Processing (NLP) makes it easy to organize and structure complex text, turning it into meaningful data.

Thanks to text classification, businesses can analyze all sorts of information, from emails to support tickets, and obtain valuable insights in a fast and cost-effective way.

Below, we’ll refer to some of the most popular tasks of text classification – topic analysis, sentiment analysis, language detection, and intent detection.

Topic Analysis: helps you understand the main themes or subjects of a text, and is one of the main ways of organizing text data. For example, a support ticket saying my online order hasn’t arrived can be classified as Shipping Issues.

Sentiment Analysis: consists of analyzing the emotions that underlie any given text. Suppose you are analyzing a series of reviews about your mobile app. You may find out that the most frequently mentioned topics in those reviews are UI-UX or Ease of Use, but that’s not enough information to arrive at any conclusions. Sentiment analysis helps you understand the opinion and feelings in a text, and classify them as positive, negative or neutral. Sentiment analysis has a lot of useful applications in business, from analyzing social media posts to going through reviews or support tickets. In terms of customer support, for instance, you might be able to quickly identify angry customers and prioritize their problems first.

Language Detection: allows you to classify a text based on its language. One of its most useful applications is automatically routing support tickets to the right geographically located team. Automating this task is quite simple and helps teams save valuable time.

Intent Detection: you could use a text classifier to recognize the intentions or the purpose behind a text automatically. This can be particularly useful when analyzing customer conversations. For example, you could sift through different outbound sales email responses and identify the prospects which are interested in your product from the ones that are not, or the ones who want to unsubscribe.

Text Extraction

Text extraction is a text analysis technique that extracts specific pieces of data from a text, like keywords, entity names, addresses, emails, etc. By using text extraction, companies can avoid all the hassle of sorting through their data manually to pull out key information.

Most times, it can be useful to combine text extraction with text classification in the same analysis.

Below, we’ll refer to some of the main tasks of text extraction – keyword extraction, named entity recognition and feature extraction.

Keyword Extraction: keywords are the most relevant terms within a text and can be used to summarize its content. Utilizing a keyword extractor allows you to index data to be searched, summarize the content of a text or create tag clouds, among other things.

Named Entity Recognition: allows you to identify and extract the names of companies, organizations or persons from a text.

Feature Extraction: helps identify specific characteristics of a product or service in a set of data. For example, if you are analyzing product descriptions, you could easily extract features like color, brand, model, etc.

Why is Text Mining Important?

Individuals and organizations generate tons of data every day. Stats claim that almost 80% of the existing text data is unstructured, meaning it’s not organized in a predefined way, it’s not searchable, and it’s almost impossible to manage. In other words, it’s just not useful.

Being able to organize, categorize and capture relevant information from raw data is a major concern and challenge for companies. Text mining is crucial to this mission.

In a business context, unstructured text data can include emails, social media posts, chats, support tickets, surveys, etc. Sorting through all these types of information manually often results in failure. Not only because it’s time-consuming and expensive, but also because it’s inaccurate and impossible to scale.

Text mining, however, has proved to be a reliable and cost-effective way to achieve accuracy, scalability and quick response times. Here are some of its main advantages in more detail:

Scalability: with text mining it’s possible to analyze large volumes of data in just seconds. By automating specific tasks, companies can save a lot of time that can be used to focus on other tasks. This results in more productive businesses.

Real-time analysis: thanks to text mining, companies can prioritize urgent matters accordingly, including detecting a potential crisis and discovering product flaws or negative reviews in real time. Why is this so important? Because it allows companies to take quick action.

Consistent Criteria: when working on repetitive, manual tasks people are more likely to make mistakes. They also find it hard to maintain consistency and tend to analyze data subjectively. Let’s take tagging, for example. For most teams, adding categories to emails or support tickets is a time-consuming task that often leads to errors and inconsistencies. Automating this task not only saves precious time but also allows more accurate results and ensures that uniform criteria are applied to every ticket.

How Does Text Mining Work?

Text mining helps to analyze large amounts of raw data and find relevant insights. Combined with machine learning, it can create text analysis models that learn to classify or extract specific information based on previous training.

Even though text mining may seem like a complicated matter, it can actually be quite simple to get started with.

The first step to get up and running with text mining is gathering your data. Let’s say you want to analyze conversations with users through your company’s Intercom live chat. The first thing you’ll need to do is generate a document containing this data.

Data can be internal (interactions through chats, emails, surveys, spreadsheets, databases, etc) or external (information from social media, review sites, news outlets, and any other websites).

The second step is preparing your data. Text mining systems use several NLP techniques, like tokenization, parsing, lemmatization, stemming and stop word removal, to build the inputs of your machine learning model.
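To make the preparation step more concrete, here is a minimal sketch of two of those techniques, tokenization and stop word removal. The stop word list is a tiny hypothetical one chosen for the example; parsing, lemmatization and stemming are left out because they normally rely on dedicated linguistic resources.

```python
import re

# A tiny illustrative stop word list; real systems use much longer ones.
STOP_WORDS = {"the", "a", "an", "is", "are", "to", "and", "of", "my", "i", "it"}

def tokenize(text):
    """Split a text into lowercase word tokens."""
    return re.findall(r"[a-z0-9']+", text.lower())

def remove_stop_words(tokens):
    """Drop very common words that carry little topical meaning."""
    return [token for token in tokens if token not in STOP_WORDS]

def prepare(text):
    """Tokenize a text and remove stop words."""
    return remove_stop_words(tokenize(text))

print(prepare("My order is late and the tracking page is broken"))
```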

Then, it’s time for the text analysis itself. In this section, we’ll explain how the two most common methods for text mining actually work: text classification and text extraction.

Text Classification

Text classification is the process of assigning tags or categories to texts, based on their content.

Thanks to automated text classification it is possible to tag a large set of text data and obtain good results in a very short time, without needing to go through all the hassle of doing it manually. This has exciting applications in different areas.

Rule-based Systems

This type of text classification system is based on linguistic rules. By rules, we mean human-crafted associations between a specific linguistic pattern and a tag. Once the algorithm is coded with those rules, it can automatically detect the different linguistic structures and assign the corresponding tags.

Rules generally consist of references to syntactic, morphological and lexical patterns. They can also be related to semantic or phonological aspects.

For example, this could be a rule for classifying product descriptions based on the color of a product:

(Black | Gray | White | Blue) → Color

In this case, the system will assign the tag COLOR whenever it detects any of the above-mentioned words.
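The color rule above could be expressed in code roughly as follows. This is a sketch that assumes rules are stored as regular expressions; the `RULES` dictionary and `classify` function are hypothetical names used only for this example.

```python
import re

# Each rule maps a tag to a human-crafted pattern.
# \b makes sure we match whole words only ("Blackboard" does not match "black").
RULES = {
    "Color": re.compile(r"\b(black|gray|white|blue)\b", re.IGNORECASE),
}

def classify(text):
    """Return every tag whose pattern is found in the text."""
    return [tag for tag, pattern in RULES.items() if pattern.search(text)]

print(classify("Blue cotton T-shirt"))
print(classify("Stainless steel kettle"))
```

Adding a second rule is just another dictionary entry, which also hints at the scaling problem: every new pattern has to be checked against the ones that already exist.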

Rule-based systems are easy to understand, as they are developed and improved by humans. However, adding new rules to an algorithm often requires a lot of tests to see if they will affect the predictions of other rules, making the system hard to scale. Besides, creating complex systems requires specific knowledge of linguistics and of the data you want to analyze.

Machine Learning-based Systems

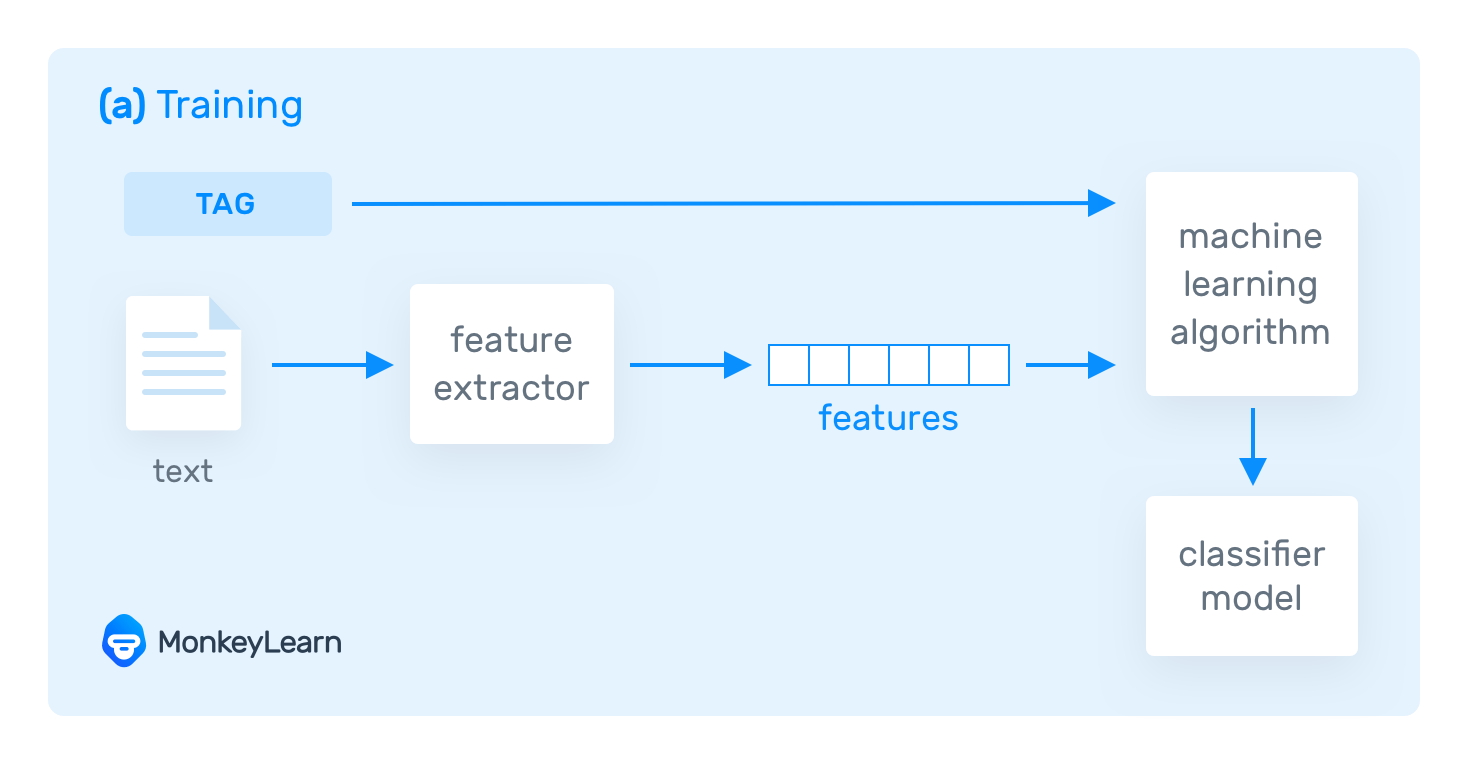

Text classification systems based on machine learning can learn from previous data (examples). To do that, they need to be trained with relevant examples of text — known as training data — that have been correctly tagged.

The training samples have to be consistent and representative, so that the model can make accurate predictions. But how does a text classifier actually work?

Machines need to transform the training data into something they can understand; in this case, vectors (a collection of numbers with encoded data). Vectors represent different features of the existing data. One of the most common approaches for vectorization is called bag of words, and consists of counting how many times each word from a predefined set of words appears in the text you want to analyze.
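Here is a minimal bag of words sketch. The vocabulary and the example sentence are hypothetical; in a real system the vocabulary is usually built from the training data.

```python
import re

# Hypothetical predefined set of words, chosen for illustration only.
VOCABULARY = ["app", "crash", "price", "support", "slow"]

def bag_of_words(text, vocabulary=VOCABULARY):
    """Return one count per vocabulary word, in vocabulary order."""
    tokens = re.findall(r"[a-z']+", text.lower())
    return [tokens.count(word) for word in vocabulary]

vector = bag_of_words("The app is slow, really slow, and support never replied")
print(vector)
```

Each position in the vector corresponds to one vocabulary word, so texts of any length end up as vectors of the same size. Words outside the vocabulary are simply ignored.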

The text data transformed into vectors, along with the expected predictions (tags), is fed into a machine learning algorithm, creating a classification model:

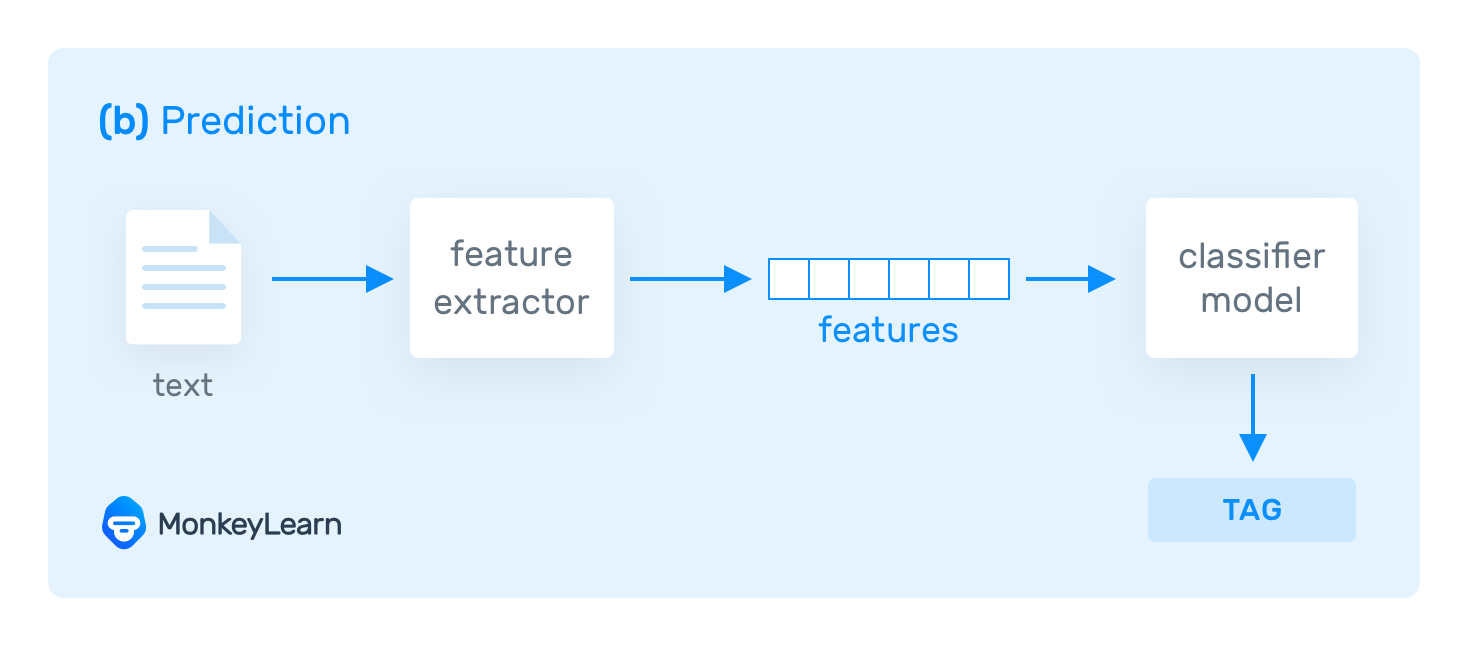

Then, the trained model can extract the relevant features of a new, unseen text and make its own predictions.

Machine Learning Algorithms

Naive Bayes family of algorithms (NB): they rely on Bayes’ theorem and probability theory to predict the tag of a text. In this case, vectors encode information based on the likelihood of words in a text belonging to any of the tags in the model. This probabilistic method can provide accurate results even when there is not much training data.

Support Vector Machines (SVM): this algorithm classifies vectors of tagged data into two different groups: one that contains most of the vectors that belong to a given tag, and another one with the vectors that do not belong to that tag. The results of this algorithm are often better than the results you get with Naive Bayes. However, it requires more computing power to train the model.

Deep learning: these algorithms are loosely inspired by the way the human brain works. By using millions of training examples, they generate very detailed representations of data and can create highly accurate machine learning-based systems.
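To give a feel for how the simplest of these algorithms works, here is a small Naive Bayes text classifier written from scratch. It is a sketch of the multinomial variant with add-one smoothing, not the implementation used by any specific product; the class name, training texts and tags are all invented for the example.

```python
import math
import re
from collections import Counter, defaultdict

def tokenize(text):
    return re.findall(r"[a-z']+", text.lower())

class NaiveBayesClassifier:
    """Multinomial Naive Bayes with add-one (Laplace) smoothing."""

    def fit(self, texts, tags):
        self.tag_counts = Counter(tags)           # number of examples per tag
        self.word_counts = defaultdict(Counter)   # word counts per tag
        for text, tag in zip(texts, tags):
            self.word_counts[tag].update(tokenize(text))
        self.vocabulary = {w for counts in self.word_counts.values() for w in counts}
        return self

    def predict(self, text):
        total_docs = sum(self.tag_counts.values())
        best_tag, best_score = None, -math.inf
        for tag, doc_count in self.tag_counts.items():
            # Score = log P(tag) + sum of log P(word | tag).
            score = math.log(doc_count / total_docs)
            total_words = sum(self.word_counts[tag].values())
            for word in tokenize(text):
                if word not in self.vocabulary:
                    continue  # ignore words never seen during training
                count = self.word_counts[tag][word]
                score += math.log((count + 1) / (total_words + len(self.vocabulary)))
            if score > best_score:
                best_tag, best_score = tag, score
        return best_tag

# Hypothetical, manually tagged training examples.
training_texts = [
    "The app crashes when I upload a file",
    "Export button throws an error",
    "The subscription is too expensive",
    "Please offer a cheaper plan",
]
training_tags = ["Bugs", "Bugs", "Pricing", "Pricing"]

model = NaiveBayesClassifier().fit(training_texts, training_tags)
print(model.predict("I get an error and the app crashes"))
```

Four examples are far too few for a real classifier; they are only enough to show the mechanics of training and prediction.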

Hybrid Systems

Hybrid systems combine rule-based systems with machine learning-based systems. They complement each other to increase the accuracy of the results.

Evaluation

The performance of a text classifier is measured through different parameters: accuracy, precision, recall and F1 score. Understanding these metrics will allow you to see how good your classifier model is at analyzing texts.

You can evaluate your classifier over a fixed testing set (that is, a set of data for which you already know the expected tags), or by using cross-validation. This is a process that repeatedly divides your training data into two subsets: a part of the data is used for training and the other part, for testing purposes.

This section will go through the different metrics to analyze the performance of your text classifier, and explain how cross-validation works:

Accuracy indicates the number of correct predictions that the classifier has made divided by the total number of predictions. However, accuracy alone is not always the best metric to evaluate the performance of a classifier. Sometimes, when categories are imbalanced (that is, when there are many more examples for one category than for others), you may experience an accuracy paradox: a model that almost always predicts the most common category will score a high accuracy simply because most of the data belongs to that category, even if it performs poorly on the others. When this occurs, it’s better to consider other metrics like precision and recall.

Precision evaluates the number of correct predictions made by the classifier, over the total number of predictions for a given tag (including both correct and incorrect predictions). A high precision metric indicates there were fewer false positives. It’s important to consider, though, that precision only measures the cases where the classifier predicts that a text belongs to a specific tag. Some tasks, like automated email responses, require models with a high level of precision, to deliver a response to a user only when it’s highly likely that the prediction is correct.

Recall indicates the number of texts that were predicted correctly, over the total number that should have been categorized with a given tag. A high recall metric means that there were fewer false negatives. This metric is particularly useful when you need to route support tickets to the right teams. You want to automatically route as many tickets as possible for a particular tag (for example Billing Issues) at the expense of getting an incorrect prediction along the way.

F1 score combines the parameters of precision and recall (it is their harmonic mean) to give you an idea of how well your classifier is working. This metric is a better indicator than accuracy to understand how good predictions are for all of the categories in your model.
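The four metrics can be computed directly from a list of expected tags and a list of predicted tags. The sketch below uses made-up data and a hypothetical `evaluate` helper, and calculates precision, recall and F1 for a single tag.

```python
def evaluate(expected, predicted, tag):
    """Compute accuracy plus precision, recall and F1 for one tag."""
    pairs = list(zip(expected, predicted))
    true_pos = sum(1 for e, p in pairs if e == tag and p == tag)
    false_pos = sum(1 for e, p in pairs if e != tag and p == tag)
    false_neg = sum(1 for e, p in pairs if e == tag and p != tag)

    accuracy = sum(1 for e, p in pairs if e == p) / len(pairs)
    precision = true_pos / (true_pos + false_pos) if true_pos + false_pos else 0.0
    recall = true_pos / (true_pos + false_neg) if true_pos + false_neg else 0.0
    # F1 is the harmonic mean of precision and recall.
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {"accuracy": accuracy, "precision": precision, "recall": recall, "f1": f1}

# Hypothetical expected tags and model predictions for six tickets.
expected = ["Billing", "Billing", "Billing", "Shipping", "Shipping", "Other"]
predicted = ["Billing", "Billing", "Shipping", "Billing", "Shipping", "Other"]

scores = evaluate(expected, predicted, "Billing")
print(scores)
```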

Cross-validation

Cross-validation is frequently used to measure the performance of a text classifier. It consists of dividing the training data into different subsets, in a random way. For example, you could have 4 subsets of training data, each of them containing 25% of the original data.

Then, all of the subsets except one are used to train a text classifier. This text classifier is used to make predictions over the remaining subset of data (testing). After this, all the performance metrics are calculated ― comparing the prediction with the actual predefined tag ― and the process starts again, until all the subsets of data have been used for testing.

The last step is compiling the results of all subsets of data to obtain an average performance of each metric.
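The splitting logic described above can be sketched in a few lines. The `k_fold_splits` function is a hypothetical helper; the samples are just numbers standing in for tagged texts, and the training and scoring steps are left as comments.

```python
import random

def k_fold_splits(samples, k=4, seed=0):
    """Yield (training, testing) pairs, using each of k random subsets once for testing."""
    shuffled = samples[:]
    random.Random(seed).shuffle(shuffled)
    # Deal the shuffled samples into k subsets of (almost) equal size.
    folds = [shuffled[i::k] for i in range(k)]
    for i, testing in enumerate(folds):
        training = [s for j, fold in enumerate(folds) if j != i for s in fold]
        yield training, testing

samples = list(range(20))  # placeholders for 20 tagged texts
for training, testing in k_fold_splits(samples, k=4):
    # Here you would train a classifier on `training`, predict on `testing`,
    # and store the metrics so they can be averaged at the end.
    print(len(training), len(testing))
```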

Text Extraction

As we mentioned earlier, text extraction is the process of obtaining specific information from unstructured data.

This has a myriad of applications in business. For instance, you could use it to extract company names out of a LinkedIn dataset, or to identify different features on product descriptions. Let’s say you have several finance contracts to analyze: you could easily scan this data and use a text extractor to obtain relevant information like who are lessors and who are lessees. All this, without actually having to read the data.

Text extraction can be done using different methods. Let’s have a look at the most common and reliable approaches:

Regular Expressions

Regular expressions define a sequence of characters that can be associated with a tag. Each of these patterns is equivalent to a ‘rule’ in the rule-based approach for text classification.

Every time the text extractor detects a match with a pattern, it assigns the corresponding tag.

If you establish the right rules to identify the type of information you want to obtain, it’s easy to create text extractors that deliver high-quality results. However, this method can be hard to scale, especially when patterns become more complex and require many regular expressions to determine an action.
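As an example, a regex-based extractor might look like this. Both patterns are simplified assumptions: the email pattern does not cover every valid address, and the five-digit order number format is hypothetical.

```python
import re

# Each tag is associated with one pattern.
PATTERNS = {
    "Email": re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+"),
    "OrderNumber": re.compile(r"#\d{5}\b"),  # hypothetical order number format
}

def extract(text):
    """Return (tag, matched text) for every pattern match found in the text."""
    return [
        (tag, match.group())
        for tag, pattern in PATTERNS.items()
        for match in pattern.finditer(text)
    ]

ticket = "Order #48213 never arrived. Please reply to jane.doe@example.com."
print(extract(ticket))
```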

Conditional Random Fields

Conditional Random Fields (CRF) is a statistical approach that can be used for text extraction with machine learning. It creates systems that learn the patterns they need to extract, by weighing different features from a sequence of words in a text.

CRFs are capable of encoding much more information than Regular Expressions, enabling you to create more complex and richer patterns. They can also make generalizations based on what they’ve learned. On the downside, more in-depth NLP knowledge and more computing power are required in order to train the text extractor properly.

Evaluation

It is possible to evaluate text extractors by using the same performance metrics as text classification: accuracy, precision, recall and F1 score. However, these metrics only consider exact matches as true positives, leaving partial matches aside.

Let’s look at an example:

Suppose you create an address extractor. This could be an example of an exact match (true positive for the tag Address): ‘6818 Eget St., Tacoma’. However, the output could also be ‘6818 Eget St.’. In this case, even though it is a partial match, it should not be considered as a false positive for the tag Address.

To include these partial matches, you should use a performance metric known as ROUGE (Recall-Oriented Understudy for Gisting Evaluation). ROUGE is a family of metrics that can be used to better evaluate the performance of text extractors than traditional metrics such as accuracy or F1. How do they work? They calculate the lengths and number of sequences overlapping between the original text and the extraction (extracted text).

The ROUGE metrics (the parameters you would use to compare overlapping between the two texts mentioned above) need to be defined manually. That way, you can define ROUGE-n metrics (where n is the length of the units), or a ROUGE-L metric if your intent is to compare the longest common subsequence.
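To see how partial matches get credit, here is a simplified sketch of ROUGE-n and of the longest common subsequence that ROUGE-L builds on, applied to the address example. The function names are hypothetical and the tokenization is deliberately naive; established ROUGE implementations include more options than this.

```python
import re
from collections import Counter

def ngrams(tokens, n):
    """Count every run of n consecutive tokens."""
    return Counter(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))

def rouge_n(reference, candidate, n=1):
    """Return (recall, precision) of n-gram overlap between two token lists."""
    ref, cand = ngrams(reference, n), ngrams(candidate, n)
    overlap = sum((ref & cand).values())
    recall = overlap / sum(ref.values()) if ref else 0.0
    precision = overlap / sum(cand.values()) if cand else 0.0
    return recall, precision

def lcs_length(a, b):
    """Length of the longest common subsequence, the quantity behind ROUGE-L."""
    table = [[0] * (len(b) + 1) for _ in range(len(a) + 1)]
    for i, x in enumerate(a, 1):
        for j, y in enumerate(b, 1):
            if x == y:
                table[i][j] = table[i - 1][j - 1] + 1
            else:
                table[i][j] = max(table[i - 1][j], table[i][j - 1])
    return table[len(a)][len(b)]

expected = re.findall(r"\w+", "6818 Eget St., Tacoma")
extracted = re.findall(r"\w+", "6818 Eget St.")

recall, precision = rouge_n(expected, extracted, n=1)
print(recall, precision, lcs_length(expected, extracted))
```

With exact-match scoring the partial address would count as a miss, whereas here it recovers three of the four expected words.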

Use Cases and Applications

Text mining makes it simple to analyze raw data on a large scale. This is a unique opportunity for companies, which can become more effective by automating tasks and make better business decisions thanks to relevant and actionable insights obtained from the analysis.

The applications of text mining are endless and span a wide range of industries. Whether you work in marketing, product, customer support or sales, you can take advantage of text mining to make your job easier. Just think of all the repetitive and tedious manual tasks you have to deal with daily. Now think of all the things you could do if you just didn’t have to worry about those tasks anymore.

Text mining makes teams more efficient by freeing them from manual tasks and allowing them to focus on the things they do best. You can let a machine learning model take care of tagging all the incoming support tickets, while you focus on providing fast and personalized solutions to your customers.

Another way in which text mining can be useful for work teams is by providing smart insights. With most companies moving towards a data-driven culture, it’s essential that they’re able to analyze information from different sources. What if you could easily analyze all your product reviews from sites like Capterra or G2 Crowd? You’ll be able to get real-time knowledge of what your users are saying and how they feel about your product.

In this section, we’ll describe how text mining can be a valuable tool for customer service and customer feedback.

Customer Service

Customer service should be at the core of every business. After all, a staggering 96% of customers consider it an important factor when it comes to choosing a brand and staying loyal to it.

People value quick and personalized responses from knowledgeable professionals, who understand what they need and value them as customers. But how can customer support teams meet such high expectations while being burdened with never-ending manual tasks that take time? Well, they could use text mining with machine learning to automate some of these time-consuming tasks.

Here are four ways in which customer service teams can benefit from text mining:

Automate your ticket tagging process

Every complaint, request or comment that a customer support team receives means a new ticket. And every single ticket needs to be categorized according to its subject.

Tagging is a routine and simple task. If done manually, it requires a person to read each ticket and assign a corresponding tag. But here’s the thing: tagging is repetitive, boring and time-consuming, and above all, it’s not entirely reliable, as criteria for tagging may not be consistent over time or even within the members of the same team.

That’s what makes automated ticket tagging such an exciting solution. Text mining makes it possible to identify topics and tag each ticket automatically. For example, when faced with a ticket saying my order hasn’t arrived yet, the model will automatically tag it as Shipping Issues.

You will need to invest some time training your machine learning model, but you’ll soon be rewarded with more time to focus on delivering amazing customer experiences.

Automate your ticket routing and triage process

Besides tagging the tickets that arrive every day, customer service teams need to route them to the team that is in charge of dealing with those issues. It is possible to do that when the volume of tickets is small. But, what if you receive hundreds of tickets every day? Manually routing tickets becomes costly and it’s impossible to scale.

Using a text mining model allows you to automatically route and triage tickets to the appropriate person or area, based on different factors like:

The topic of the ticket: for example, a problem related to payment, would go to the area responsible for billing and payment.

The ticket’s language: if the company has teams across the world, the text mining model can identify the language and route the ticket to the appropriate geographical zone.

The complexity of the issue: the ticket can be routed to a person designated to handle specific issues. For example, a ticket sent by a high-value client would be automatically routed to the key account manager in charge of that client.

Automating the process of ticket routing improves the response time and eventually leads to more satisfied customers.

Detect the urgency of a ticket

When tickets start to pile up, it’s crucial that teams start prioritizing them based on their urgency. However, assessing the urgency of every ticket can end up killing your productivity. So, why not train a text mining model to detect urgency on a given ticket automatically?

By identifying expressions that denote urgency, like as soon as possible or right away, the model can detect the most critical tickets and tag them as Priority.
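A trained model would learn these signals from examples. As a much simpler stand-in, the sketch below uses a keyword rule instead of machine learning, just to show the input and output of such a step. The phrases asap and urgent are assumptions added for the example, and `tag_priority` is a hypothetical helper.

```python
import re

# Expressions that suggest urgency. "asap" and "urgent(ly)" are illustrative additions.
URGENCY_PATTERN = re.compile(
    r"\b(as soon as possible|right away|asap|urgent(?:ly)?)\b",
    re.IGNORECASE,
)

def tag_priority(ticket):
    """Return "Priority" if the ticket contains an urgency expression, else None."""
    return "Priority" if URGENCY_PATTERN.search(ticket) else None

print(tag_priority("Our checkout is down, please fix it as soon as possible"))
print(tag_priority("How do I change my avatar?"))
```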

Ticket analytics

When it comes to measuring the performance of a customer service team, there are several KPIs to take into consideration. First response times, average times of resolution and customer satisfaction (CSAT) are some of the most important metrics.

Text mining can be very useful to analyze interactions with customers through different channels, like chat conversations, support tickets, emails, and customer satisfaction surveys.

By performing aspect-based sentiment analysis, you can examine the topics being discussed (such as service, billing or product) and the feelings that underlie the words (are the interactions positive, negative, neutral?).

Customer Feedback

The Voice of Customer (VOC) is an important source of information to understand the customer’s expectations, opinions, and experience with your brand. Monitoring and analyzing customer feedback ― either customer surveys or product reviews ― can help you discover areas for improvement, and provide better insights related to your customer’s needs.

But how can you go through tons of open-ended responses in a fast and scalable way? The answer, once again, is text mining. Let’s take a closer look at some of the possible applications of text mining for customer feedback analysis:

Analyzing NPS Responses

Net Promoter Score (NPS) is one of the most popular customer satisfaction surveys. The first part of the survey asks the question: “How likely are you to recommend [brand] to a friend?” and needs to be answered with a score from 0 to 10. The results allow classifying customers into promoters, passives, and detractors.
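The grouping of scores can be written down in a few lines. This sketch assumes the conventional NPS cut-offs (9 to 10 for promoters, 7 to 8 for passives, 0 to 6 for detractors); the survey scores are invented for the example.

```python
def nps_category(score):
    """Map a 0-10 survey score to promoter, passive or detractor."""
    if not 0 <= score <= 10:
        raise ValueError("NPS scores must be between 0 and 10")
    if score >= 9:
        return "promoter"
    if score >= 7:
        return "passive"
    return "detractor"

def net_promoter_score(scores):
    """Percentage of promoters minus percentage of detractors."""
    categories = [nps_category(score) for score in scores]
    promoters = categories.count("promoter")
    detractors = categories.count("detractor")
    return 100 * (promoters - detractors) / len(scores)

# Hypothetical survey scores.
scores = [10, 9, 8, 7, 6, 3, 10, 9]
print(net_promoter_score(scores))
```

The number alone says little about why customers answered the way they did, which is where the open-ended follow-up question comes in.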

The second part of the NPS survey consists of an open-ended follow-up question, that asks customers about the reason for their previous score. This answer provides the most valuable information, and it’s also the most difficult to process. Going through and tagging thousands of open-ended responses manually is time-consuming, not to mention inconsistent.

Text mining can help you analyze NPS responses in a fast, accurate and cost-effective way. By using a text classification model, you could identify the main topics your customers are talking about. You could also extract some of the relevant keywords that are being mentioned for each of those topics. Finally, you could use sentiment analysis to understand how positively or negatively clients feel about each topic.

Analyzing Customer Surveys

Text mining can be useful to analyze all kinds of open-ended surveys such as post-purchase surveys or usability surveys. Whether you receive responses via email or online, you can let a machine learning model help you with the tagging process. That way, you will save time and tagging will be more consistent.

Analyzing Product Reviews

Product reviews have a powerful impact on your brand image and reputation. In fact, 90% of people trust online reviews as much as personal recommendations. Impressive, right? Keeping track of what people are saying about your product is essential to understand the things that your customers value or criticize.

However, the idea of going through hundreds or thousands of reviews manually is daunting. Fortunately, text mining can perform this task automatically and provide high-quality results.

Let’s say you have just launched a new mobile app and you need to analyze all the reviews on the Google Play Store. By using a text mining model, you could group reviews into different topics like design, price, features, performance. You could also add sentiment analysis to find out how customers feel about your brand and various aspects of your product.

Analyzing product reviews with machine learning provides you with real-time insights about your customers, helps you make data-based improvements, and can even help you take action before an issue turns into a crisis.

Wrap-up

Text mining is helping companies become more productive, gain a better understanding of their customers, and use insights to make data-driven decisions.

Many time-consuming and repetitive tasks can now be replaced by algorithms that learn from examples to achieve faster and highly accurate results. The possibility of analyzing large sets of data and using different techniques, such as sentiment analysis, topic labeling or keyword detection, leads to enlightening observations about what customers think and feel about a product.

And best of all, this technology is accessible to people in all industries: not just to those with programming skills, but also to those who work in marketing, sales, customer service, and production.
